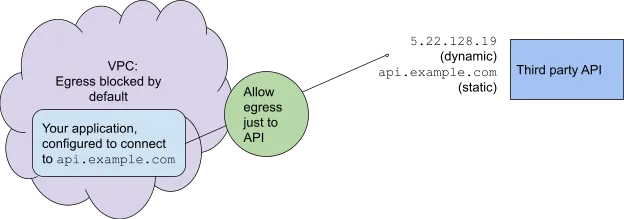

When you build a secure application, you often deny it permission to connect out of its Virtual Private Cloud (VPC). But sometimes you need to open up a little, for example, if your application needs to reach a third-party API.

Gateway to Domain, Lovecraft-style. Credit: StableDiffusion

The usual way is to allow egress only to the relevant IP addresses, configured with the Firewall on Google Cloud Platform (GCP) or with Network ACLs on AWS. But IP addresses can change over time, while the Fully Qualified Domain Name (FQDN), say api.example.com, provides a stable, publicly known endpoint. Most applications are configured to access an FQDN rather than an IP address.

In this article, I will review ways to permit egress only to a specific FQDN, with the advantages and disadvantages of each approach.

We will look for options that are inexpensive, easy to maintain, robust, and simple.

We will also look at options with the extra feature of Kubernetes-awareness. For example, if your application runs in Kubernetes, you may want to allow egress from one Kubernetes namespace but not from others.

We may also want control at the level of individual domains, since a single IP address can front multiple domains. Most of the solutions here do not account for that: if you allow access to a domain at a given IP address, they allow egress to every domain behind that address. This is often not a security problem, as the domains at a single IP address are usually controlled by one entity. But name-based virtual hosting does allow for this scenario, so we will also present some solutions that can tell apart domains sharing an IP address.

The options I review here are:

- Squid, as an example of a self-managed proxy

- AWS Network Firewall, with deep packet inspection, which allows control down to the level of the domain, even with virtual hosting.

- A new feature in Google Firewall, which gives FQDN control with the convenience of a serverless service

- The new Google Secure Web Gateway, which adds granular control down to the level of the domain and even the URL.

- Kubernetes-aware solutions: Cilium and Istio.

(A note on OSI layers: Because domain names and DNS belong to the “application layer” of the OSI network model, Layer 7, FQDN Egress Control is sometimes called “Layer 7 Egress Control.” In contrast, IP addresses belong to Layer 3, which is what typical firewalls and Network ACLs control.)

Implementing it yourself

First, let’s consider how we might implement this ourselves. I don’t recommend it, but it clarifies what these solutions are doing under the hood.

Even though the application is configured with the FQDN of the third-party API, your solution needs to block packets at the level of the IP address, since every packet is addressed by IP, not by name. Domain names matter only before the connection is attempted, when the client looks up the IP address for the domain name.

Note also that a single FQDN can correspond to multiple IP addresses. This is not a problem: a normal DNS lookup returns them, so you can allow access to the whole list.

You have a Firewall or Network ACL that blocks all traffic but the relevant IP addresses.

You write and deploy an application that checks DNS periodically to find the current IP addresses for the API’s FQDN, api.example.com. (A good low-cost way to run this is GCP Cloud Functions or AWS Lambda on a periodic trigger.) In the rare case that the IP addresses have changed, your application updates the Firewall or Network ACL.
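The core of such a sync job is small. The sketch below, a minimal illustration rather than a production tool, resolves each FQDN with the standard library and compares the answers with the IP addresses the firewall currently allows. Reading and writing the actual Firewall rule or Network ACL is left out on purpose: that part depends on your cloud SDK, and the function names here (`resolve_ipv4`, `plan_allowlist_update`) are hypothetical.

```python
import socket
from typing import Callable, Iterable, Set, Tuple


def resolve_ipv4(fqdn: str) -> Set[str]:
    """Return every IPv4 address that a normal DNS lookup gives for fqdn."""
    infos = socket.getaddrinfo(fqdn, 443, family=socket.AF_INET, type=socket.SOCK_STREAM)
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the IP.
    return {info[4][0] for info in infos}


def plan_allowlist_update(
    fqdns: Iterable[str],
    current_ips: Set[str],
    resolve: Callable[[str], Set[str]] = resolve_ipv4,
) -> Tuple[Set[str], Set[str]]:
    """Compare fresh DNS answers with the IPs the firewall allows today.

    Returns (to_add, to_remove). In the usual case nothing has changed,
    both sets are empty, and the caller can skip the firewall API call.
    """
    wanted: Set[str] = set()
    for fqdn in fqdns:
        wanted |= resolve(fqdn)  # one FQDN may map to several IPs
    return wanted - current_ips, current_ips - wanted
```

On each periodic trigger, the function would read the current allowlist from the firewall rule, call `plan_allowlist_update(["api.example.com"], current)`, and push a change only if either set is non-empty. One design choice worth considering is delaying removals for a while, since clients may still hold a cached DNS answer that points at an old address.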

Self-managed forward web proxies

One “classic” solution to FQDN Egress Control is a forward web proxy, and Squid is the best-known open-source option. You use your cloud’s routing service to direct all outbound traffic through a VM that runs the Squid proxy; Squid checks the requested domain name against an allowlist ACL and forwards the request only if there is a match.
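To make the allowlist check concrete, here is a small Python function that roughly mirrors the matching rule Squid documents for `dstdomain` ACLs: an entry with a leading dot (such as `.example.com`) matches the domain and all its subdomains, while an entry without one matches only that exact name. This is an illustration of the decision the proxy makes, not Squid’s code or configuration syntax; the function name is hypothetical.

```python
from typing import Iterable


def domain_allowed(host: str, acl: Iterable[str]) -> bool:
    """Return True if host matches an allowlist entry, dstdomain-style.

    ".example.com" matches example.com and any subdomain of it;
    "api.example.com" matches only that exact name.
    """
    host = host.lower().rstrip(".")  # normalize case and a trailing root dot
    for entry in acl:
        entry = entry.lower()
        if entry.startswith("."):
            # Leading dot: the bare domain or anything ending in ".domain".
            if host == entry[1:] or host.endswith(entry):
                return True
        elif host == entry:
            return True
    return False
```

Note that the suffix check includes the leading dot, so `notpartner.example.net` does not sneak past an entry for `.partner.example.net`.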

Squid is available in the AWS Marketplace; see this architecture discussion. It is also available in the GCP Marketplace; see this networking setup. See also this discussion of FQDN egress control in Squid and other proxies, like DiscrimiNAT and Aviatrix.

Proxies like Squid running on a VM carry a maintenance overhead, for example in upgrading VM operating systems and dealing with crashes. Because the entire flow of network traffic passes through one VM (unless you go to the additional effort of setting up a load-balanced deployment), the load can be heavy, which endangers robustness and may require the expense of a larger VM running 24x7, even when not fully needed.

AWS Network Firewall

AWS Network Firewall does deep packet inspection and so gains more filtering power. This means that it is not strictly comparable to Google Firewall, which more closely resembles AWS Network ACLs.

AWS Network Firewall supports FQDN Egress Control with stateful domain list rule groups. For HTTPS traffic, it reads the Server Name Indication (SNI) that the client sends in the TLS handshake, so it can distinguish between domains in virtual hosting scenarios.
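Why does SNI make domain-level filtering possible without decrypting traffic? The client puts the host name in plaintext in its very first TLS message, the ClientHello. The sketch below parses that field out of a single TLS record, following the record, handshake, and `server_name` extension layouts from the TLS specifications. It only illustrates the idea; it is not how AWS Network Firewall is implemented, and the function names are hypothetical. `sample_client_hello` uses Python’s `ssl` memory BIOs to produce a real ClientHello locally, with no network access.

```python
import ssl
import struct
from typing import Iterable, Optional


def extract_sni(record: bytes) -> Optional[str]:
    """Return the SNI host name from one TLS record holding a ClientHello,
    or None if it has no SNI or the bytes are malformed."""
    try:
        # Record header: content type (1 byte, 0x16 = handshake), version (2), length (2).
        if record[0] != 0x16:
            return None
        (rec_len,) = struct.unpack("!H", record[3:5])
        body = record[5:5 + rec_len]
        # Handshake header: type (1 byte, 0x01 = ClientHello), length (3).
        if body[0] != 0x01:
            return None
        pos = 4 + 2 + 32                      # header, legacy version, random
        pos += 1 + body[pos]                  # session_id (1-byte length)
        (cs_len,) = struct.unpack("!H", body[pos:pos + 2])
        pos += 2 + cs_len                     # cipher_suites (2-byte length)
        pos += 1 + body[pos]                  # compression_methods (1-byte length)
        (ext_total,) = struct.unpack("!H", body[pos:pos + 2])
        pos += 2
        end = pos + ext_total
        while pos + 4 <= end:
            ext_type, ext_len = struct.unpack("!HH", body[pos:pos + 4])
            pos += 4
            if ext_type == 0x0000:            # server_name extension
                # list length (2), name_type (1, 0 = host_name), name length (2), name
                if body[pos + 2] != 0x00:
                    return None
                (name_len,) = struct.unpack("!H", body[pos + 3:pos + 5])
                return body[pos + 5:pos + 5 + name_len].decode("ascii")
            pos += ext_len
        return None
    except (IndexError, struct.error, UnicodeDecodeError):
        return None


def is_sni_allowed(record: bytes, allowlist: Iterable[str]) -> bool:
    """Allow the connection only if its SNI is on the domain allowlist."""
    sni = extract_sni(record)
    return sni is not None and sni.lower() in {d.lower() for d in allowlist}


def sample_client_hello(hostname: str) -> bytes:
    """Produce the ClientHello a Python client would send, without network I/O."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    incoming, outgoing = ssl.MemoryBIO(), ssl.MemoryBIO()
    tls = ctx.wrap_bio(incoming, outgoing, server_hostname=hostname)
    try:
        tls.do_handshake()  # no server reply yet, so it stops after sending
    except ssl.SSLWantReadError:
        pass
    return outgoing.read()
```

Two domains behind the same IP address produce ClientHellos that differ in exactly this field, which is what lets an SNI-aware firewall allow one and block the other.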

Network Firewall can be used together with Route 53 Resolver DNS Firewall, which blocks DNS queries, so that, for example, a DNS query for api.example.com from an application inside the VPC does not resolve to an IP address. But DNS Firewall does not actually prevent access to that IP address; Network Firewall provides that enforcement.

Network Firewall is a good choice, but can get expensive: it is intended for complex multi-network enterprise environments. (See my article at the DoiT Blog comparing the use cases for the many Firewall-like services on AWS.)

Google Firewall and Web Gateway

It’s easier to use a solution that is fully managed by the cloud provider, rather than managing the VM yourself. And Google is just now coming out with some solutions to make this happen. In a follow-up post, I’ll go into detail about how to use them, but here are the highlights for now.

Google Firewall has a new FQDN Objects feature, now in limited Preview. It queries Cloud DNS every 30 seconds to look up the current IP addresses of the outside service.

Yet another service in limited Preview, Secure Web Gateway, also allows control at the domain level. To do this, you give it access to your SSL certificates in the GCP Certificate Manager so it can decrypt and re-encrypt your HTTPS traffic. This deep access lets it control egress at the level of the domain, even in virtual hosting scenarios. Because it sees the full HTTP request, it even lets you control egress down to the URL.
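The difference between domain-level and URL-level control is easiest to see as a policy check. The Python sketch below, with a hypothetical `url_allowed` function and a made-up policy format, allows a request only if its host is listed and its path falls under one of that host’s allowed prefixes. Secure Web Gateway has its own rule language; this is not it, just an illustration of a decision that requires seeing the decrypted request path.

```python
from typing import Dict, Iterable
from urllib.parse import urlsplit


def url_allowed(url: str, policy: Dict[str, Iterable[str]]) -> bool:
    """Allow a request only if its host is listed in the policy and its
    path is at or below one of the prefixes configured for that host."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    prefixes = policy.get(host)
    if prefixes is None:
        return False  # the domain itself is not allowed
    path = parts.path or "/"
    # Match whole path segments, so "/v1/payments" does not allow "/v1/paymentsX".
    return any(
        path == prefix or path.startswith(prefix.rstrip("/") + "/")
        for prefix in prefixes
    )
```

A domain-only control corresponds to a policy entry of `["/"]`, which allows every path on that host.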

Cilium

The above solutions work at the level of the VPC. But if your application runs on Kubernetes, you might want egress control that works with Kubernetes concepts. For this, you can use CiliumNetworkPolicy, a Custom Resource Definition (CRD): Cilium maps the allowed domain names to IP addresses by watching the pods’ DNS lookups, and then blocks or allows traffic in its eBPF-based network layer. (See two posts at the DoiT blog.) The CRD is Kubernetes-aware, so you can distinguish pods by namespace: pods in some namespaces are allowed to reach the external API, while others are not. Another CRD, CiliumClusterwideNetworkPolicy, does the same, but its configuration applies to the entire cluster rather than to a single namespace.
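As an example of what such a policy contains, the Python sketch below builds a CiliumNetworkPolicy manifest as a dictionary and prints it as JSON, which `kubectl apply -f -` accepts just like YAML. It follows the pattern from Cilium’s DNS-based policy documentation: one egress rule lets pods query kube-dns through Cilium’s DNS proxy (so Cilium can learn the IPs behind each name), and a `toFQDNs` rule allows HTTPS to `api.example.com`. The policy name `allow-api-example` and namespace `payments` are hypothetical, and label values for your DNS pods may differ.

```python
import json


def cilium_fqdn_policy(name: str, namespace: str, fqdn: str, port: int = 443) -> dict:
    """Build a CiliumNetworkPolicy letting every pod in `namespace` reach
    `fqdn` on TCP `port`, plus the DNS access Cilium needs to learn its IPs."""
    return {
        "apiVersion": "cilium.io/v2",
        "kind": "CiliumNetworkPolicy",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "endpointSelector": {},  # empty selector: all pods in this namespace
            "egress": [
                {
                    # Allow DNS to kube-dns and send it through Cilium's DNS proxy.
                    "toEndpoints": [{"matchLabels": {
                        "k8s:io.kubernetes.pod.namespace": "kube-system",
                        "k8s:k8s-app": "kube-dns",
                    }}],
                    "toPorts": [{
                        "ports": [{"port": "53", "protocol": "ANY"}],
                        "rules": {"dns": [{"matchPattern": "*"}]},
                    }],
                },
                {
                    # Allow traffic only to the IPs that fqdn was seen resolving to.
                    "toFQDNs": [{"matchName": fqdn}],
                    "toPorts": [{"ports": [{"port": str(port), "protocol": "TCP"}]}],
                },
            ],
        },
    }


if __name__ == "__main__":
    policy = cilium_fqdn_policy("allow-api-example", "payments", "api.example.com")
    print(json.dumps(policy, indent=2))  # pipe into: kubectl apply -f -
```

Because the policy is namespaced, pods in other namespaces are unaffected by it; switching `kind` to CiliumClusterwideNetworkPolicy (and dropping the namespace) is the cluster-wide variant.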

This solution adds some complexity to your cluster, because it requires the additional Cilium network layer. Eventually, as the Cilium docs state, “all of the functionality will be merged into the standard resource format and this CRD will no longer be required.” There is no standard specification available yet, but the Kubernetes Network Special Interest Group (SIG Network) is working on one.

Istio

The most featureful solution is the Istio service mesh. Istio fully controls traffic, allowing you to block all egress except to destinations defined in a ServiceEntry. When you specify resolution: DNS, you ask Istio not to rely on the IP address that the client (a pod) is connecting to, but rather to periodically resolve the domain name itself using DNS. You can export the ServiceEntry to just the namespaces you choose. Istio gives you the greatest control, but it adds more complexity than Cilium, since it brings in the full power and functionality of a service mesh layer.
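Here is a sketch of such a ServiceEntry, again built in Python and printed as JSON for `kubectl apply -f -`. It assumes the mesh is configured with `meshConfig.outboundTrafficPolicy.mode: REGISTRY_ONLY`, which is what makes Istio block egress to hosts that no ServiceEntry defines. `exportTo: ["."]` limits visibility to the ServiceEntry’s own namespace; listing other namespace names widens it. The names `api-example` and `payments` are hypothetical, and newer Istio releases also serve the `networking.istio.io/v1` API version.

```python
import json
from typing import List


def istio_service_entry(name: str, namespace: str, host: str, export_to: List[str]) -> dict:
    """Build an Istio ServiceEntry that registers an external HTTPS host,
    resolved by Istio via DNS and visible only to the given namespaces."""
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "ServiceEntry",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "hosts": [host],
            "location": "MESH_EXTERNAL",  # the service lives outside the mesh
            "ports": [{"number": 443, "name": "https", "protocol": "TLS"}],
            "resolution": "DNS",  # Istio resolves the host name itself
            "exportTo": export_to,  # "." = only this ServiceEntry's namespace
        },
    }


if __name__ == "__main__":
    entry = istio_service_entry("api-example", "payments", "api.example.com", ["."])
    print(json.dumps(entry, indent=2))  # pipe into: kubectl apply -f -
```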

What to do?

You have a range of options: the battle-tested Squid (or another forward web proxy); the managed services, Google Firewall FQDN Objects and AWS Network Firewall; the HTTP-proxying Secure Web Gateway; and the Kubernetes-aware Cilium and Istio.

If you want a stable, managed solution, my recommendation is Google Firewall FQDN Objects (once it matures) or AWS Network Firewall (if the pricing fits your budget). If you need control at the level of Kubernetes namespaces, the simplest solution is a Cilium CRD; when the Kubernetes-native standard comes out, go with that.