When embarking on any transformation, it helps to have a plan. One of the biggest challenges for any enterprise with large initiatives is deciding where to begin, whom to involve, and whom to work with to make it successful. Whatever your organizational structure (divisional, matrix, flat, or something else), setting aggressive goals and kicking off proofs of concept (PoCs) early and often will move you in the right direction.

This article is an opinionated take on how to structure your cloud infrastructure. It’s based on recent experience leading enterprise cloud transformations for FinTech (regulated) organizations with SaaS products and re-architecting legacy applications to run on Google’s managed Kubernetes service, GKE. The goal is to highlight the areas to plan and design for: network, budget, DevOps, security, and compliance. In future articles, I will dive deeper into many of these topics with specific examples and/or demos.

TL;DR

- Make Sure You Have Organizational Alignment

- Choose Your Own Adventure

- Set Up Identity With User Groups / Roles

- Centralize Your Networks With Shared VPCs

- Plan Out All Of Your Resources

- Tag Your Resources For Better Reporting

- Set Up Budget Alerts

- Organize Your Source Code Repositories For IaC

- Set Up Security / Monitoring Alerts

- Use a Bastion (Jump) Host

- Protect Your Data

- Use Cloud Security Command Center

- Document And Test Your Disaster Recovery & Business Continuity Plans

- Partner With Experts Who’ve Done It Before

Make Sure You Have Organizational Alignment

Although this article focuses on Google Cloud Platform (GCP) features, I’d be remiss if I didn’t give a shout-out to AWS’ CEO, Andy Jassy, for elegantly summarizing four keys to success in cloud transformation and, dare I say, in any initiative:

- Senior leadership team conviction and alignment

- Top-down aggressive goals

- Train your builders

- Don’t let [analysis] paralysis stop you before you start

My personal mantra when trying new things is “first make it work, then make it right, then make it fast”.

Choose Your Own Adventure

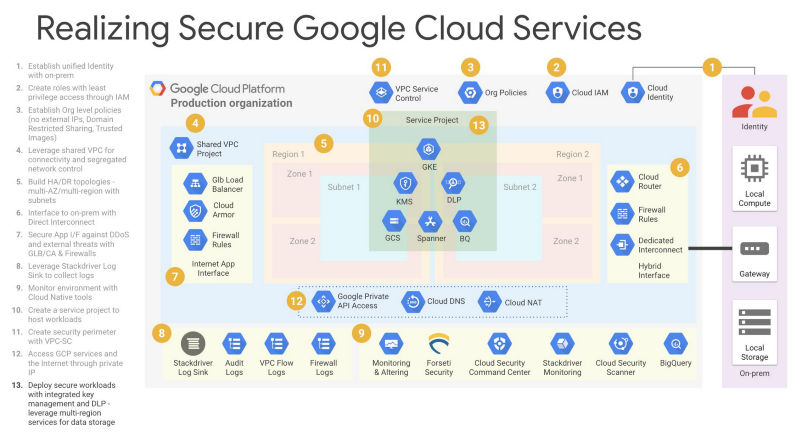

Google has great resources online about enterprise best practices, including this illustration of the recommended steps to secure Google Cloud services. Each organization’s needs vary, so this roadmap is a great starting point for planning your specific infrastructure or simply learning what’s available.

Set Up Identity With User Groups / Roles

You should begin with a least-privilege policy and immediately disable project-creation ability at the organization level. Only DevOps, Billing, and OrgAdmins should have this ability, and ideally just a service account in DevOps for your infrastructure automation (e.g. Terraform). Wherever possible, grant roles to groups rather than adding individuals to your IAM policies. Suggested groups:

- Organization Administrators ([email protected])

- Network Administrators ([email protected])

- Security Administrators ([email protected])

- Billing Administrators ([email protected])

- DevOps ([email protected])

- Development ([email protected])

- DataScience ([email protected]) [optional]

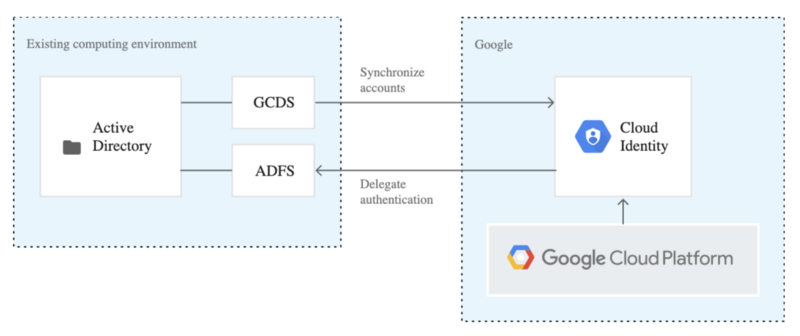

Once you have created these groups, either in Cloud Identity (optionally connected to your existing AD or IdP) or in your G Suite organization, add at least one person to each group. Then grant each group only the roles it needs, at either the Organization or Folder level.
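To make the group-to-role mapping concrete, here is a minimal Python sketch. The group addresses and the role chosen for each group are assumptions for illustration only (the addresses use example.com). The output follows the general shape of an IAM policy's bindings, and a small helper flags any individual `user:` members that slip in.

```python
import json

# Hypothetical group addresses and role assignments; substitute your own.
GROUP_ROLES = {
    "gcp-org-admins@example.com": ["roles/resourcemanager.organizationAdmin"],
    "gcp-network-admins@example.com": ["roles/compute.networkAdmin"],
    "gcp-security-admins@example.com": ["roles/iam.securityAdmin"],
    "gcp-billing-admins@example.com": ["roles/billing.admin"],
    "gcp-devops@example.com": ["roles/resourcemanager.projectCreator"],
}


def build_bindings(group_roles):
    """Invert {group: [roles]} into policy-style bindings, one entry per role."""
    members_by_role = {}
    for group, roles in group_roles.items():
        for role in roles:
            members_by_role.setdefault(role, []).append(f"group:{group}")
    return [
        {"role": role, "members": sorted(members)}
        for role, members in sorted(members_by_role.items())
    ]


def individual_members(bindings):
    """Return members that are individuals (user:...) rather than groups."""
    return [
        member
        for binding in bindings
        for member in binding["members"]
        if member.startswith("user:")
    ]


bindings = build_bindings(GROUP_ROLES)
print(json.dumps({"bindings": bindings}, indent=2))
print("individuals found:", individual_members(bindings))
```

Keeping the mapping as data makes it easy to review in source control and to fail a pipeline when someone adds a person instead of a group.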

If you adhere to GitOps, development teams can deploy any workloads* to their respective Kubernetes cluster namespaces via CI/CD pipelines from source control. This approach helps you manage access and quotas for easier cloud cost control and budgeting.

*only approved binaries from a trusted private image repository

Centralize Your Networks With Shared VPCs

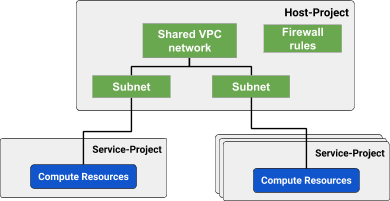

In highly regulated industries, or when maintaining compliance with frameworks like SOC 2, your controls may demand strict separation of responsibilities. One common enterprise practice is to segregate management of the network from DevOps and application development. GCP makes this easy with its Shared VPC and project hierarchy features, namely host and service projects.

Design and plan your network to avoid collisions and delete all default networks within projects — you’ll thank me later!

As noted, this is an opinionated approach and yours may vary. My recommended best practice is to attach your service projects to a host project and centralize your network configuration in one place, granting access to the host projects only to your network administrators group.

When allocating IPs for your subnets, depending on your scale and growth plans, a /16 CIDR range per VPC should be plenty (65,536 addresses). Kubernetes, however, demands a large number of IPs, because pods and services are assigned addresses dynamically as ephemeral containers come and go. I would suggest reserving an extra unused range for secondary IPs, like below:

- subnet within VPC /16 range: k8s-nodes-prod (10.10.0.0/22)

secondary: k8s-services-prod (10.80.64.0/22)

secondary: k8s-pods-prod (10.80.0.0/18)

subnet: bastion-prod (10.10.64.0/29)

- subnet within VPC /16 range: k8s-nodes-stage (10.11.0.0/22)

secondary: k8s-services-stage (10.81.64.0/22)

secondary: k8s-pods-stage (10.81.0.0/18)

subnet: bastion-stage (10.11.64.0/29)

- subnet within VPC /16 range: k8s-nodes-demo (10.12.0.0/22)

secondary: k8s-services-demo (10.82.64.0/22)

secondary: k8s-pods-demo (10.82.0.0/18)

subnet: bastion-demo (10.12.64.0/29)

- subnet within VPC /16 range: k8s-nodes-qa (10.13.0.0/22)

secondary: k8s-services-qa (10.83.64.0/22)

secondary: k8s-pods-qa (10.83.0.0/18)

subnet: bastion-qa (10.13.64.0/29)

- subnet within VPC /16 range: k8s-nodes-dev (10.14.0.0/22)

secondary: k8s-services-dev (10.84.64.0/22)

secondary: k8s-pods-dev (10.84.0.0/18)

subnet: bastion-dev (10.14.64.0/29)

This allows ample space within your VPC for additional subnets for database servers or other resources.
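A plan like this is easy to sanity-check before anything is provisioned. The sketch below uses Python's standard `ipaddress` module with the production ranges listed above. The 10.10.0.0/16 planning block is an assumption inferred from the production addressing. It reports the size of each range, whether it sits inside the /16, and whether any two ranges collide.

```python
import ipaddress
from itertools import combinations

# Assumed /16 planning block for production (inferred from the 10.10.x.x addressing).
VPC_BLOCK = ipaddress.ip_network("10.10.0.0/16")

# Production ranges copied from the plan above.
RANGES = {
    "k8s-nodes-prod": ipaddress.ip_network("10.10.0.0/22"),
    "k8s-services-prod": ipaddress.ip_network("10.80.64.0/22"),
    "k8s-pods-prod": ipaddress.ip_network("10.80.0.0/18"),
    "bastion-prod": ipaddress.ip_network("10.10.64.0/29"),
}


def find_overlaps(ranges):
    """Return every pair of range names whose address blocks overlap."""
    return [
        (name_a, name_b)
        for (name_a, net_a), (name_b, net_b) in combinations(ranges.items(), 2)
        if net_a.overlaps(net_b)
    ]


print(f"{VPC_BLOCK} holds {VPC_BLOCK.num_addresses} addresses")
for name, net in RANGES.items():
    location = "inside" if net.subnet_of(VPC_BLOCK) else "outside"
    print(f"{name}: {net} ({net.num_addresses} addresses, {location} the /16)")
print("overlaps:", find_overlaps(RANGES))
```

The same check extends to the other environments by adding their ranges to the dictionary, which catches collisions across environments as well.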

Plan Out All Of Your Resources

Google Cloud Platform organizes resources by organization, folder, and project. This makes it easier to maintain users and groups without the multi-account madness found on other platforms. Many enterprises that adopted cloud early are stuck building custom solutions to manage that “mess” of accounts and permissions, which is an InfoSec and compliance nightmare. GCP got it right.

├── DevOps
│   └── project-devops
│       ├── bucket-terraform-state
│       ├── cluster-devops
│       │   ├── namespace-cicd
│       │   └── namespace-vault
│       ├── sink-application-logs
│       └── sink-audit-logs
├── RnD
│   ├── Non-Production
│   │   └── project-shared-network-nonprod
│   │       ├── sink-application-logs
│   │       ├── sink-audit-logs
│   │       ├── vpc-demo-10-12-0-0
│   │       │   └── project-demo
│   │       │       ├── cluster-demo
│   │       │       │   ├── namespace-team1
│   │       │       │   ├── namespace-team2
│   │       │       │   └── namespace-vault
│   │       │       ├── sink-application-logs
│   │       │       └── sink-audit-logs
│   │       ├── vpc-dev-10-14-0-0
│   │       │   └── project-development
│   │       │       ├── cluster-development
│   │       │       │   ├── namespace-team1
│   │       │       │   ├── namespace-team2
│   │       │       │   └── namespace-vault
│   │       │       ├── sink-application-logs
│   │       │       └── sink-audit-logs
│   │       └── vpc-qa-10-13-0-0
│   │           └── project-development
│   │               └── cluster-qa
│   │                   ├── namespace-team1
│   │                   ├── namespace-team2
│   │                   └── namespace-vault
│   ├── Production
│   │   └── project-shared-network-prod
│   │       ├── sink-application-logs
│   │       ├── sink-audit-logs
│   │       ├── vpc-prod-10-10-0-0
│   │       │   └── project-production
│   │       │       ├── cluster-production
│   │       │       │   ├── namespace-team1
│   │       │       │   ├── namespace-team2
│   │       │       │   └── namespace-vault
│   │       │       ├── sink-application-logs
│   │       │       └── sink-audit-logs
│   │       └── vpc-stage-10-11-0-0
│   │           └── project-stage
│   │               ├── cluster-stage
│   │               │   ├── namespace-team1
│   │               │   ├── namespace-team2
│   │               │   └── namespace-vault
│   │               ├── sink-application-logs
│   │               └── sink-audit-logs
│   └── project-monitoring
│       ├── bucket-development-logs
│       ├── bucket-production-logs
│       ├── workspace-development
│       └── workspace-production
└── Security
    └── project-security
        └── bucket-audit-logs
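One way to keep a plan like the one above reviewable is to hold it as data and derive listings from it. The sketch below is a simplified assumption: it models only the folders and the projects that sit directly under them, omitting the service projects, clusters, and sinks. It then walks the structure to print each project's folder path.

```python
# Simplified, hypothetical model of the hierarchy: folders map to nested
# dictionaries, and any key starting with "project-" is treated as a project.
HIERARCHY = {
    "DevOps": {"project-devops": {}},
    "RnD": {
        "Non-Production": {"project-shared-network-nonprod": {}},
        "Production": {"project-shared-network-prod": {}},
        "project-monitoring": {},
    },
    "Security": {"project-security": {}},
}


def project_paths(tree, prefix=()):
    """Yield a folder path for each project, without descending into projects."""
    for name, children in tree.items():
        path = prefix + (name,)
        if name.startswith("project-"):
            yield "/".join(path)
        else:
            yield from project_paths(children, path)


for path in project_paths(HIERARCHY):
    print(path)
```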

Tag Your Resources For Better Reporting

One of the most common challenges and sources of confusion with public clouds is billing, reporting, and cost control. One way to manage your costs is by designing your infrastructure with team-based namespaces, as I’ve highlighted above. This also speeds up developer onboarding and productivity, because developers do not face a steep learning curve and you are not limited to a smaller talent pool. Another best practice is to tag/label resources so you can establish alerts, budgets, and reports later.

A colleague of mine wrote an article, “Auto Tagging Google Cloud Resources”, about using our open-source tool, IRIS. There are many other great articles on the subject, so be sure to plan your tags in advance to minimize headaches later.

Suggested tags per resource:

- Owner: who is currently responsible (may have shifted teams)

- Creator: who created it originally (you might have questions later)

- Project: e.g. my-company-production, for better grouping

- Working Hours: save up to 60% by auto-scheduling dev resources with Zorya
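A tagging plan is only useful if it is enforced, so here is a minimal sketch of a label check. The required key names and the sample values are hypothetical. The patterns are a conservative ASCII-only approximation of GCP's label rules (lowercase letters, digits, underscores, and hyphens, up to 63 characters, with keys starting with a letter), so treat them as an assumption rather than the authoritative definition.

```python
import re

# Hypothetical key names for the suggested tags.
REQUIRED_KEYS = {"owner", "creator", "project", "working-hours"}

# Conservative, ASCII-only approximation of the label format rules.
KEY_PATTERN = re.compile(r"[a-z][a-z0-9_-]{0,62}")
VALUE_PATTERN = re.compile(r"[a-z0-9_-]{0,63}")


def label_problems(labels):
    """Return a list of human-readable problems with a resource's labels."""
    problems = [
        f"missing label: {key}" for key in sorted(REQUIRED_KEYS - set(labels))
    ]
    for key, value in labels.items():
        if not KEY_PATTERN.fullmatch(key):
            problems.append(f"invalid key: {key!r}")
        if not VALUE_PATTERN.fullmatch(value):
            problems.append(f"invalid value for {key}: {value!r}")
    return problems


# Sample values are made up; "weekdays-9-17" is not a documented Zorya format.
good = {
    "owner": "team-payments",
    "creator": "jdoe",
    "project": "my-company-production",
    "working-hours": "weekdays-9-17",
}
print(label_problems(good))
print(label_problems({"owner": "Jane@Example.com"}))
```

Note that an email address fails the value check under these patterns, which is why the sample uses a team or user name for the owner.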

Set Up Budget Alerts

Plenty of CFOs are afraid of the cloud because of the lack of visibility and the risk of runaway spend from a misconfiguration. With budget alerts, you can track daily spend and react quickly to keep your cloud costs in line with company income. It is a good idea to share cost information with your teams and to make team leaders responsible for monitoring and meeting their budgets.
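The arithmetic behind a budget alert is simple enough to sketch. The example below is an illustration with made-up figures and thresholds, not the behavior of any particular billing product. It reports which percentage thresholds current spend has reached, then repeats the check against a naive month-end forecast that extrapolates the average daily spend so far.

```python
import calendar
from datetime import date


def crossed_thresholds(spend, budget, thresholds=(50, 90, 100)):
    """Return the percentage thresholds that the given spend has reached."""
    return [pct for pct in thresholds if spend * 100 >= pct * budget]


def forecast_month_end(spend_to_date, today):
    """Project month-end spend by extrapolating the average daily spend."""
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    return spend_to_date / today.day * days_in_month


# Hypothetical figures: a 10,000 monthly budget with 6,000 spent by April 10.
budget = 10_000
spend = 6_000
today = date(2024, 4, 10)

forecast = forecast_month_end(spend, today)
print("thresholds reached by actual spend:", crossed_thresholds(spend, budget))
print("forecast month-end spend:", forecast)
print("thresholds reached by forecast:", crossed_thresholds(forecast, budget))
```

Alerting on the forecast as well as the actual spend gives a team time to react before the budget is exhausted.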

Organize Your Source Code Repositories For IaC

Once you have a plan for your resource hierarchy, you face a chicken-and-egg scenario. Do you provision everything with Terraform and, if so, how does Terraform gain the permissions to build everything out? My suggestion is that your Org Admin creates the DevOps folder and project, and your DevOps team then creates a Terraform service account with the permissions it needs to create and delete projects, and so on. Start with the bare minimum and, as you hit permission errors, add only the roles required.

One of my colleagues wrote a great article on “Refactoring Terraform The Right Way” that aligns with the suggestions from the expert team at Gruntwork.io, creators of Terragrunt. In short, separate out your resources, services, and live environments into three separate repositories and manage group permissions with your source control accordingly.

Set Up Security / Monitoring Alerts

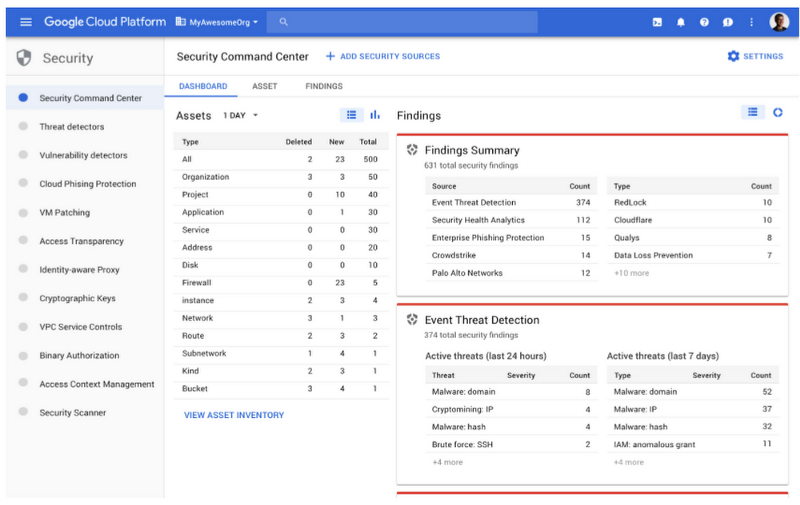

Monitoring and alerting should be at the forefront of your planning process, and thankfully Google Cloud Platform has many built-in features that make this easy. The CIS benchmark scanning in the Cloud Security Command Center (CSCC) will detect missing monitoring and provide step-by-step instructions on how to configure each project properly. More information about CSCC appears later in this article.

For convenience, here is a Gist of the recommended monitoring configurations.

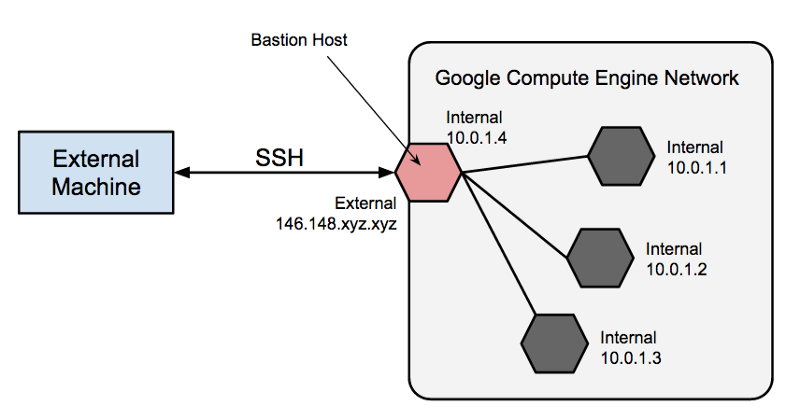

Use A Bastion (Jump) Host For Access

I omitted the VMs for bastion hosts in the above diagram for simplicity, but using them is the best practice for secure access. I suggest you add a subnet range per project for a bastion host VM, eliminate all external IPs, and configure private clusters. Create a managed instance group of size one so that GCP brings up a new instance if the current one fails. You can connect to the bastion via VPN or, for a more modern and secure approach, through Cloud Identity-Aware Proxy (IAP). You can further lock down your bastion by restricting user SSH keys.

There are a variety of articles on Kubernetes Best Practices but this article from Google’s engineers on “Completely Private GKE Clusters With No Internet Connectivity” is a good reference and reaffirms the basic structure proposed above.

Protect Your Data

You need to plan and design your infrastructure to meet the policies defined by your organization’s security team, and often this includes varying degrees of encryption. GCP resources are encrypted at rest by default, but you should identify your encryption key needs (Google-managed, self-managed, BYOK) for data both at rest and in transit.

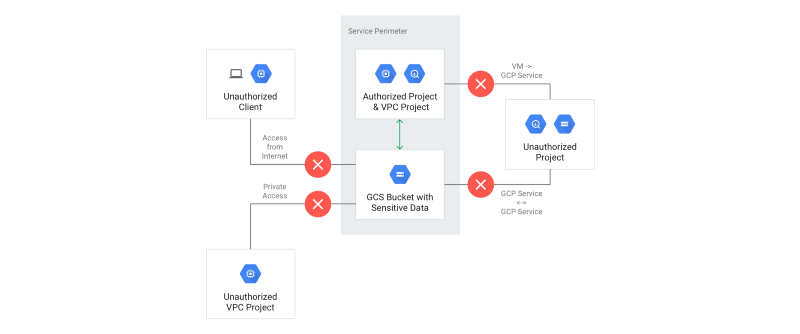

You may also want to isolate access to data within your organization, down to individuals or applications. You can accomplish this with VPC Service Controls as illustrated below.

If you are shipping logs or data for monitoring as illustrated in the resource hierarchy example, you may want to configure your log sinks to leverage the Cloud Data Loss Prevention (Cloud DLP) APIs to strip out nearly 100 types of PII data before storing.
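To illustrate the idea of scrubbing log lines before they are stored, here is a local stand-in written with regular expressions. It is not the Cloud DLP API and covers only two patterns, so treat it purely as a sketch of the redact-before-store step. The placeholder names are assumptions modeled on DLP-style infoType naming.

```python
import re

# Two illustrative detectors; a managed service covers far more types and
# handles edge cases that simple regular expressions miss.
PATTERNS = {
    "EMAIL_ADDRESS": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SOCIAL_SECURITY_NUMBER": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(line):
    """Replace each detected value with a placeholder naming its type."""
    for info_type, pattern in PATTERNS.items():
        line = pattern.sub(f"[{info_type}]", line)
    return line


sample = "login failed for jane.doe@example.com ssn 123-45-6789"
print(redact(sample))
```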

Use Cloud Security Command Center

A hidden gem in GCP is the Cloud Security Command Center. This product provides a single pane of glass for asset management, threat and anomaly detection, WAF, and vulnerability scanning. In my opinion, one of the coolest features is the scanning: you can configure which projects to scan, but you cannot control when it scans (once or twice per day). It highlights the CIS and NIST benchmark violations for every project and resource, with step-by-step remediation.

Note: new pricing will split CSCC into two tiers (free, premium)

- A great resource/checklist is the CIS Benchmarks for Google Cloud. There are specific ones for Kubernetes and other systems too, and all are free

There are other great third-party tools, like Forseti Security and Palo Alto Networks’ Prisma Cloud (formerly the Twistlock and RedLock tools), for scanning and helping optimize your configurations and code deployments.

Document And Test Your Disaster Recovery & Business Continuity Plans

It goes without saying that you must have an automated backup and recovery plan for all of your data, whether it lives in buckets or databases. You should practice the backup and restore process at least once per year, and preferably more often.

For business continuity and peace of mind, it’s highly recommended to define your infrastructure as code (IaC) using Terraform. If your team is disciplined in maintaining the state of your infrastructure as code, you can easily produce audit reports for compliance and recover from catastrophic failure.

Partner With Experts Who Have Done It Before

I’ve only scratched the surface of key considerations in enterprise cloud transformations, and it may seem overwhelming, but the best thing to do is just start. I may be biased but the second best thing you can do to increase your chances of success is to team up with professionals who have done it plenty of times before.

DoiT International has helped thousands of companies in their cloud journeys with architecture reviews, expert support, and custom cloud budgeting and reporting tools, all at no additional cost to our customers (we are a cloud reseller partner). Our goal is to skill up and empower your team to successfully manage your cloud infrastructure, but when needed, we’re always here for you.
