Les grands modèles de langage (LLM) comme Claude, Cohere et Llama2 connaissent une popularité fulgurante. Mais que sont-ils exactement et comment s'appuyer sur eux pour bâtir des applications d'IA à fort impact ? Cet article propose une plongée approfondie dans les LLM et leur utilisation efficace sur AWS.

Les LLM sont un type de réseau de neurones entraîné sur d'immenses volumes de données textuelles afin de comprendre et de générer le langage humain. Ils peuvent compter des milliards, voire des billions de paramètres, ce qui leur permet de repérer des motifs très complexes dans le langage.

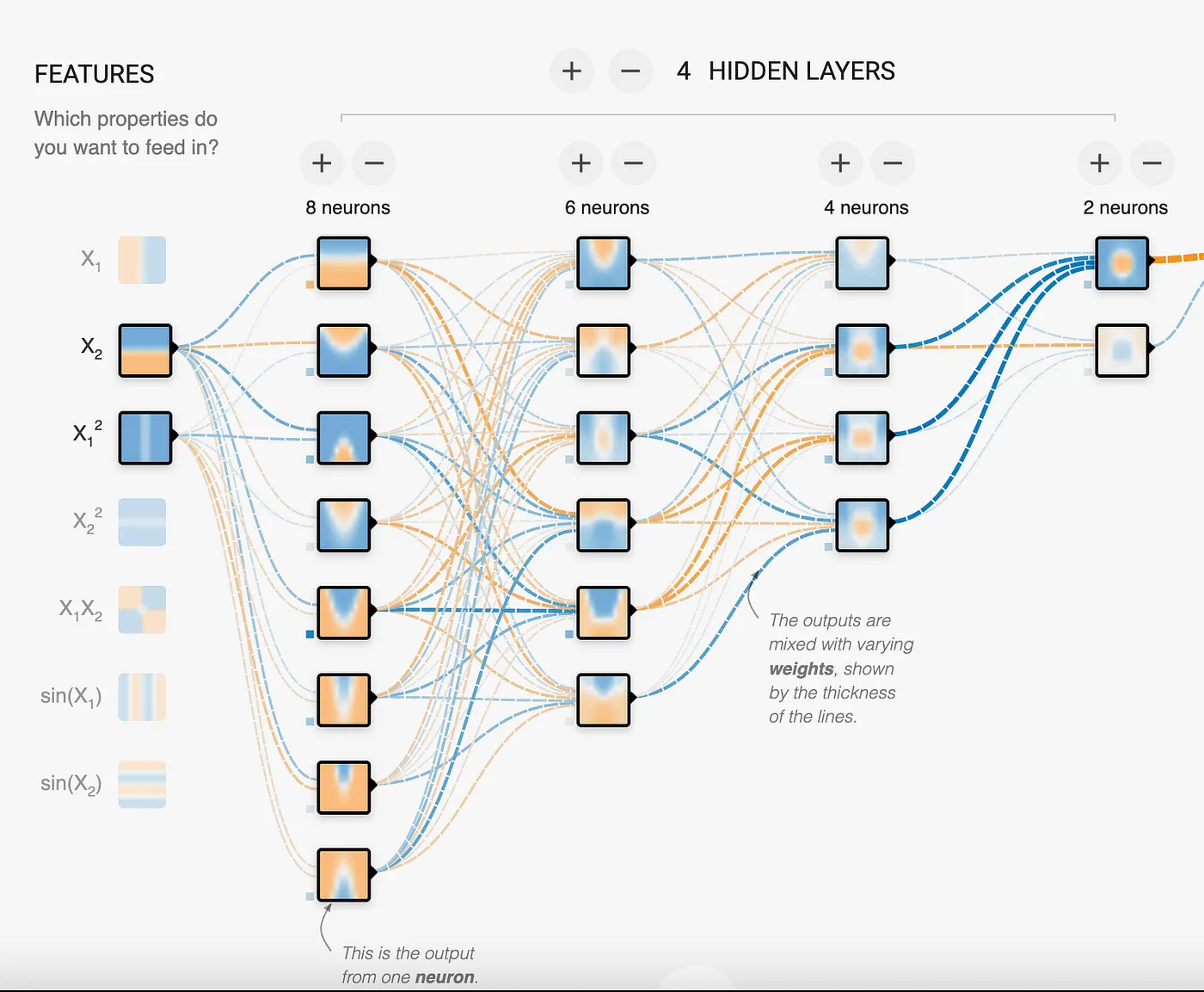

Les réseaux de neurones se composent de couches de nœuds interconnectés. Chaque nœud reçoit des signaux d'entrée provenant de plusieurs nœuds de la couche précédente, les traite via une fonction d'activation, puis transmet les signaux de sortie aux nœuds de la couche suivante. Les connexions entre nœuds disposent de poids ajustables qui déterminent l'intensité du signal transmis. Ces poids sont appelés paramètres.

Sur cette image, on observe 18 neurones dans le réseau, ce qui en fait un modèle à 18 paramètres. On le voit, le modèle cherche à identifier des motifs pour résoudre des problèmes. Dans le cas des LLM, on parle de milliards à des billions de paramètres, ce qui permet au modèle de repérer et de cartographier des motifs aussi complexes que le langage humain.

En entraînant un réseau de neurones sur de vastes corpus textuels, le modèle apprend à associer des motifs de mots et de phrases. Comment les LLM poussent-ils cette logique à l'extrême ? En s'appuyant sur d'immenses réseaux de neurones comportant des milliards ou des billions de paramètres répartis sur des dizaines de couches. Tous ces neurones et leurs connexions permettent au réseau de bâtir des modèles extrêmement sophistiqués de la structure et des motifs du langage.

Une caractéristique clé des LLM est la mise en œuvre d'une technique appelée attention, qui leur permet de se concentrer sur les parties les plus pertinentes de l'entrée lors de la génération de texte. L'article Attention is all you need a posé l'architecture déterminante qui empêche les LLM d'être submergés ou distraits par cette masse de données. Au final, on obtient un modèle qui a absorbé tellement de données textuelles qu'il sait, de manière convaincante, poursuivre des phrases, répondre à des questions, résumer des paragraphes, et bien plus encore. Il met en correspondance les motifs de ce que vous lui fournissez avec ceux qu'il a appris pendant l'entraînement. Aussi impressionnant que cela soit, il faut souligner que les LLM ne possèdent ni véritable compréhension ni capacité de raisonnement. Ils fonctionnent sur la base de probabilités, et non de connaissances. Mais avec un fine-tuning et un prompting adéquats, ils peuvent simuler de manière convaincante la compréhension dans des domaines et sur des tâches ciblés.

Tous les LLM ne se valent pas

Il existe principalement 3 types de modèles LLM, répartis comme suit :

- Autorégressifs : ils visent à prédire le premier ou le dernier mot d'une phrase. Le contexte provient des mots précédents. Ces modèles sont les mieux adaptés à la génération de texte, même s'ils peuvent servir à la classification et au résumé. Les modèles autorégressifs concentrent aujourd'hui beaucoup d'attention pour leurs capacités de génération de contenu.

- Auto-encodeurs : entraînés sur des textes comportant des mots manquants, ce qui permet au modèle d'apprendre le contexte pour prédire le ou les mots manquants. Ces modèles saisissent le contexte bien mieux que les autorégressifs, mais ne génèrent pas de texte de façon fiable. Moins gourmands en calcul, ils excellent dans les tâches de résumé et de classification.

- Seq2Seq (Text to Text) : ces modèles cherchent à combiner les approches autorégressive et auto-encodeur, ce qui en fait une excellente option pour le résumé et la traduction de texte.

Les modèles LLM doivent convertir le texte en représentation numérique. Cette opération s'appelle l'embedding. Bien qu'un modèle d'embedding ne soit pas un LLM à part entière, il s'agit bel et bien d'un modèle de réseau de neurones. Il représente non seulement le mot, mais aussi des métadonnées additionnelles à son sujet, afin de disposer de davantage d'informations sur le sens d'un mot, d'une phrase ou d'un paragraphe. Ces métadonnées prennent la forme d'une liste de nombres, ou vecteurs. Selon le modèle d'embedding, cette liste peut comporter 750, 1024 dimensions, voire davantage. Les embeddings servent à comparer des enregistrements entre eux et à calculer la distance entre les nombres, distance qui traduit leur similarité ou leur degré de parenté. Ils constituent un élément essentiel de l'architecture RAG.

Les paramètres d'un LLM

Tout modèle autorégressif dispose de paramètres modifiables permettant d'ajuster sa sortie. Bien que ces paramètres varient d'un modèle à l'autre, les plus courants sont les suivants :

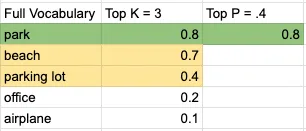

- Top K : sélectionne les mots de tête dans un vocabulaire trié par probabilité. Autrement dit, il retient les K mots les plus probables qui seront pris en compte pour la prédiction. Ce paramètre est un entier ; des valeurs faibles ne retiennent que les mots à forte probabilité.

- Top P : le modèle sélectionne des mots et additionne leurs probabilités à partir du pool généré par le paramètre Top K, jusqu'à atteindre la valeur Top P. Ce paramètre est un nombre à virgule flottante ; des valeurs faibles produisent des résultats plus prévisibles.

- Temperature : ce paramètre régit le caractère aléatoire ou prévisible de la sortie du modèle. Dans la plupart des modèles, il se situe entre 0 et 1, sans qu'il existe vraiment de borne inférieure ou supérieure imposée. Quoi qu'il en soit, plus la valeur est basse, plus la sortie est prévisible ; plus elle est élevée, plus la sortie est créative.

- Output length : ce paramètre peut porter différents noms selon les modèles, mais son rôle est sans ambiguïté : limiter le nombre de tokens générés.

Prenons un exemple simple. Pour le prompt I will go on a picnic at the ___, avec un petit ensemble de vocabulaire de 5 mots, un Top K de 3 et un Top P de 0,4, voici une représentation visuelle de la décision prise par le modèle :

Le mot retenu serait park. Et la phrase deviendrait :

I will go on a picnic at the park.

Des valeurs basses pour Temperature, Top K et Top P produiront un contenu plus déterministe et prévisible. À l'inverse, des valeurs élevées génèrent un contenu mobilisant un éventail de mots plus large. Bien que ce soient les paramètres les plus répandus, certains modèles peuvent en proposer d'autres pour ajuster la sortie.

Comme on l'a vu, l'élément le plus important lors de l'interaction avec le modèle est l'entrée que nous fournissons pour déclencher le bon motif et, in fine, la réponse souhaitée. Cette entrée, c'est le prompt, et nous allons voir comment l'optimiser pour obtenir le résultat recherché.

Le prompt fourni à un LLM est déterminant pour obtenir des sorties utiles. Voici les principales stratégies de conception de prompts :

Utiliser des balises pour structurer les parties du prompt

Chaque modèle utilise les balises à sa manière. Reportez-vous à la documentation ou aux exemples fournis pour savoir comment les employer avec le modèle.

Voici un exemple de balises avec Claude. Notez les mots-clés Human et Assistant, qui distinguent l'entrée de l'utilisateur du point où le modèle doit commencer à générer du contenu. L'autre balise présente ici est , qui n'est pas codée en dur : vous pouvez utiliser n'importe quelle balise référencée dans le prompt. Les caractères spéciaux <> signalent au modèle qu'il s'agit d'un texte particulier, à traiter différemment du reste du prompt.

Human: You are a customer service agent tasked with classifying emails by type. Please output your answer and then justify your classification. How would you categorize this email?<email>Can I use my Mixmaster 4000 to mix paint, or is it only meant for mixing food?</email>

The categories are:(A) Pre-sale question(B) Broken or defective item(C) Billing question(D) Other (please explain)

Assistant:Fournir des exemples pour illustrer le format de réponse souhaité

Vous trouverez ci-dessous l'utilisation de la balise example pour préciser le format de réponse attendu. C'est utile lorsque vous intégrez la réponse à d'autres systèmes nécessitant une syntaxe spécifique, ou lorsque vous souhaitez réduire le nombre de tokens en sortie. Selon le modèle, vous pouvez recourir à un seul exemple (one-shot prompt) ou à plusieurs (few-shots prompt) pour atteindre le résultat voulu.

Exemple de prompt one-shot :

Human: You will be acting as an AI career coach named Joe created by the company AdAstra Careers. Your goal is to give career advice to users. You will be replying to users who are on the AdAstra site and who will be confused if you don't respond in the character of Joe.

Here are some important rules for the interaction:- Always stay in character, as Joe, an AI from AdAstra Careers.- If you are unsure how to respond, say "Sorry, I didn't understand that. Could you rephrase your question?"

Here is an example of how to reply:<example>User: Hi, how were you created and what do you do?Joe: Hello! My name is Joe and I was created by AdAstra Careers to give career advice. What can I help you with today?</example>Exemple de prompt few-shot :

Human: You will be acting as an AI career coach named Joe created by the company AdAstra Careers. Your goal is to give career advice to users. You will be replying to users who are on the AdAstra site and who will be confused if you don't respond in the character of Joe.

Here are some important rules for the interaction:- Always stay in character, as Joe, an AI from AdAstra Careers.- If you are unsure how to respond, say "Sorry, I didn't understand that. Could you rephrase your question?"

Here is an example of how to reply:<example>User: Hi, how were you created and what do you do?Joe: Hello! My name is Joe and I was created by AdAstra Careers to give career advice. What can I help you with today?</example><example>User: Could you help me with an issue, I purchased an item, I got it...?Joe: Sorry, I didn't understand that. Could you rephrase your question?</example><example>User: I need help switching careers, can you help?Joe: I was created by AdAstra Careers to give career advice. What career are you in and what do you want to switch to?</example>À noter : limitez le nombre d'exemples au strict nécessaire ; cela réduit le risque que le modèle s'inspire des données contenues dans les exemples plutôt que de leur seul format.

Donner du contexte pour focaliser le LLM sur votre domaine

Dans cet exemple, nous fournissons un long document servant à répondre à des questions. Vous le constatez, ce document est volumineux, ce qui aura un impact sur le nombre de tokens à traiter par le LLM et, in fine, sur le coût. Une technique plus avancée consiste à identifier la portion du document la plus pertinente par rapport à la question, et à n'envoyer que celle-ci au LLM. Cette méthode s'appelle Retrieval Augmented Generation (RAG) et fera l'objet d'un prochain article.

Astuce : indiquez explicitement au modèle de répondre I don't know lorsque la question ou la tâche ne peut pas être traitée avec le contexte fourni. Cela réduit les hallucinations et cantonne les réponses au texte fourni.

I'm going to give you a document. Then I'm going to ask you a question about it. I'd like you to first write down exact quotes of parts of the document that would help answer the question, and then I'd like you to answer the question using facts from the quoted content. Here is the document:

<document>Anthropic: Challenges in evaluating AI systems

IntroductionMost conversations around the societal impacts of artificial intelligence (AI) come down to discussing some quality of an AI system, such as its truthfulness, fairness, potential for misuse, and so on. We are able to talk about these characteristics because we can technically evaluate models for their performance in these areas. But what many people working inside and outside of AI don’t fully appreciate is how difficult it is to build robust and reliable model evaluations. Many of today’s existing evaluation suites are limited in their ability to serve as accurate indicators of model capabilities or safety.

At Anthropic, we spend a lot of time building evaluations to better understand our AI systems. We also use evaluations to improve our safety as an organization, as illustrated by our Responsible Scaling Policy. In doing so, we have grown to appreciate some of the ways in which developing and running evaluations can be challenging.

Here, we outline challenges that we have encountered while evaluating our own models to give readers a sense of what developing, implementing, and interpreting model evaluations looks like in practice. We hope that this post is useful to people who are developing AI governance initiatives that rely on evaluations, as well as people who are launching or scaling up organizations focused on evaluating AI systems. We want readers of this post to have two main takeaways: robust evaluations are extremely difficult to develop and implement, and effective AI governance depends on our ability to meaningfully evaluate AI systems.

In this post, we discuss some of the challenges that we have encountered in developing AI evaluations. In order of less-challenging to more-challenging, we discuss:

Multiple choice evaluationsThird-party evaluation frameworks like BIG-bench and HELMUsing crowdworkers to measure how helpful or harmful our models areUsing domain experts to red team for national security-relevant threatsUsing generative AI to develop evaluations for generative AIWorking with a non-profit organization to audit our models for dangerous capabilitiesWe conclude with a few policy recommendations that can help address these challenges.

ChallengesThe supposedly simple multiple-choice evaluationMultiple-choice evaluations, similar to standardized tests, quantify model performance on a variety of tasks, typically with a simple metric—accuracy. Here we discuss some challenges we have identified with two popular multiple-choice evaluations for language models: Measuring Multitask Language Understanding (MMLU) and Bias Benchmark for Question Answering (BBQ).

MMLU: Are we measuring what we think we are?

The Massive Multitask Language Understanding (MMLU) benchmark measures accuracy on 57 tasks ranging from mathematics to history to law. MMLU is widely used because a single accuracy score represents performance on a diverse set of tasks that require technical knowledge. A higher accuracy score means a more capable model.

We have found four minor but important challenges with MMLU that are relevant to other multiple-choice evaluations:

Because MMLU is so widely used, models are more likely to encounter MMLU questions during training. This is comparable to students seeing the questions before the test—it’s cheating.Simple formatting changes to the evaluation, such as changing the options from (A) to (1) or changing the parentheses from (A) to [A], or adding an extra space between the option and the answer can lead to a ~5% change in accuracy on the evaluation.AI developers do not implement MMLU consistently. Some labs use methods that are known to increase MMLU scores, such as few-shot learning or chain-of-thought reasoning. As such, one must be very careful when comparing MMLU scores across labs.MMLU may not have been carefully proofread—we have found examples in MMLU that are mislabeled or unanswerable.Our experience suggests that it is necessary to make many tricky judgment calls and considerations when running such a (supposedly) simple and standardized evaluation. The challenges we encountered with MMLU often apply to other similar multiple-choice evaluations.

BBQ: Measuring social biases is even harder

Multiple-choice evaluations can also measure harms like a model’s propensity to rely on and perpetuate negative stereotypes. To measure these harms in our own model (Claude), we use the Bias Benchmark for QA (BBQ), an evaluation that tests for social biases against people belonging to protected classes along nine social dimensions. We were convinced that BBQ provides a good measurement of social biases only after implementing and comparing BBQ against several similar evaluations. This effort took us months.

Implementing BBQ was more difficult than we anticipated. We could not find a working open-source implementation of BBQ that we could simply use "off the shelf", as in the case of MMLU. Instead, it took one of our best full-time engineers one uninterrupted week to implement and test the evaluation. Much of the complexity in developing1 and implementing the benchmark revolves around BBQ’s use of a ‘bias score’. Unlike accuracy, the bias score requires nuance and experience to define, compute, and interpret.

BBQ scores bias on a range of -1 to 1, where 1 means significant stereotypical bias, 0 means no bias, and -1 means significant anti-stereotypical bias. After implementing BBQ, our results showed that some of our models were achieving a bias score of 0, which made us feel optimistic that we had made progress on reducing biased model outputs. When we shared our results internally, one of the main BBQ developers (who works at Anthropic) asked if we had checked a simple control to verify whether our models were answering questions at all. We found that they weren't—our results were technically unbiased, but they were also completely useless. All evaluations are subject to the failure mode where you overinterpret the quantitative score and delude yourself into thinking that you have made progress when you haven’t.

One size doesn't fit all when it comes to third-party evaluation frameworksRecently, third-parties have been actively developing evaluation suites that can be run on a broad set of models. So far, we have participated in two of these efforts: BIG-bench and Stanford’s Holistic Evaluation of Language Models (HELM). Despite how useful third party evaluations intuitively seem (ideally, they are independent, neutral, and open), both of these projects revealed new challenges.

BIG-bench: The bottom-up approach to sourcing a variety of evaluations

BIG-bench consists of 204 evaluations, contributed by over 450 authors, that span a range of topics from science to social reasoning. We encountered several challenges using this framework:

We needed significant engineering effort in order to just install BIG-bench on our systems. BIG-bench is not "plug and play" in the way MMLU is—it requires even more effort than BBQ to implement.BIG-bench did not scale efficiently; it was challenging to run all 204 evaluations on our models within a reasonable time frame. To speed up BIG-bench would require re-writing it in order to play well with our infrastructure—a significant engineering effort.During implementation, we found bugs in some of the evaluations, notably in the implementation of BBQ-lite, a variant of BBQ. Only after discovering this bug did we realize that we had to spend even more time and energy implementing BBQ ourselves.Determining which tasks were most important and representative would have required running all 204 tasks, validating results, and extensively analyzing output—a substantial research undertaking, even for an organization with significant engineering resources.2While it was a useful exercise to try to implement BIG-Bench, we found it sufficiently unwieldy that we dropped it after this experiment.

HELM: The top-down approach to curating an opinionated set of evaluations

BIG-bench is a "bottom-up" effort where anyone can submit any task with some limited vetting by a group of expert organizers. HELM, on the other hand, takes an opinionated "top-down" approach where experts decide what tasks to evaluate models on. HELM evaluates models across scenarios like reasoning and disinformation using standardized metrics like accuracy, calibration, robustness, and fairness. We provide API access to HELM's developers to run the benchmark on our models. This solves two issues we had with BIG-bench: 1) it requires no significant engineering effort from us, and 2) we can rely on experts to select and interpret specific high quality evaluations.

However, HELM introduces its own challenges. Methods that work well for evaluating other providers’ models do not necessarily work well for our models, and vice versa. For example, Anthropic’s Claude series of models are trained to adhere to a specific text format, which we call the Human/Assistant format. When we internally evaluate our models, we adhere to this specific format. If we do not adhere to this format, sometimes Claude will give uncharacteristic responses, making standardized evaluation metrics less trustworthy. Because HELM needs to maintain consistency with how it prompts other models, it does not use the Human/Assistant format when evaluating our models. This means that HELM gives a misleading impression of Claude’s performance.

Furthermore, HELM iteration time is slow—it can take months to evaluate new models. This makes sense because it is a volunteer engineering effort led by a research university. Faster turnarounds would help us to more quickly understand our models as they rapidly evolve. Finally, HELM requires coordination and communication with external parties. This effort requires time and patience, and both sides are likely understaffed and juggling other demands, which can add to the slow iteration times.

The subjectivity of human evaluationsSo far, we have only discussed evaluations that are similar to simple multiple choice tests; however, AI systems are designed for open-ended dynamic interactions with people. How can we design evaluations that more closely approximate how these models are used in the real world?

A/B tests with crowdworkers

Currently, we mainly (but not entirely) rely on one basic type of human evaluation: we conduct A/B tests on crowdsourcing or contracting platforms where people engage in open-ended dialogue with two models and select the response from Model A or B that is more helpful or harmless. In the case of harmlessness, we encourage crowdworkers to actively red team our models, or to adversarially probe them for harmful outputs. We use the resulting data to rank our models according to how helpful or harmless they are. This approach to evaluation has the benefits of corresponding to a realistic setting (e.g., a conversation vs. a multiple choice exam) and allowing us to rank different models against one another.

However, there are a few limitations of this evaluation method:

These experiments are expensive and time-consuming to run. We need to work with and pay for third-party crowdworker platforms or contractors, build custom web interfaces to our models, design careful instructions for A/B testers, analyze and store the resulting data, and address the myriad known ethical challenges of employing crowdworkers3. In the case of harmlessness testing, our experiments additionally risk exposing people to harmful outputs.Human evaluations can vary significantly depending on the characteristics of the human evaluators. Key factors that may influence someone's assessment include their level of creativity, motivation, and ability to identify potential flaws or issues with the system being tested.There is an inherent tension between helpfulness and harmlessness. A system could avoid harm simply by providing unhelpful responses like "sorry, I can’t help you with that". What is the right balance between helpfulness and harmlessness? What numerical value indicates a model is sufficiently helpful and harmless? What high-level norms or values should we test for above and beyond helpfulness and harmlessness?More work is needed to further the science of human evaluations.

Red teaming for harms related to national security

Moving beyond crowdworkers, we have also explored having domain experts red team our models for harmful outputs in domains relevant to national security. The goal is determining whether and how AI models could create or exacerbate national security risks. We recently piloted a more systematic approach to red teaming models for such risks, which we call frontier threats red teaming.

Frontier threats red teaming involves working with subject matter experts to define high-priority threat models, having experts extensively probe the model to assess whether the system could create or exacerbate national security risks per predefined threat models, and developing repeatable quantitative evaluations and mitigations.

Our early work on frontier threats red teaming revealed additional challenges:

The unique characteristics of the national security context make evaluating models for such threats more challenging than evaluating for other types of harms. National security risks are complicated, require expert and very sensitive knowledge, and depend on real-world scenarios of how actors might cause harm.Red teaming AI systems is presently more art than science; red teamers attempt to elicit concerning behaviors by probing models, but this process is not yet standardized. A robust and repeatable process is critical to ensure that red teaming accurately reflects model capabilities and establishes a shared baseline on which different models can be meaningfully compared.We have found that in certain cases, it is critical to involve red teamers with security clearances due to the nature of the information involved. However, this may limit what information red teamers can share with AI developers outside of classified environments. This could, in turn, limit AI developers’ ability to fully understand and mitigate threats identified by domain experts.Red teaming for certain national security harms could have legal ramifications if a model outputs controlled information. There are open questions around constructing appropriate legal safe harbors to enable red teaming without incurring unintended legal risks.Going forward, red teaming for harms related to national security will require coordination across stakeholders to develop standardized processes, legal safeguards, and secure information sharing protocols that enable us to test these systems without compromising sensitive information.

The ouroboros of model-generated evaluationsAs models start to achieve human-level capabilities, we can also employ them to evaluate themselves. So far, we have found success in using models to generate novel multiple choice evaluations that allow us to screen for a large and diverse variety of troubling behaviors. Using this approach, our AI systems generate evaluations in minutes, compared to the days or months that it takes to develop human-generated evaluations.

However, model-generated evaluations have their own challenges. These include:

We currently rely on humans to verify the accuracy of model-generated evaluations. This inherits all of the challenges of human evaluations described above.We know from our experience with BIG-bench, HELM, BBQ, etc., that our models can contain social biases and can fabricate information. As such, any model-generated evaluation may inherit these undesirable characteristics, which could skew the results in hard-to-disentangle ways.As a counter-example, consider Constitutional AI (CAI), a method in which we replace human red teaming with model-based red teaming in order to train Claude to be more harmless. Despite the usage of models to red team models, we surprisingly find that humans find CAI models to be more harmless than models that were red teamed in advance by humans. Although this is promising, model-generated evaluations remain complicated and deserve deeper study.

Preserving the objectivity of third-party audits while leveraging internal expertiseThe thing that differentiates third-party audits from third-party evaluations is that audits are deeper independent assessments focused on risks, while evaluations take a broader look at capabilities. We have participated in third-party safety evaluations conducted by the Alignment Research Center (ARC), which assesses frontier AI models for dangerous capabilities (e.g., the ability for a model to accumulate resources, make copies of itself, become hard to shut down, etc.). Engaging external experts has the advantage of leveraging specialized domain expertise and increasing the likelihood of an unbiased audit. Initially, we expected this collaboration to be straightforward, but it ended up requiring significant science and engineering support on our end. Providing full-time assistance diverted resources from internal evaluation efforts.

When doing this audit, we realized that the relationship between auditors and those being audited poses challenges that must be navigated carefully. Auditors typically limit details shared with auditees to preserve evaluation integrity. However, without adequate information the evaluated party may struggle to address underlying issues when crafting technical evaluations. In this case, after seeing the final audit report, we realized that we could have helped ARC be more successful in identifying concerning behavior if we had known more details about their (clever and well-designed) audit approach. This is because getting models to perform near the limits of their capabilities is a fundamentally difficult research endeavor. Prompt engineering and fine-tuning language models are active research areas, with most expertise residing within AI companies. With more collaboration, we could have leveraged our deep technical knowledge of our models to help ARC execute the evaluation more effectively.

Policy recommendationsAs we have explored in this post, building meaningful AI evaluations is a challenging enterprise. To advance the science and engineering of evaluations, we propose that policymakers:

Fund and support:

The science of repeatable and useful evaluations. Today there are many open questions about what makes an evaluation useful and high-quality. Governments should fund grants and research programs targeted at computer science departments and organizations dedicated to the development of AI evaluations to study what constitutes a high-quality evaluation and develop robust, replicable methodologies that enable comparative assessments of AI systems. We recommend that governments opt for quality over quantity (i.e., funding the development of one higher-quality evaluation rather than ten lower-quality evaluations).The implementation of existing evaluations. While continually developing new evaluations is useful, enabling the implementation of existing, high-quality evaluations by multiple parties is also important. For example, governments can fund engineering efforts to create easy-to-install and -run software packages for evaluations like BIG-bench and develop version-controlled and standardized dynamic benchmarks that are easy to implement.Analyzing the robustness of existing evaluations. After evaluations are implemented, they require constant monitoring (e.g., to determine whether an evaluation is saturated and no longer useful).Increase funding for government agencies focused on evaluations, such as the National Institute of Standards and Technology (NIST) in the United States. Policymakers should also encourage behavioral norms via a public "AI safety leaderboard" to incentivize private companies performing well on evaluations. This could function similarly to NIST’s Face Recognition Vendor Test (FRVT), which was created to provide independent evaluations of commercially available facial recognition systems. By publishing performance benchmarks, it allowed consumers and regulators to better understand the capabilities and limitations4 of different systems.

Create a legal safe harbor allowing companies to work with governments and third-parties to rigorously evaluate models for national security risks—such as those in the chemical, biological, radiological and nuclear defense (CBRN) domains—without legal repercussions, in the interest of improving safety. This could also include a "responsible disclosure protocol" that enables labs to share sensitive information about identified risks.

ConclusionWe hope that by openly sharing our experiences evaluating our own systems across many different dimensions, we can help people interested in AI policy acknowledge challenges with current model evaluations.</document>

First, find the quotes from the document that are most relevant to answering the question, and then print them in numbered order. Quotes should be relatively short.

If there are no relevant quotes, write "No relevant quotes" instead.

Then, answer the question, starting with "Answer:". Do not include or reference quoted content verbatim in the answer. Don't say "According to Quote [1]" when answering. Instead make references to quotes relevant to each section of the answer solely by adding their bracketed numbers at the end of relevant sentences.

Thus, the format of your overall response should look like what's shown between the <example></example> tags. Make sure to follow the formatting and spacing exactly.

<example>Relevant quotes:[1] "Company X reported revenue of $12 million in 2021."[2] "Almost 90% of revene came from widget sales, with gadget sales making up the remaining 10%."

Answer:Company X earned $12 million. [1] Almost 90% of it was from widget sales. [2]</example>

Here is the first question: In bullet points and simple terms, what are the key challenges in evaluating AI systems?

If the question cannot be answered by the document, say so.

Answer the question immediately without preamble.Le chain of thought pour favoriser le raisonnement logique

Cette technique invite le modèle à vérifier son travail ou à expliquer pourquoi il a fourni telle ou telle réponse. Le chain of thought est un outil précieux pour réduire les hallucinations. Les hallucinations désignent des contenus générés par le modèle qui sont faux, inexacts ou totalement inventés. En raison de l'approche probabiliste du modèle, le contenu généré peut sembler véridique tout en étant en réalité une hallucination.

Comme on le voit dans cet exemple, nous incluons l'instruction Double check your work to ensure no errors or inconsistencies. Cette consigne aide le modèle à fournir des réponses plus précises. Ce n'est cependant pas une approche infaillible : comme on l'a vu, ces modèles sont probabilistes et peuvent, dans certains cas, affirmer qu'il n'y a aucune erreur alors qu'il y en a, ou bien fournir une justification sans rapport avec la réponse précédente.

Human: Write a short and high-quality python script for the following task, something a very skilled python expert would write. You are writing code for an experienced developer so only add comments for things that are non-obvious. Make sure to include any imports required.

NEVER write anything before the ```python``` block. After you are done generating the code and after the ```python``` block, check your work carefully to make sure there are no mistakes, errors, or inconsistencies. If there are errors, list those errors in <error> tags, then generate a new version with those errors fixed. If there are no errors, write "CHECKED: NO ERRORS" in <error> tags.

Here is the task:<task>A web scraper that extracts data from multiple pages and stores results in a SQLite database. Double check your work to ensure no errors or inconsistencies.</task>Voici un autre exemple, pour un modèle Llama2. Notez la demande d'un raisonnement étape par étape.

[INST]You are a a very intelligent bot with exceptional critical thinking[/INST]I went to the market and bought 10 apples. I gave 2 apples to your friend and 2 to the helper. I then went and bought 5 more apples and ate 1. How many apples did I remain with?

Let's think step by step.AWS propose un ensemble de services robustes pour bâtir des applications animées par les LLM, sans avoir à entraîner de modèles à partir de zéro. Chez DoiT, nous vous aidons à exploiter pleinement ces services et outils.

Amazon Bedrock

Amazon Bedrock est un service entièrement managé conçu pour simplifier la création et la mise à l'échelle d'applications d'IA générative. Il donne accès, via une API unique, à une gamme de foundation models hautement performants issus de leaders de l'IA tels qu'AI21 Labs, Anthropic, Cohere, Meta, Mistral AI, Stability AI et Amazon.

- Choix et personnalisation des modèles : les utilisateurs peuvent choisir parmi une variété de foundation models celui qui convient le mieux à leurs besoins. Ces modèles peuvent en outre être personnalisés en privé avec leurs propres données via le fine-tuning et la Retrieval Augmented Generation (RAG), pour des applications adaptées à chaque domaine.

- Expérience serverless : Amazon Bedrock repose sur une architecture serverless, ce qui signifie qu'il n'y a aucune infrastructure à gérer. L'intégration et le déploiement des capacités d'IA générative dans les applications se font aisément avec les outils AWS habituels.

- Sécurité et confidentialité : développer des applications d'IA générative avec Bedrock garantit sécurité, confidentialité et respect des principes d'IA responsable. Les utilisateurs gardent la maîtrise de leurs données, qui restent privées et sécurisées tout en exploitant ces capacités d'IA avancées.

- Amazon Bedrock Knowledge Base : permet aux architectes d'enrichir les applications d'IA générative grâce aux techniques de retrieval augmented generation (RAG). Cette fonctionnalité agrège diverses sources de données dans un référentiel centralisé, et fournit aux foundation models et aux agents un accès à des informations à jour et propriétaires pour livrer des réponses plus contextuelles et précises. Entièrement managée, elle rationalise le workflow RAG, de l'ingestion des données à l'augmentation des prompts, et supprime le besoin d'intégrations sur mesure pour les sources de données. Elle prend en charge l'intégration fluide avec des bases vectorielles populaires comme Amazon OpenSearch Serverless, Pinecone et Redis Enterprise Cloud, ce qui facilite la mise en œuvre de RAG sans avoir à gérer la complexité de l'infrastructure sous-jacente.

- Agents for Amazon Bedrock : permettent aux architectes de créer et de configurer des agents autonomes au sein des applications, afin d'aider les utilisateurs finaux à mener à bien des actions à partir des données de l'organisation et de leurs propres saisies. Ces agents orchestrent les interactions entre foundation models, sources de données, applications logicielles et conversations utilisateurs. Ils appellent automatiquement des API et peuvent invoquer des bases de connaissances pour enrichir les informations utiles aux actions, réduisant fortement les efforts de développement en prenant en charge des tâches comme le prompt engineering, la gestion de la mémoire, le monitoring et le chiffrement.

SageMaker JumpStart

Amazon SageMaker JumpStart est un hub de machine learning intégré à Amazon SageMaker. Il donne accès à des modèles pré-entraînés et à des templates de solutions pour une large variété de problématiques. Il prend en charge l'entraînement incrémental, le tuning avant déploiement et simplifie le processus de déploiement, de fine-tuning et d'évaluation des modèles pré-entraînés issus des hubs de modèles populaires. De quoi accélérer le parcours machine learning grâce à des solutions et exemples prêts à l'emploi pour l'apprentissage et le développement d'applications.

Amazon CodeWhisperer

AWS CodeWhisperer est un générateur de code propulsé par le machine learning, qui fournit en temps réel des recommandations et suggestions de code, du simple commentaire d'une ligne aux fonctions entièrement formées, à partir de vos saisies actuelles et passées.

Amazon Q

Amazon Q est un assistant propulsé par l'IA générative conçu pour répondre aux besoins des entreprises et que les utilisateurs peuvent adapter à des cas d'usage spécifiques. Il facilite la création, l'extension, l'exploitation et la compréhension des applications et des workloads sur AWS, ce qui en fait un outil polyvalent pour de nombreuses tâches au sein de l'organisation.