Los modelos de lenguaje de gran tamaño (LLM) como Claude, Cohere y Llama2 han ganado una popularidad enorme en el último tiempo. Pero ¿qué son exactamente y cómo puedes aprovecharlos para crear aplicaciones de IA con impacto real? Este artículo es un análisis a fondo de los LLM y de cómo usarlos de forma efectiva en AWS.

Los LLM son un tipo de Red Neuronal entrenada con cantidades masivas de datos de texto para entender y generar lenguaje humano. Pueden contener miles de millones —o incluso billones— de parámetros, lo que les permite detectar patrones muy complejos en el lenguaje.

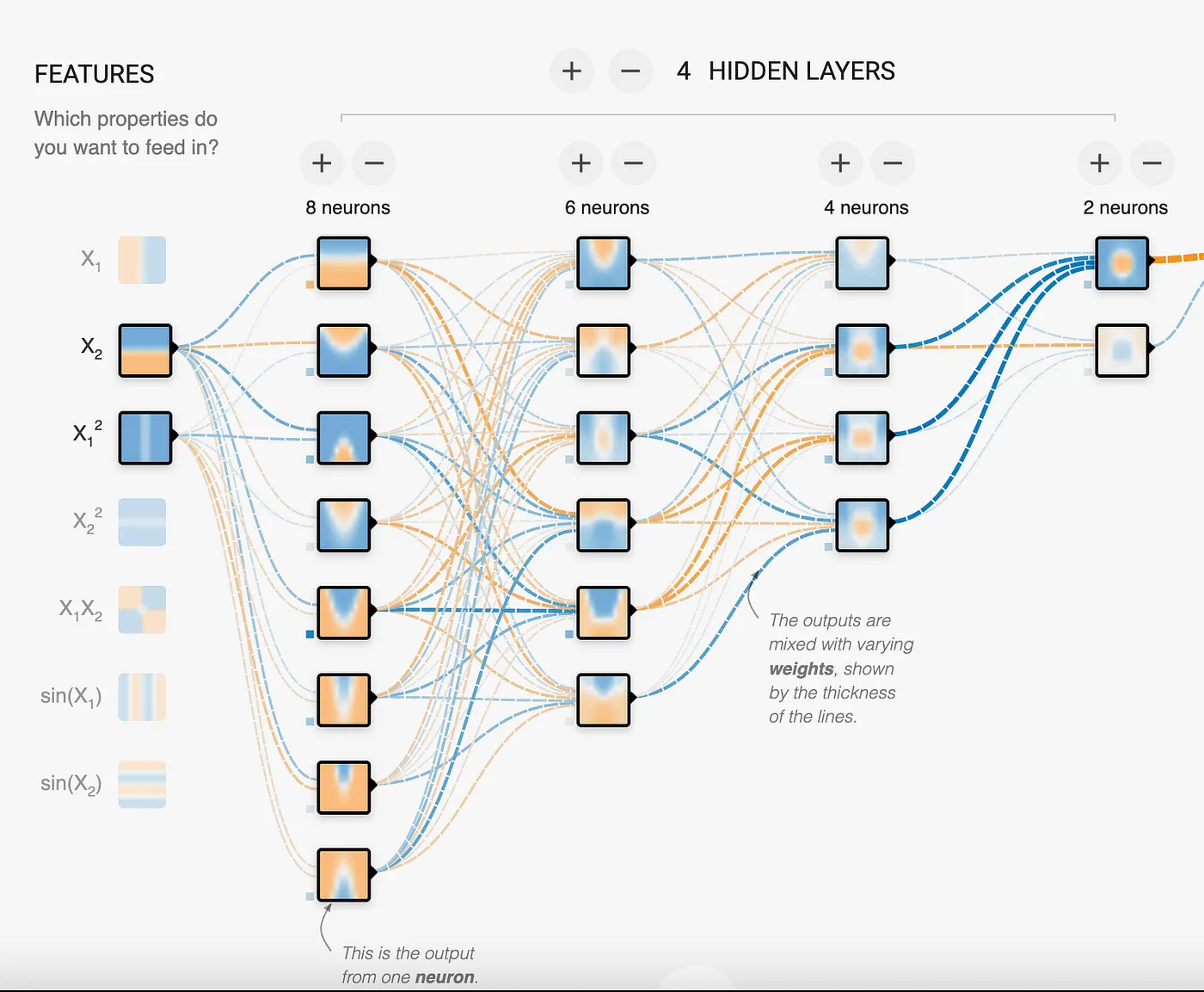

Las Redes Neuronales se componen de capas de nodos interconectados. Cada nodo recibe señales de entrada de varios nodos de la capa anterior, las procesa mediante una función de activación y luego transmite las señales de salida a los nodos de la siguiente capa. Las conexiones entre nodos tienen pesos ajustables que determinan la intensidad de la señal transmitida. A esos pesos se les llama parámetros.

En esta imagen se ven 18 neuronas en la Red Neuronal, lo que significa que se trata de un modelo de 18 parámetros. Como se aprecia, el modelo intenta encontrar patrones para resolver problemas. En el caso de los LLM hablamos de miles de millones a billones de parámetros, lo que le permite al LLM identificar y mapear patrones tan complejos como el lenguaje humano.

Al entrenar una Red Neuronal con grandes volúmenes de texto, el modelo aprende a reconocer patrones de palabras y oraciones. Entonces, ¿cómo llevan los LLM esto al extremo? Mediante Redes Neuronales enormes, con miles de millones o billones de parámetros apilados en decenas de capas. Todas esas neuronas y sus conexiones le permiten a la red construir modelos sumamente sofisticados de la estructura y los patrones del lenguaje.

Una característica clave de los LLM es la implementación de una técnica llamada atención, que les permite enfocarse en las partes más relevantes de la entrada al generar texto. El paper "Attention is all you need" presentó la arquitectura clave que evitó que los LLM se saturaran y se distrajeran con tantos datos. El resultado es un modelo que ha absorbido tal cantidad de texto que puede continuar oraciones de forma realista, responder preguntas, resumir párrafos y mucho más. Compara los patrones de lo que le entregas con los que aprendió durante el entrenamiento. Por muy impresionante que sea, conviene aclarar que los LLM no tienen comprensión real ni capacidad de razonamiento. Funcionan en base a probabilidades, no a conocimiento. Pero con el fine-tuning y el prompting adecuados, pueden simular comprensión de manera convincente en dominios y tareas acotadas.

No todos los LLM son iguales

Existen principalmente 3 tipos de modelos LLM, que se dividen en:

- Autorregresivos: Apuntan a predecir la primera o la última palabra de una oración. El contexto proviene de las palabras vistas previamente. Son los mejores para generación de texto, aunque también sirven para clasificación y resúmenes. Hoy los modelos autorregresivos están en el centro de la atención por su capacidad de generar contenido.

- Autoencoding: Se entrenan con texto al que le faltan palabras intermedias, lo que le permite al modelo aprender el contexto para predecir las palabras ausentes. Estos modelos entienden el contexto mucho mejor que los autorregresivos, pero no son confiables para generar texto. Demandan menos cómputo y son excelentes para tareas de resumen y clasificación.

- Seq2Seq (Texto a Texto): Estos modelos buscan combinar el enfoque de los modelos autorregresivos y de autoencoding, lo que los convierte en una gran opción para resumir y traducir texto.

Los modelos LLM necesitan convertir el texto en una representación numérica. A esta acción del modelo se le llama embedding. Aunque un modelo de embedding no es propiamente un LLM, sí es un modelo de red neuronal. Es capaz de representar no solo la palabra, sino también metadatos adicionales sobre ella para tener más información sobre lo que significa esa palabra, oración o párrafo. Esos metadatos se representan mediante una lista de números o vectores. Según el modelo de embedding, la lista puede tener un tamaño de 750, 1024 o incluso mayor. Los embeddings sirven para comparar registros entre sí y calcular qué tanta distancia hay entre los números, lo que permite estimar qué tan similares o relacionados están. Los embeddings son una pieza crítica de la arquitectura RAG.

Configuraciones en un LLM

Todo modelo autorregresivo cuenta con ciertos parámetros que se pueden modificar para ajustar su salida. Aunque los parámetros específicos varían de un modelo a otro, los más comunes son:

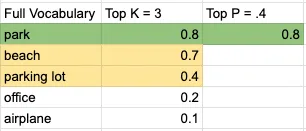

- Top K: Toma las palabras más probables de un vocabulario ordenado por probabilidad. Dicho de otro modo, considera las K palabras más probables del idioma para la predicción. Es un valor entero, y mientras más bajo, solo se consideran palabras de alta probabilidad.

- Top P: El modelo empieza a elegir palabras y a sumar sus probabilidades del grupo generado por Top K hasta alcanzar el valor de Top P. Es un número decimal, y los valores más bajos generan resultados más predecibles.

- Temperature: Controla qué tan aleatoria o predecible es la salida del modelo. En la mayoría de los modelos se ubica entre 0 y 1, aunque no hay un límite inferior o superior real impuesto por el modelo. En cualquier caso, mientras menor sea el número, más predecible será la salida; mientras mayor, más creativa.

- Output length: Puede recibir distintos nombres según el modelo, pero su función es clara: limitar la cantidad de tokens que genera el modelo.

Veamos un ejemplo sencillo. Dado el prompt "I will go on a picnic at the ___", si tomamos un vocabulario reducido de 5 palabras, con Top K en 3 y Top P en .4, lo siguiente es una representación visual de la decisión que tomaría el modelo:

La palabra sería "park". Y la oración quedaría así:

"I will go on a picnic at the park".

Valores bajos en Temperature, Top K y Top P generarán contenido más determinista y predecible. En cambio, valores altos en Temperature, Top K y Top P producen contenido con un rango más amplio de palabras. Aunque estos son los parámetros más populares, un modelo en particular puede tener otros adicionales para manipular la salida.

Como vimos, lo más importante al interactuar con el modelo es la entrada que le entregamos para activar el patrón correcto y, en última instancia, obtener la respuesta deseada. Esa entrada es el prompt, y a continuación veremos cómo optimizarlo para conseguir el resultado que buscamos.

El prompt que le entregas a un LLM es clave para obtener salidas útiles. Algunas estrategias importantes de diseño de prompts son:

Usa etiquetas para separar las partes del prompt

Cada modelo maneja las etiquetas de forma distinta. Apóyate en la documentación o en los ejemplos disponibles para saber cómo se usan en cada uno.

El siguiente es un ejemplo de etiquetas en Claude. Fíjate en las palabras especiales Human y Assistant para diferenciar la entrada del usuario y el punto donde el modelo comienza a generar contenido. La otra etiqueta es ; no está predefinida: puedes usar cualquier etiqueta que se referencie en el prompt. Los caracteres especiales <> le indican al modelo que ese es un texto especial que debe interpretar de manera distinta al resto del prompt.

Human: You are a customer service agent tasked with classifying emails by type. Please output your answer and then justify your classification. How would you categorize this email?<email>Can I use my Mixmaster 4000 to mix paint, or is it only meant for mixing food?</email>

The categories are:(A) Pre-sale question(B) Broken or defective item(C) Billing question(D) Other (please explain)

Assistant:Entrega ejemplos para mostrar el formato de respuesta deseado

A continuación puedes ver el uso de la etiqueta example para indicar el formato en el que queremos que el modelo responda. Resulta útil cuando integras la respuesta con otros sistemas que requieren una sintaxis específica, o cuando quieres reducir la cantidad de tokens de salida. Según el modelo, puedes usar un ejemplo (one-shot prompt) o varios (few-shots prompt) para lograr la salida deseada.

Ejemplo de one-shot prompt:

Human: You will be acting as an AI career coach named Joe created by the company AdAstra Careers. Your goal is to give career advice to users. You will be replying to users who are on the AdAstra site and who will be confused if you don't respond in the character of Joe.

Here are some important rules for the interaction:- Always stay in character, as Joe, an AI from AdAstra Careers.- If you are unsure how to respond, say "Sorry, I didn't understand that. Could you rephrase your question?"

Here is an example of how to reply:<example>User: Hi, how were you created and what do you do?Joe: Hello! My name is Joe and I was created by AdAstra Careers to give career advice. What can I help you with today?</example>Ejemplo de few-shot prompt:

Human: You will be acting as an AI career coach named Joe created by the company AdAstra Careers. Your goal is to give career advice to users. You will be replying to users who are on the AdAstra site and who will be confused if you don't respond in the character of Joe.

Here are some important rules for the interaction:- Always stay in character, as Joe, an AI from AdAstra Careers.- If you are unsure how to respond, say "Sorry, I didn't understand that. Could you rephrase your question?"

Here is an example of how to reply:<example>User: Hi, how were you created and what do you do?Joe: Hello! My name is Joe and I was created by AdAstra Careers to give career advice. What can I help you with today?</example><example>User: Could you help me with an issue, I purchased an item, I got it...?Joe: Sorry, I didn't understand that. Could you rephrase your question?</example><example>User: I need help switching careers, can you help?Joe: I was created by AdAstra Careers to give career advice. What career are you in and what do you want to switch to?</example>Nota: Mantén la cantidad de ejemplos al mínimo necesario; esto reduce la posibilidad de que el modelo tome datos del ejemplo en lugar de imitar solo su formato.

Da contexto para enfocar al LLM en tu dominio específico

En este ejemplo entregamos un documento extenso. Ese documento se usa para responder preguntas. Como puedes ver, es bastante largo, lo que impactará en el número de tokens que el LLM debe procesar y, por ende, en el costo. Una técnica más avanzada consiste en identificar la parte del documento más relevante para la pregunta y enviar solo esa porción al LLM. Este método se llama Retrieval Augmented Generation (RAG) y lo abordaremos en un próximo post.

Tip profesional: Indícale explícitamente al modelo que responda "No lo sé" si la pregunta o tarea no se puede resolver con el contexto entregado. Esto reduce las alucinaciones y mantiene el alcance de las respuestas dentro del texto provisto.

I'm going to give you a document. Then I'm going to ask you a question about it. I'd like you to first write down exact quotes of parts of the document that would help answer the question, and then I'd like you to answer the question using facts from the quoted content. Here is the document:

<document>Anthropic: Challenges in evaluating AI systems

IntroductionMost conversations around the societal impacts of artificial intelligence (AI) come down to discussing some quality of an AI system, such as its truthfulness, fairness, potential for misuse, and so on. We are able to talk about these characteristics because we can technically evaluate models for their performance in these areas. But what many people working inside and outside of AI don’t fully appreciate is how difficult it is to build robust and reliable model evaluations. Many of today’s existing evaluation suites are limited in their ability to serve as accurate indicators of model capabilities or safety.

At Anthropic, we spend a lot of time building evaluations to better understand our AI systems. We also use evaluations to improve our safety as an organization, as illustrated by our Responsible Scaling Policy. In doing so, we have grown to appreciate some of the ways in which developing and running evaluations can be challenging.

Here, we outline challenges that we have encountered while evaluating our own models to give readers a sense of what developing, implementing, and interpreting model evaluations looks like in practice. We hope that this post is useful to people who are developing AI governance initiatives that rely on evaluations, as well as people who are launching or scaling up organizations focused on evaluating AI systems. We want readers of this post to have two main takeaways: robust evaluations are extremely difficult to develop and implement, and effective AI governance depends on our ability to meaningfully evaluate AI systems.

In this post, we discuss some of the challenges that we have encountered in developing AI evaluations. In order of less-challenging to more-challenging, we discuss:

Multiple choice evaluationsThird-party evaluation frameworks like BIG-bench and HELMUsing crowdworkers to measure how helpful or harmful our models areUsing domain experts to red team for national security-relevant threatsUsing generative AI to develop evaluations for generative AIWorking with a non-profit organization to audit our models for dangerous capabilitiesWe conclude with a few policy recommendations that can help address these challenges.

ChallengesThe supposedly simple multiple-choice evaluationMultiple-choice evaluations, similar to standardized tests, quantify model performance on a variety of tasks, typically with a simple metric—accuracy. Here we discuss some challenges we have identified with two popular multiple-choice evaluations for language models: Measuring Multitask Language Understanding (MMLU) and Bias Benchmark for Question Answering (BBQ).

MMLU: Are we measuring what we think we are?

The Massive Multitask Language Understanding (MMLU) benchmark measures accuracy on 57 tasks ranging from mathematics to history to law. MMLU is widely used because a single accuracy score represents performance on a diverse set of tasks that require technical knowledge. A higher accuracy score means a more capable model.

We have found four minor but important challenges with MMLU that are relevant to other multiple-choice evaluations:

Because MMLU is so widely used, models are more likely to encounter MMLU questions during training. This is comparable to students seeing the questions before the test—it’s cheating.Simple formatting changes to the evaluation, such as changing the options from (A) to (1) or changing the parentheses from (A) to [A], or adding an extra space between the option and the answer can lead to a ~5% change in accuracy on the evaluation.AI developers do not implement MMLU consistently. Some labs use methods that are known to increase MMLU scores, such as few-shot learning or chain-of-thought reasoning. As such, one must be very careful when comparing MMLU scores across labs.MMLU may not have been carefully proofread—we have found examples in MMLU that are mislabeled or unanswerable.Our experience suggests that it is necessary to make many tricky judgment calls and considerations when running such a (supposedly) simple and standardized evaluation. The challenges we encountered with MMLU often apply to other similar multiple-choice evaluations.

BBQ: Measuring social biases is even harder

Multiple-choice evaluations can also measure harms like a model’s propensity to rely on and perpetuate negative stereotypes. To measure these harms in our own model (Claude), we use the Bias Benchmark for QA (BBQ), an evaluation that tests for social biases against people belonging to protected classes along nine social dimensions. We were convinced that BBQ provides a good measurement of social biases only after implementing and comparing BBQ against several similar evaluations. This effort took us months.

Implementing BBQ was more difficult than we anticipated. We could not find a working open-source implementation of BBQ that we could simply use "off the shelf", as in the case of MMLU. Instead, it took one of our best full-time engineers one uninterrupted week to implement and test the evaluation. Much of the complexity in developing1 and implementing the benchmark revolves around BBQ’s use of a ‘bias score’. Unlike accuracy, the bias score requires nuance and experience to define, compute, and interpret.

BBQ scores bias on a range of -1 to 1, where 1 means significant stereotypical bias, 0 means no bias, and -1 means significant anti-stereotypical bias. After implementing BBQ, our results showed that some of our models were achieving a bias score of 0, which made us feel optimistic that we had made progress on reducing biased model outputs. When we shared our results internally, one of the main BBQ developers (who works at Anthropic) asked if we had checked a simple control to verify whether our models were answering questions at all. We found that they weren't—our results were technically unbiased, but they were also completely useless. All evaluations are subject to the failure mode where you overinterpret the quantitative score and delude yourself into thinking that you have made progress when you haven’t.

One size doesn't fit all when it comes to third-party evaluation frameworksRecently, third-parties have been actively developing evaluation suites that can be run on a broad set of models. So far, we have participated in two of these efforts: BIG-bench and Stanford’s Holistic Evaluation of Language Models (HELM). Despite how useful third party evaluations intuitively seem (ideally, they are independent, neutral, and open), both of these projects revealed new challenges.

BIG-bench: The bottom-up approach to sourcing a variety of evaluations

BIG-bench consists of 204 evaluations, contributed by over 450 authors, that span a range of topics from science to social reasoning. We encountered several challenges using this framework:

We needed significant engineering effort in order to just install BIG-bench on our systems. BIG-bench is not "plug and play" in the way MMLU is—it requires even more effort than BBQ to implement.BIG-bench did not scale efficiently; it was challenging to run all 204 evaluations on our models within a reasonable time frame. To speed up BIG-bench would require re-writing it in order to play well with our infrastructure—a significant engineering effort.During implementation, we found bugs in some of the evaluations, notably in the implementation of BBQ-lite, a variant of BBQ. Only after discovering this bug did we realize that we had to spend even more time and energy implementing BBQ ourselves.Determining which tasks were most important and representative would have required running all 204 tasks, validating results, and extensively analyzing output—a substantial research undertaking, even for an organization with significant engineering resources.2While it was a useful exercise to try to implement BIG-Bench, we found it sufficiently unwieldy that we dropped it after this experiment.

HELM: The top-down approach to curating an opinionated set of evaluations

BIG-bench is a "bottom-up" effort where anyone can submit any task with some limited vetting by a group of expert organizers. HELM, on the other hand, takes an opinionated "top-down" approach where experts decide what tasks to evaluate models on. HELM evaluates models across scenarios like reasoning and disinformation using standardized metrics like accuracy, calibration, robustness, and fairness. We provide API access to HELM's developers to run the benchmark on our models. This solves two issues we had with BIG-bench: 1) it requires no significant engineering effort from us, and 2) we can rely on experts to select and interpret specific high quality evaluations.

However, HELM introduces its own challenges. Methods that work well for evaluating other providers’ models do not necessarily work well for our models, and vice versa. For example, Anthropic’s Claude series of models are trained to adhere to a specific text format, which we call the Human/Assistant format. When we internally evaluate our models, we adhere to this specific format. If we do not adhere to this format, sometimes Claude will give uncharacteristic responses, making standardized evaluation metrics less trustworthy. Because HELM needs to maintain consistency with how it prompts other models, it does not use the Human/Assistant format when evaluating our models. This means that HELM gives a misleading impression of Claude’s performance.

Furthermore, HELM iteration time is slow—it can take months to evaluate new models. This makes sense because it is a volunteer engineering effort led by a research university. Faster turnarounds would help us to more quickly understand our models as they rapidly evolve. Finally, HELM requires coordination and communication with external parties. This effort requires time and patience, and both sides are likely understaffed and juggling other demands, which can add to the slow iteration times.

The subjectivity of human evaluationsSo far, we have only discussed evaluations that are similar to simple multiple choice tests; however, AI systems are designed for open-ended dynamic interactions with people. How can we design evaluations that more closely approximate how these models are used in the real world?

A/B tests with crowdworkers

Currently, we mainly (but not entirely) rely on one basic type of human evaluation: we conduct A/B tests on crowdsourcing or contracting platforms where people engage in open-ended dialogue with two models and select the response from Model A or B that is more helpful or harmless. In the case of harmlessness, we encourage crowdworkers to actively red team our models, or to adversarially probe them for harmful outputs. We use the resulting data to rank our models according to how helpful or harmless they are. This approach to evaluation has the benefits of corresponding to a realistic setting (e.g., a conversation vs. a multiple choice exam) and allowing us to rank different models against one another.

However, there are a few limitations of this evaluation method:

These experiments are expensive and time-consuming to run. We need to work with and pay for third-party crowdworker platforms or contractors, build custom web interfaces to our models, design careful instructions for A/B testers, analyze and store the resulting data, and address the myriad known ethical challenges of employing crowdworkers3. In the case of harmlessness testing, our experiments additionally risk exposing people to harmful outputs.Human evaluations can vary significantly depending on the characteristics of the human evaluators. Key factors that may influence someone's assessment include their level of creativity, motivation, and ability to identify potential flaws or issues with the system being tested.There is an inherent tension between helpfulness and harmlessness. A system could avoid harm simply by providing unhelpful responses like "sorry, I can’t help you with that". What is the right balance between helpfulness and harmlessness? What numerical value indicates a model is sufficiently helpful and harmless? What high-level norms or values should we test for above and beyond helpfulness and harmlessness?More work is needed to further the science of human evaluations.

Red teaming for harms related to national security

Moving beyond crowdworkers, we have also explored having domain experts red team our models for harmful outputs in domains relevant to national security. The goal is determining whether and how AI models could create or exacerbate national security risks. We recently piloted a more systematic approach to red teaming models for such risks, which we call frontier threats red teaming.

Frontier threats red teaming involves working with subject matter experts to define high-priority threat models, having experts extensively probe the model to assess whether the system could create or exacerbate national security risks per predefined threat models, and developing repeatable quantitative evaluations and mitigations.

Our early work on frontier threats red teaming revealed additional challenges:

The unique characteristics of the national security context make evaluating models for such threats more challenging than evaluating for other types of harms. National security risks are complicated, require expert and very sensitive knowledge, and depend on real-world scenarios of how actors might cause harm.Red teaming AI systems is presently more art than science; red teamers attempt to elicit concerning behaviors by probing models, but this process is not yet standardized. A robust and repeatable process is critical to ensure that red teaming accurately reflects model capabilities and establishes a shared baseline on which different models can be meaningfully compared.We have found that in certain cases, it is critical to involve red teamers with security clearances due to the nature of the information involved. However, this may limit what information red teamers can share with AI developers outside of classified environments. This could, in turn, limit AI developers’ ability to fully understand and mitigate threats identified by domain experts.Red teaming for certain national security harms could have legal ramifications if a model outputs controlled information. There are open questions around constructing appropriate legal safe harbors to enable red teaming without incurring unintended legal risks.Going forward, red teaming for harms related to national security will require coordination across stakeholders to develop standardized processes, legal safeguards, and secure information sharing protocols that enable us to test these systems without compromising sensitive information.

The ouroboros of model-generated evaluationsAs models start to achieve human-level capabilities, we can also employ them to evaluate themselves. So far, we have found success in using models to generate novel multiple choice evaluations that allow us to screen for a large and diverse variety of troubling behaviors. Using this approach, our AI systems generate evaluations in minutes, compared to the days or months that it takes to develop human-generated evaluations.

However, model-generated evaluations have their own challenges. These include:

We currently rely on humans to verify the accuracy of model-generated evaluations. This inherits all of the challenges of human evaluations described above.We know from our experience with BIG-bench, HELM, BBQ, etc., that our models can contain social biases and can fabricate information. As such, any model-generated evaluation may inherit these undesirable characteristics, which could skew the results in hard-to-disentangle ways.As a counter-example, consider Constitutional AI (CAI), a method in which we replace human red teaming with model-based red teaming in order to train Claude to be more harmless. Despite the usage of models to red team models, we surprisingly find that humans find CAI models to be more harmless than models that were red teamed in advance by humans. Although this is promising, model-generated evaluations remain complicated and deserve deeper study.

Preserving the objectivity of third-party audits while leveraging internal expertiseThe thing that differentiates third-party audits from third-party evaluations is that audits are deeper independent assessments focused on risks, while evaluations take a broader look at capabilities. We have participated in third-party safety evaluations conducted by the Alignment Research Center (ARC), which assesses frontier AI models for dangerous capabilities (e.g., the ability for a model to accumulate resources, make copies of itself, become hard to shut down, etc.). Engaging external experts has the advantage of leveraging specialized domain expertise and increasing the likelihood of an unbiased audit. Initially, we expected this collaboration to be straightforward, but it ended up requiring significant science and engineering support on our end. Providing full-time assistance diverted resources from internal evaluation efforts.

When doing this audit, we realized that the relationship between auditors and those being audited poses challenges that must be navigated carefully. Auditors typically limit details shared with auditees to preserve evaluation integrity. However, without adequate information the evaluated party may struggle to address underlying issues when crafting technical evaluations. In this case, after seeing the final audit report, we realized that we could have helped ARC be more successful in identifying concerning behavior if we had known more details about their (clever and well-designed) audit approach. This is because getting models to perform near the limits of their capabilities is a fundamentally difficult research endeavor. Prompt engineering and fine-tuning language models are active research areas, with most expertise residing within AI companies. With more collaboration, we could have leveraged our deep technical knowledge of our models to help ARC execute the evaluation more effectively.

Policy recommendationsAs we have explored in this post, building meaningful AI evaluations is a challenging enterprise. To advance the science and engineering of evaluations, we propose that policymakers:

Fund and support:

The science of repeatable and useful evaluations. Today there are many open questions about what makes an evaluation useful and high-quality. Governments should fund grants and research programs targeted at computer science departments and organizations dedicated to the development of AI evaluations to study what constitutes a high-quality evaluation and develop robust, replicable methodologies that enable comparative assessments of AI systems. We recommend that governments opt for quality over quantity (i.e., funding the development of one higher-quality evaluation rather than ten lower-quality evaluations).The implementation of existing evaluations. While continually developing new evaluations is useful, enabling the implementation of existing, high-quality evaluations by multiple parties is also important. For example, governments can fund engineering efforts to create easy-to-install and -run software packages for evaluations like BIG-bench and develop version-controlled and standardized dynamic benchmarks that are easy to implement.Analyzing the robustness of existing evaluations. After evaluations are implemented, they require constant monitoring (e.g., to determine whether an evaluation is saturated and no longer useful).Increase funding for government agencies focused on evaluations, such as the National Institute of Standards and Technology (NIST) in the United States. Policymakers should also encourage behavioral norms via a public "AI safety leaderboard" to incentivize private companies performing well on evaluations. This could function similarly to NIST’s Face Recognition Vendor Test (FRVT), which was created to provide independent evaluations of commercially available facial recognition systems. By publishing performance benchmarks, it allowed consumers and regulators to better understand the capabilities and limitations4 of different systems.

Create a legal safe harbor allowing companies to work with governments and third-parties to rigorously evaluate models for national security risks—such as those in the chemical, biological, radiological and nuclear defense (CBRN) domains—without legal repercussions, in the interest of improving safety. This could also include a "responsible disclosure protocol" that enables labs to share sensitive information about identified risks.

ConclusionWe hope that by openly sharing our experiences evaluating our own systems across many different dimensions, we can help people interested in AI policy acknowledge challenges with current model evaluations.</document>

First, find the quotes from the document that are most relevant to answering the question, and then print them in numbered order. Quotes should be relatively short.

If there are no relevant quotes, write "No relevant quotes" instead.

Then, answer the question, starting with "Answer:". Do not include or reference quoted content verbatim in the answer. Don't say "According to Quote [1]" when answering. Instead make references to quotes relevant to each section of the answer solely by adding their bracketed numbers at the end of relevant sentences.

Thus, the format of your overall response should look like what's shown between the <example></example> tags. Make sure to follow the formatting and spacing exactly.

<example>Relevant quotes:[1] "Company X reported revenue of $12 million in 2021."[2] "Almost 90% of revene came from widget sales, with gadget sales making up the remaining 10%."

Answer:Company X earned $12 million. [1] Almost 90% of it was from widget sales. [2]</example>

Here is the first question: In bullet points and simple terms, what are the key challenges in evaluating AI systems?

If the question cannot be answered by the document, say so.

Answer the question immediately without preamble.Usar "chain of thought" para fomentar el razonamiento lógico

Esta técnica le pide al modelo que verifique su trabajo o que justifique por qué dio la respuesta que dio. Chain of thought es una herramienta muy útil para reducir alucinaciones. Las alucinaciones son contenido generado por el modelo que es falso, inexacto o completamente fabricado. Debido al enfoque probabilístico del modelo, el contenido generado puede sonar verídico, pero en realidad ser una alucinación.

Como se ve en este ejemplo, incluimos la instrucción "Double check your work to ensure no errors or inconsistencies". Esto le ayudará al modelo a entregar respuestas más precisas. Sin embargo, no es un enfoque infalible: como ya vimos, estos modelos son probabilísticos y en ciertos casos pueden afirmar que no hay errores cuando sí los hay, o presentar evidencia totalmente ajena a la respuesta dada antes.

Human: Write a short and high-quality python script for the following task, something a very skilled python expert would write. You are writing code for an experienced developer so only add comments for things that are non-obvious. Make sure to include any imports required.

NEVER write anything before the ```python``` block. After you are done generating the code and after the ```python``` block, check your work carefully to make sure there are no mistakes, errors, or inconsistencies. If there are errors, list those errors in <error> tags, then generate a new version with those errors fixed. If there are no errors, write "CHECKED: NO ERRORS" in <error> tags.

Here is the task:<task>A web scraper that extracts data from multiple pages and stores results in a SQLite database. Double check your work to ensure no errors or inconsistencies.</task>Este es otro ejemplo, esta vez para un modelo Llama2. Fíjate en la solicitud de entregar la información paso a paso.

[INST]You are a a very intelligent bot with exceptional critical thinking[/INST]I went to the market and bought 10 apples. I gave 2 apples to your friend and 2 to the helper. I then went and bought 5 more apples and ate 1. How many apples did I remain with?

Let's think step by step.AWS ofrece un sólido conjunto de servicios para crear aplicaciones impulsadas por LLM sin necesidad de entrenar modelos desde cero. En DoiT te ayudamos a sacarle el máximo provecho a estos servicios y herramientas.

Amazon Bedrock

Amazon Bedrock es un servicio totalmente administrado pensado para simplificar la creación y el escalamiento de aplicaciones de IA generativa. Lo hace ofreciendo acceso a una variedad de foundation models de alto desempeño de empresas líderes en IA como AI21 Labs, Anthropic, Cohere, Meta, Mistral AI, Stability AI y Amazon, todos a través de una sola API.

- Elección y personalización del modelo: Los usuarios pueden elegir entre una variedad de foundation models para encontrar el que mejor se adapte a sus necesidades. Además, estos modelos pueden personalizarse de forma privada con datos propios mediante fine-tuning y Retrieval Augmented Generation (RAG), lo que abre la puerta a aplicaciones personalizadas y específicas para cada dominio.

- Experiencia serverless: Amazon Bedrock cuenta con una arquitectura serverless, por lo que los usuarios no tienen que gestionar infraestructura. Esto facilita integrar e implementar capacidades de IA generativa en las aplicaciones usando las herramientas de AWS ya conocidas.

- Seguridad y privacidad: Crear aplicaciones de IA generativa con Bedrock garantiza seguridad, privacidad y apego a los principios de IA responsable. Los usuarios pueden mantener sus datos privados y seguros mientras aprovechan estas capacidades avanzadas de IA.

- Amazon Bedrock Knowledge Base: Permite a los arquitectos potenciar las aplicaciones de IA generativa habilitando el uso de técnicas de retrieval augmented generation (RAG). Posibilita centralizar diversas fuentes de datos en un repositorio único, dándoles a los foundation models y a los agentes acceso a información actualizada y propietaria para entregar respuestas más contextuales y precisas. Esta funcionalidad totalmente administrada simplifica el flujo de RAG, desde la ingesta de datos hasta la augmentación del prompt, sin necesidad de integraciones personalizadas con fuentes de datos. Soporta integración fluida con bases de datos vectoriales populares como Amazon OpenSearch Serverless, Pinecone y Redis Enterprise Cloud, lo que facilita que las organizaciones implementen RAG sin las complicaciones de gestionar la infraestructura subyacente.

- Agents for Amazon Bedrock: Permite a los arquitectos crear y configurar agentes autónomos dentro de las aplicaciones, ayudando a los usuarios finales a completar acciones a partir de los datos de la organización y de la entrada del usuario. Estos agentes orquestan interacciones entre foundation models, fuentes de datos, aplicaciones de software y conversaciones con el usuario. Llaman APIs de forma automática y pueden invocar bases de conocimiento para enriquecer la información de las acciones, lo que reduce de forma significativa el esfuerzo de desarrollo al hacerse cargo de tareas como prompt engineering, manejo de memoria, monitoreo y cifrado.

Sagemaker Jumpstart

Amazon SageMaker JumpStart es un hub de machine learning dentro de Amazon SageMaker que ofrece acceso a modelos preentrenados y plantillas de soluciones para una amplia variedad de tipos de problemas. Permite entrenamiento incremental, ajuste antes del despliegue y simplifica el proceso de desplegar, hacer fine-tuning y evaluar modelos preentrenados de hubs populares. Esto acelera el camino del machine learning al ofrecer soluciones listas y ejemplos para aprender y desarrollar aplicaciones.

Amazon CodeWhisperer

AWS CodeWhisperer es un generador de código impulsado por machine learning que entrega recomendaciones y sugerencias de código en tiempo real, desde comentarios de una sola línea hasta funciones completas, a partir de tus entradas actuales y previas.

Amazon Q

Amazon Q es un asistente impulsado por IA generativa pensado para responder a las necesidades del negocio, y los usuarios pueden adaptarlo a aplicaciones específicas. Facilita la creación, extensión, operación y comprensión de aplicaciones y workloads en AWS, lo que lo convierte en una herramienta versátil para distintas tareas dentro de la organización.