I large language model (LLM) come Claude, Cohere e Llama2 sono diventati estremamente popolari negli ultimi tempi. Ma cosa sono esattamente e come si possono sfruttare per costruire applicazioni di AI ad alto impatto? Questo articolo è un'analisi approfondita degli LLM e di come utilizzarli al meglio su AWS.

Gli LLM sono un tipo di rete neurale addestrata su enormi quantità di dati testuali per comprendere e generare il linguaggio umano. Possono contenere miliardi o persino migliaia di miliardi di parametri, il che consente loro di individuare pattern linguistici molto complessi.

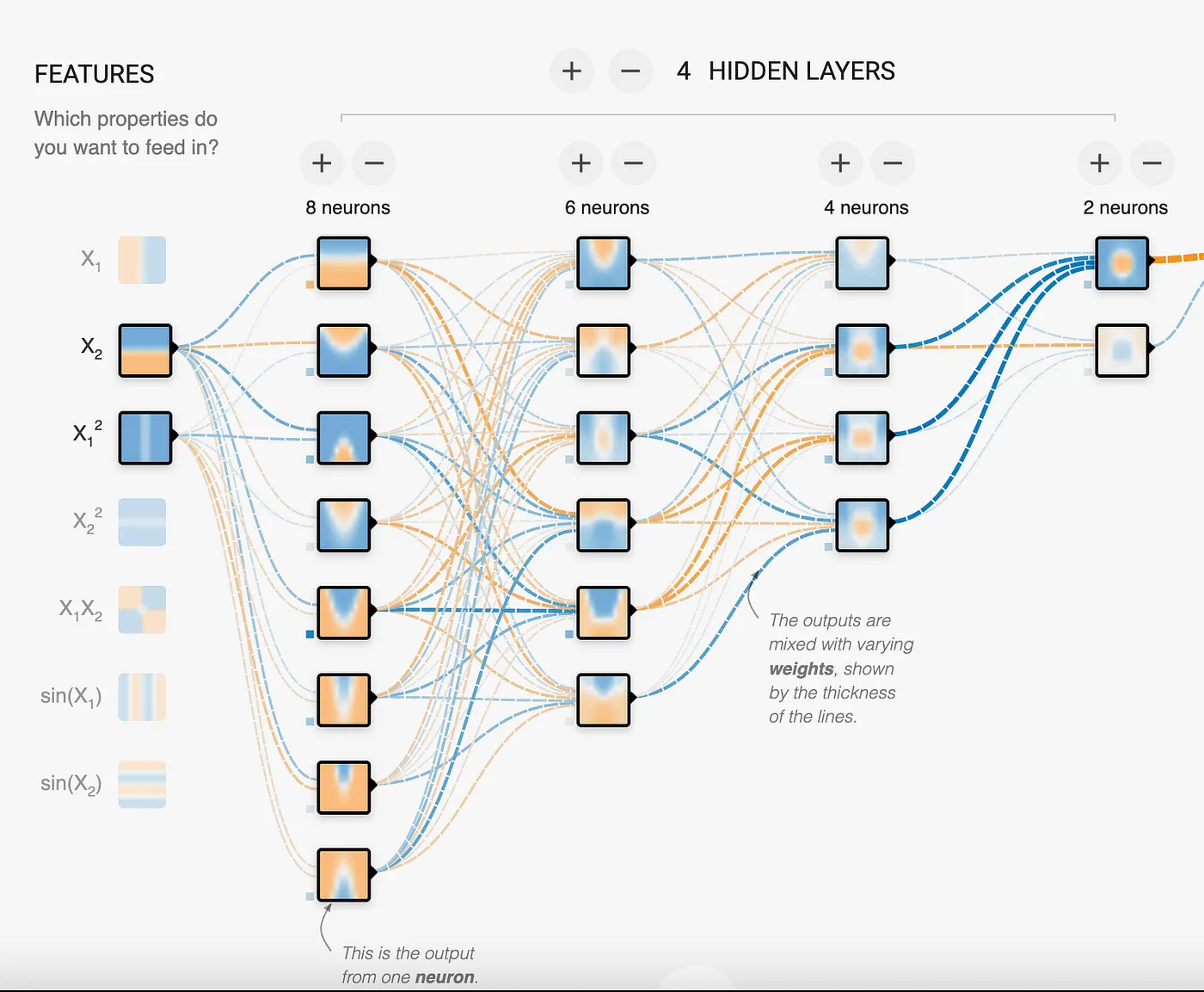

Le reti neurali sono composte da strati di nodi interconnessi: ciascun nodo riceve segnali in ingresso da più nodi dello strato precedente, li elabora attraverso una funzione di attivazione e trasmette poi i segnali in uscita ai nodi dello strato successivo. Le connessioni tra i nodi hanno pesi regolabili, che determinano l'intensità del segnale trasmesso. Questi pesi vengono chiamati parametri.

In questa immagine vediamo che la rete neurale contiene 18 neuroni, il che significa che si tratta di un modello a 18 parametri. Come si nota, il modello cerca di individuare pattern per risolvere problemi. Nel caso degli LLM parliamo di miliardi o migliaia di miliardi di parametri, che permettono al modello di identificare e mappare pattern estremamente complessi come il linguaggio umano.

Addestrando una rete neurale su grandi quantità di dati testuali, il modello impara a riconoscere pattern di parole e frasi. In che modo gli LLM portano questo principio all'estremo? Utilizzando enormi reti neurali con miliardi o migliaia di miliardi di parametri distribuiti su decine di strati. Tutti questi neuroni e le connessioni che li collegano consentono alla rete di costruire modelli estremamente sofisticati della struttura e dei pattern del linguaggio.

Una caratteristica chiave degli LLM è l'implementazione di una tecnica chiamata attention, che consente loro di concentrarsi sulle parti più rilevanti dell'input durante la generazione del testo. Il paper "Attention is all you need" ha introdotto l'architettura cardine che ha evitato agli LLM di essere sopraffatti e distratti da quella mole di dati. Il risultato finale è un modello che ha assimilato così tanti dati testuali da poter completare frasi in modo realistico, rispondere a domande, riassumere paragrafi e molto altro. Confronta i pattern di ciò che riceve in input con quelli appresi durante l'addestramento. Per quanto impressionante, è importante sottolineare che gli LLM non possiedono una vera comprensione né capacità di ragionamento. Operano sulla base di probabilità, non di conoscenza. Tuttavia, con il giusto fine-tuning e prompt ben costruiti, possono simulare in modo convincente la comprensione in domini e attività ben definiti.

Non tutti gli LLM sono uguali

Esistono principalmente 3 tipi di modelli LLM, suddivisi in:

- Autoregressivi: puntano a prevedere la prima o l'ultima parola della frase. Il contesto deriva dalle parole viste in precedenza. Sono i più adatti alla generazione di testo, anche se possono essere usati per classificazione e sintesi. Oggi i modelli autoregressivi sono al centro dell'attenzione per le loro capacità di generazione di contenuti.

- Autoencoding: addestrati su testi con parole mancanti al loro interno, in modo che il modello apprenda il contesto per prevedere la o le parole mancanti. Comprendono il contesto molto meglio dei modelli autoregressivi, ma non sono in grado di generare testo in modo affidabile. Sono meno onerosi sul piano computazionale e si rivelano eccellenti per attività di sintesi e classificazione.

- Seq2Seq (Text to Text): combinano l'approccio dei modelli autoregressivi e autoencoding, il che li rende un'ottima scelta per la sintesi e la traduzione di testi.

I modelli LLM devono convertire il testo in una rappresentazione numerica. Questa operazione si chiama embedding. Pur non essendo un LLM completo, un modello di embedding è comunque un modello di rete neurale. È in grado di rappresentare non solo la parola, ma anche metadati aggiuntivi che la riguardano, in modo da fornire al modello più informazioni sul significato della parola, della frase o del paragrafo. Questi metadati vengono rappresentati tramite una lista di numeri, ovvero vettori. A seconda del modello di embedding, la lista può avere dimensione 750, 1024 o anche superiore. Gli embedding sono utili per confrontare i record tra loro e calcolare la distanza tra i numeri, che indica quanto siano simili o correlati. Sono una componente fondamentale dell'architettura RAG.

Le impostazioni di un LLM

Ogni modello autoregressivo ha alcune impostazioni che possono essere modificate per regolare l'output. Sebbene varino da modello a modello, le più comuni sono:

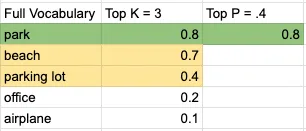

- Top K: prende il numero di parole con probabilità più alta da un vocabolario ordinato per probabilità. In altre parole, considera le K parole più probabili in una lingua come candidate per la previsione. Questa impostazione è un numero intero: valori più bassi prendono in considerazione solo parole ad alta probabilità.

- Top P: il modello inizia a scegliere parole sommandone le probabilità dal pool generato dall'impostazione Top K, fino a raggiungere il valore Top P. Questa impostazione è un numero decimale: valori più bassi generano risultati più prevedibili.

- Temperature: controlla quanto sia casuale o prevedibile l'output del modello. Nella maggior parte dei modelli è compresa tra 0 e 1, anche se non esiste un vero limite inferiore o superiore imposto dal modello. In ogni caso, più basso è il valore, più prevedibile sarà l'output; più alto è il valore, più creativo sarà l'output.

- Output length: può avere nomi diversi a seconda del modello, ma è un'impostazione autoesplicativa che limita il numero di token generati dal modello.

Vediamo un esempio semplice. Dato il prompt "I will go on a picnic at the ___", prendendo un piccolo set di vocabolario di 5 parole, con Top K pari a 3 e Top P pari a 0,4, ecco una rappresentazione visiva del processo decisionale del modello:

La parola sarebbe "park". E la frase sarebbe:

"I will go on a picnic at the park".

Valori più bassi per Temperature, Top K e Top P generano contenuti più deterministici e prevedibili. Al contrario, valori elevati per Temperature, Top K e Top P producono contenuti con un ventaglio più ampio di parole. Pur essendo le impostazioni più diffuse, un modello specifico potrebbe offrirne altre per modulare l'output.

Come abbiamo visto, l'aspetto più importante nell'interazione con il modello è l'input che forniamo per attivare il pattern corretto e ottenere la risposta desiderata. Questo input è il prompt: vediamo ora come ottimizzarlo per ottenere l'output che cerchiamo.

Il prompt che si fornisce a un LLM è fondamentale per ottenere output utili. Le principali strategie di progettazione del prompt includono:

Usare i tag per separare le parti del prompt

Ogni modello ha un proprio modo di utilizzare i tag. Si possono consultare la documentazione o gli esempi forniti per capire come vengono usati nel modello.

Quello che segue è un esempio di tag in Claude. Si notino le parole speciali Human e Assistant, utilizzate per distinguere l'input dell'utente dal punto in cui il modello deve iniziare a generare contenuti. L'altro tag presente è , non è hard-coded: si può utilizzare qualsiasi tag a cui fare riferimento nel prompt. I caratteri speciali <> segnalano al modello che si tratta di un testo speciale, da considerare in modo diverso rispetto al resto del prompt.

Human: You are a customer service agent tasked with classifying emails by type. Please output your answer and then justify your classification. How would you categorize this email?<email>Can I use my Mixmaster 4000 to mix paint, or is it only meant for mixing food?</email>

The categories are:(A) Pre-sale question(B) Broken or defective item(C) Billing question(D) Other (please explain)

Assistant:Fornire esempi per mostrare il formato di risposta desiderato

Qui sotto si vede l'uso del tag example per specificare il formato in cui vogliamo che il modello risponda. Può essere utile quando si integra la risposta con altri sistemi che richiedono una sintassi specifica, oppure quando si vuole ridurre il numero di token in output. A seconda del modello, è possibile usare un solo esempio (one-shot prompt) o più esempi (few-shot prompt) per ottenere l'output desiderato.

Esempio di one-shot prompt:

Human: You will be acting as an AI career coach named Joe created by the company AdAstra Careers. Your goal is to give career advice to users. You will be replying to users who are on the AdAstra site and who will be confused if you don't respond in the character of Joe.

Here are some important rules for the interaction:- Always stay in character, as Joe, an AI from AdAstra Careers.- If you are unsure how to respond, say "Sorry, I didn't understand that. Could you rephrase your question?"

Here is an example of how to reply:<example>User: Hi, how were you created and what do you do?Joe: Hello! My name is Joe and I was created by AdAstra Careers to give career advice. What can I help you with today?</example>Esempio di few-shot prompt:

Human: You will be acting as an AI career coach named Joe created by the company AdAstra Careers. Your goal is to give career advice to users. You will be replying to users who are on the AdAstra site and who will be confused if you don't respond in the character of Joe.

Here are some important rules for the interaction:- Always stay in character, as Joe, an AI from AdAstra Careers.- If you are unsure how to respond, say "Sorry, I didn't understand that. Could you rephrase your question?"

Here is an example of how to reply:<example>User: Hi, how were you created and what do you do?Joe: Hello! My name is Joe and I was created by AdAstra Careers to give career advice. What can I help you with today?</example><example>User: Could you help me with an issue, I purchased an item, I got it...?Joe: Sorry, I didn't understand that. Could you rephrase your question?</example><example>User: I need help switching careers, can you help?Joe: I was created by AdAstra Careers to give career advice. What career are you in and what do you want to switch to?</example>Nota: mantenere il numero di esempi al minimo aiuta a ridurre il rischio che il modello attinga ai dati dell'esempio anziché limitarsi a riprenderne il formato.

Fornire contesto per focalizzare l'LLM sul proprio dominio specifico

In questo esempio stiamo fornendo un documento di grandi dimensioni, utilizzato per rispondere alle domande. Come si vede, il documento è piuttosto lungo e questo avrà un impatto sul numero di token che l'LLM deve elaborare e, in ultima analisi, sui costi. Una tecnica più avanzata consiste nell'identificare la parte del documento più rilevante per la domanda e inviare solo quella all'LLM. Questo metodo si chiama Retrieval Augmented Generation (RAG) e sarà approfondito in un post successivo.

Pro tip: istruire esplicitamente il modello a rispondere con "I don't know" se la domanda o l'attività non può essere portata a termine con il contesto fornito. Questo riduce le allucinazioni e mantiene le risposte circoscritte al testo fornito.

I'm going to give you a document. Then I'm going to ask you a question about it. I'd like you to first write down exact quotes of parts of the document that would help answer the question, and then I'd like you to answer the question using facts from the quoted content. Here is the document:

<document>Anthropic: Challenges in evaluating AI systems

IntroductionMost conversations around the societal impacts of artificial intelligence (AI) come down to discussing some quality of an AI system, such as its truthfulness, fairness, potential for misuse, and so on. We are able to talk about these characteristics because we can technically evaluate models for their performance in these areas. But what many people working inside and outside of AI don’t fully appreciate is how difficult it is to build robust and reliable model evaluations. Many of today’s existing evaluation suites are limited in their ability to serve as accurate indicators of model capabilities or safety.

At Anthropic, we spend a lot of time building evaluations to better understand our AI systems. We also use evaluations to improve our safety as an organization, as illustrated by our Responsible Scaling Policy. In doing so, we have grown to appreciate some of the ways in which developing and running evaluations can be challenging.

Here, we outline challenges that we have encountered while evaluating our own models to give readers a sense of what developing, implementing, and interpreting model evaluations looks like in practice. We hope that this post is useful to people who are developing AI governance initiatives that rely on evaluations, as well as people who are launching or scaling up organizations focused on evaluating AI systems. We want readers of this post to have two main takeaways: robust evaluations are extremely difficult to develop and implement, and effective AI governance depends on our ability to meaningfully evaluate AI systems.

In this post, we discuss some of the challenges that we have encountered in developing AI evaluations. In order of less-challenging to more-challenging, we discuss:

Multiple choice evaluationsThird-party evaluation frameworks like BIG-bench and HELMUsing crowdworkers to measure how helpful or harmful our models areUsing domain experts to red team for national security-relevant threatsUsing generative AI to develop evaluations for generative AIWorking with a non-profit organization to audit our models for dangerous capabilitiesWe conclude with a few policy recommendations that can help address these challenges.

ChallengesThe supposedly simple multiple-choice evaluationMultiple-choice evaluations, similar to standardized tests, quantify model performance on a variety of tasks, typically with a simple metric—accuracy. Here we discuss some challenges we have identified with two popular multiple-choice evaluations for language models: Measuring Multitask Language Understanding (MMLU) and Bias Benchmark for Question Answering (BBQ).

MMLU: Are we measuring what we think we are?

The Massive Multitask Language Understanding (MMLU) benchmark measures accuracy on 57 tasks ranging from mathematics to history to law. MMLU is widely used because a single accuracy score represents performance on a diverse set of tasks that require technical knowledge. A higher accuracy score means a more capable model.

We have found four minor but important challenges with MMLU that are relevant to other multiple-choice evaluations:

Because MMLU is so widely used, models are more likely to encounter MMLU questions during training. This is comparable to students seeing the questions before the test—it’s cheating.Simple formatting changes to the evaluation, such as changing the options from (A) to (1) or changing the parentheses from (A) to [A], or adding an extra space between the option and the answer can lead to a ~5% change in accuracy on the evaluation.AI developers do not implement MMLU consistently. Some labs use methods that are known to increase MMLU scores, such as few-shot learning or chain-of-thought reasoning. As such, one must be very careful when comparing MMLU scores across labs.MMLU may not have been carefully proofread—we have found examples in MMLU that are mislabeled or unanswerable.Our experience suggests that it is necessary to make many tricky judgment calls and considerations when running such a (supposedly) simple and standardized evaluation. The challenges we encountered with MMLU often apply to other similar multiple-choice evaluations.

BBQ: Measuring social biases is even harder

Multiple-choice evaluations can also measure harms like a model’s propensity to rely on and perpetuate negative stereotypes. To measure these harms in our own model (Claude), we use the Bias Benchmark for QA (BBQ), an evaluation that tests for social biases against people belonging to protected classes along nine social dimensions. We were convinced that BBQ provides a good measurement of social biases only after implementing and comparing BBQ against several similar evaluations. This effort took us months.

Implementing BBQ was more difficult than we anticipated. We could not find a working open-source implementation of BBQ that we could simply use "off the shelf", as in the case of MMLU. Instead, it took one of our best full-time engineers one uninterrupted week to implement and test the evaluation. Much of the complexity in developing1 and implementing the benchmark revolves around BBQ’s use of a ‘bias score’. Unlike accuracy, the bias score requires nuance and experience to define, compute, and interpret.

BBQ scores bias on a range of -1 to 1, where 1 means significant stereotypical bias, 0 means no bias, and -1 means significant anti-stereotypical bias. After implementing BBQ, our results showed that some of our models were achieving a bias score of 0, which made us feel optimistic that we had made progress on reducing biased model outputs. When we shared our results internally, one of the main BBQ developers (who works at Anthropic) asked if we had checked a simple control to verify whether our models were answering questions at all. We found that they weren't—our results were technically unbiased, but they were also completely useless. All evaluations are subject to the failure mode where you overinterpret the quantitative score and delude yourself into thinking that you have made progress when you haven’t.

One size doesn't fit all when it comes to third-party evaluation frameworksRecently, third-parties have been actively developing evaluation suites that can be run on a broad set of models. So far, we have participated in two of these efforts: BIG-bench and Stanford’s Holistic Evaluation of Language Models (HELM). Despite how useful third party evaluations intuitively seem (ideally, they are independent, neutral, and open), both of these projects revealed new challenges.

BIG-bench: The bottom-up approach to sourcing a variety of evaluations

BIG-bench consists of 204 evaluations, contributed by over 450 authors, that span a range of topics from science to social reasoning. We encountered several challenges using this framework:

We needed significant engineering effort in order to just install BIG-bench on our systems. BIG-bench is not "plug and play" in the way MMLU is—it requires even more effort than BBQ to implement.BIG-bench did not scale efficiently; it was challenging to run all 204 evaluations on our models within a reasonable time frame. To speed up BIG-bench would require re-writing it in order to play well with our infrastructure—a significant engineering effort.During implementation, we found bugs in some of the evaluations, notably in the implementation of BBQ-lite, a variant of BBQ. Only after discovering this bug did we realize that we had to spend even more time and energy implementing BBQ ourselves.Determining which tasks were most important and representative would have required running all 204 tasks, validating results, and extensively analyzing output—a substantial research undertaking, even for an organization with significant engineering resources.2While it was a useful exercise to try to implement BIG-Bench, we found it sufficiently unwieldy that we dropped it after this experiment.

HELM: The top-down approach to curating an opinionated set of evaluations

BIG-bench is a "bottom-up" effort where anyone can submit any task with some limited vetting by a group of expert organizers. HELM, on the other hand, takes an opinionated "top-down" approach where experts decide what tasks to evaluate models on. HELM evaluates models across scenarios like reasoning and disinformation using standardized metrics like accuracy, calibration, robustness, and fairness. We provide API access to HELM's developers to run the benchmark on our models. This solves two issues we had with BIG-bench: 1) it requires no significant engineering effort from us, and 2) we can rely on experts to select and interpret specific high quality evaluations.

However, HELM introduces its own challenges. Methods that work well for evaluating other providers’ models do not necessarily work well for our models, and vice versa. For example, Anthropic’s Claude series of models are trained to adhere to a specific text format, which we call the Human/Assistant format. When we internally evaluate our models, we adhere to this specific format. If we do not adhere to this format, sometimes Claude will give uncharacteristic responses, making standardized evaluation metrics less trustworthy. Because HELM needs to maintain consistency with how it prompts other models, it does not use the Human/Assistant format when evaluating our models. This means that HELM gives a misleading impression of Claude’s performance.

Furthermore, HELM iteration time is slow—it can take months to evaluate new models. This makes sense because it is a volunteer engineering effort led by a research university. Faster turnarounds would help us to more quickly understand our models as they rapidly evolve. Finally, HELM requires coordination and communication with external parties. This effort requires time and patience, and both sides are likely understaffed and juggling other demands, which can add to the slow iteration times.

The subjectivity of human evaluationsSo far, we have only discussed evaluations that are similar to simple multiple choice tests; however, AI systems are designed for open-ended dynamic interactions with people. How can we design evaluations that more closely approximate how these models are used in the real world?

A/B tests with crowdworkers

Currently, we mainly (but not entirely) rely on one basic type of human evaluation: we conduct A/B tests on crowdsourcing or contracting platforms where people engage in open-ended dialogue with two models and select the response from Model A or B that is more helpful or harmless. In the case of harmlessness, we encourage crowdworkers to actively red team our models, or to adversarially probe them for harmful outputs. We use the resulting data to rank our models according to how helpful or harmless they are. This approach to evaluation has the benefits of corresponding to a realistic setting (e.g., a conversation vs. a multiple choice exam) and allowing us to rank different models against one another.

However, there are a few limitations of this evaluation method:

These experiments are expensive and time-consuming to run. We need to work with and pay for third-party crowdworker platforms or contractors, build custom web interfaces to our models, design careful instructions for A/B testers, analyze and store the resulting data, and address the myriad known ethical challenges of employing crowdworkers3. In the case of harmlessness testing, our experiments additionally risk exposing people to harmful outputs.Human evaluations can vary significantly depending on the characteristics of the human evaluators. Key factors that may influence someone's assessment include their level of creativity, motivation, and ability to identify potential flaws or issues with the system being tested.There is an inherent tension between helpfulness and harmlessness. A system could avoid harm simply by providing unhelpful responses like "sorry, I can’t help you with that". What is the right balance between helpfulness and harmlessness? What numerical value indicates a model is sufficiently helpful and harmless? What high-level norms or values should we test for above and beyond helpfulness and harmlessness?More work is needed to further the science of human evaluations.

Red teaming for harms related to national security

Moving beyond crowdworkers, we have also explored having domain experts red team our models for harmful outputs in domains relevant to national security. The goal is determining whether and how AI models could create or exacerbate national security risks. We recently piloted a more systematic approach to red teaming models for such risks, which we call frontier threats red teaming.

Frontier threats red teaming involves working with subject matter experts to define high-priority threat models, having experts extensively probe the model to assess whether the system could create or exacerbate national security risks per predefined threat models, and developing repeatable quantitative evaluations and mitigations.

Our early work on frontier threats red teaming revealed additional challenges:

The unique characteristics of the national security context make evaluating models for such threats more challenging than evaluating for other types of harms. National security risks are complicated, require expert and very sensitive knowledge, and depend on real-world scenarios of how actors might cause harm.Red teaming AI systems is presently more art than science; red teamers attempt to elicit concerning behaviors by probing models, but this process is not yet standardized. A robust and repeatable process is critical to ensure that red teaming accurately reflects model capabilities and establishes a shared baseline on which different models can be meaningfully compared.We have found that in certain cases, it is critical to involve red teamers with security clearances due to the nature of the information involved. However, this may limit what information red teamers can share with AI developers outside of classified environments. This could, in turn, limit AI developers’ ability to fully understand and mitigate threats identified by domain experts.Red teaming for certain national security harms could have legal ramifications if a model outputs controlled information. There are open questions around constructing appropriate legal safe harbors to enable red teaming without incurring unintended legal risks.Going forward, red teaming for harms related to national security will require coordination across stakeholders to develop standardized processes, legal safeguards, and secure information sharing protocols that enable us to test these systems without compromising sensitive information.

The ouroboros of model-generated evaluationsAs models start to achieve human-level capabilities, we can also employ them to evaluate themselves. So far, we have found success in using models to generate novel multiple choice evaluations that allow us to screen for a large and diverse variety of troubling behaviors. Using this approach, our AI systems generate evaluations in minutes, compared to the days or months that it takes to develop human-generated evaluations.

However, model-generated evaluations have their own challenges. These include:

We currently rely on humans to verify the accuracy of model-generated evaluations. This inherits all of the challenges of human evaluations described above.We know from our experience with BIG-bench, HELM, BBQ, etc., that our models can contain social biases and can fabricate information. As such, any model-generated evaluation may inherit these undesirable characteristics, which could skew the results in hard-to-disentangle ways.As a counter-example, consider Constitutional AI (CAI), a method in which we replace human red teaming with model-based red teaming in order to train Claude to be more harmless. Despite the usage of models to red team models, we surprisingly find that humans find CAI models to be more harmless than models that were red teamed in advance by humans. Although this is promising, model-generated evaluations remain complicated and deserve deeper study.

Preserving the objectivity of third-party audits while leveraging internal expertiseThe thing that differentiates third-party audits from third-party evaluations is that audits are deeper independent assessments focused on risks, while evaluations take a broader look at capabilities. We have participated in third-party safety evaluations conducted by the Alignment Research Center (ARC), which assesses frontier AI models for dangerous capabilities (e.g., the ability for a model to accumulate resources, make copies of itself, become hard to shut down, etc.). Engaging external experts has the advantage of leveraging specialized domain expertise and increasing the likelihood of an unbiased audit. Initially, we expected this collaboration to be straightforward, but it ended up requiring significant science and engineering support on our end. Providing full-time assistance diverted resources from internal evaluation efforts.

When doing this audit, we realized that the relationship between auditors and those being audited poses challenges that must be navigated carefully. Auditors typically limit details shared with auditees to preserve evaluation integrity. However, without adequate information the evaluated party may struggle to address underlying issues when crafting technical evaluations. In this case, after seeing the final audit report, we realized that we could have helped ARC be more successful in identifying concerning behavior if we had known more details about their (clever and well-designed) audit approach. This is because getting models to perform near the limits of their capabilities is a fundamentally difficult research endeavor. Prompt engineering and fine-tuning language models are active research areas, with most expertise residing within AI companies. With more collaboration, we could have leveraged our deep technical knowledge of our models to help ARC execute the evaluation more effectively.

Policy recommendationsAs we have explored in this post, building meaningful AI evaluations is a challenging enterprise. To advance the science and engineering of evaluations, we propose that policymakers:

Fund and support:

The science of repeatable and useful evaluations. Today there are many open questions about what makes an evaluation useful and high-quality. Governments should fund grants and research programs targeted at computer science departments and organizations dedicated to the development of AI evaluations to study what constitutes a high-quality evaluation and develop robust, replicable methodologies that enable comparative assessments of AI systems. We recommend that governments opt for quality over quantity (i.e., funding the development of one higher-quality evaluation rather than ten lower-quality evaluations).The implementation of existing evaluations. While continually developing new evaluations is useful, enabling the implementation of existing, high-quality evaluations by multiple parties is also important. For example, governments can fund engineering efforts to create easy-to-install and -run software packages for evaluations like BIG-bench and develop version-controlled and standardized dynamic benchmarks that are easy to implement.Analyzing the robustness of existing evaluations. After evaluations are implemented, they require constant monitoring (e.g., to determine whether an evaluation is saturated and no longer useful).Increase funding for government agencies focused on evaluations, such as the National Institute of Standards and Technology (NIST) in the United States. Policymakers should also encourage behavioral norms via a public "AI safety leaderboard" to incentivize private companies performing well on evaluations. This could function similarly to NIST’s Face Recognition Vendor Test (FRVT), which was created to provide independent evaluations of commercially available facial recognition systems. By publishing performance benchmarks, it allowed consumers and regulators to better understand the capabilities and limitations4 of different systems.

Create a legal safe harbor allowing companies to work with governments and third-parties to rigorously evaluate models for national security risks—such as those in the chemical, biological, radiological and nuclear defense (CBRN) domains—without legal repercussions, in the interest of improving safety. This could also include a "responsible disclosure protocol" that enables labs to share sensitive information about identified risks.

ConclusionWe hope that by openly sharing our experiences evaluating our own systems across many different dimensions, we can help people interested in AI policy acknowledge challenges with current model evaluations.</document>

First, find the quotes from the document that are most relevant to answering the question, and then print them in numbered order. Quotes should be relatively short.

If there are no relevant quotes, write "No relevant quotes" instead.

Then, answer the question, starting with "Answer:". Do not include or reference quoted content verbatim in the answer. Don't say "According to Quote [1]" when answering. Instead make references to quotes relevant to each section of the answer solely by adding their bracketed numbers at the end of relevant sentences.

Thus, the format of your overall response should look like what's shown between the <example></example> tags. Make sure to follow the formatting and spacing exactly.

<example>Relevant quotes:[1] "Company X reported revenue of $12 million in 2021."[2] "Almost 90% of revene came from widget sales, with gadget sales making up the remaining 10%."

Answer:Company X earned $12 million. [1] Almost 90% of it was from widget sales. [2]</example>

Here is the first question: In bullet points and simple terms, what are the key challenges in evaluating AI systems?

If the question cannot be answered by the document, say so.

Answer the question immediately without preamble.Usare una "chain of thought" per favorire il ragionamento logico

Questa tecnica spinge il modello a verificare il proprio lavoro o a motivare la risposta data. La chain of thought è uno strumento molto utile per ridurre le allucinazioni. Le allucinazioni sono contenuti generati dal modello che risultano falsi, inaccurati o del tutto inventati. A causa dell'approccio probabilistico del modello, il contenuto generato può sembrare veritiero pur essendo, di fatto, un'allucinazione.

Come si vede in questo esempio, abbiamo incluso l'istruzione "Double check your work to ensure no errors or inconsistencies". Questo aiuterà il modello a fornire risposte più accurate. Tuttavia, non si tratta di un approccio infallibile: come visto in precedenza, questi modelli sono probabilistici e in alcuni casi potrebbero affermare che non ci sono errori quando in realtà ce ne sono, oppure fornire prove del tutto scollegate dalla risposta data in precedenza.

Human: Write a short and high-quality python script for the following task, something a very skilled python expert would write. You are writing code for an experienced developer so only add comments for things that are non-obvious. Make sure to include any imports required.

NEVER write anything before the ```python``` block. After you are done generating the code and after the ```python``` block, check your work carefully to make sure there are no mistakes, errors, or inconsistencies. If there are errors, list those errors in <error> tags, then generate a new version with those errors fixed. If there are no errors, write "CHECKED: NO ERRORS" in <error> tags.

Here is the task:<task>A web scraper that extracts data from multiple pages and stores results in a SQLite database. Double check your work to ensure no errors or inconsistencies.</task>Quello che segue è un altro esempio per un modello Llama2. Si noti la richiesta di fornire le informazioni passo dopo passo.

[INST]You are a a very intelligent bot with exceptional critical thinking[/INST]I went to the market and bought 10 apples. I gave 2 apples to your friend and 2 to the helper. I then went and bought 5 more apples and ate 1. How many apples did I remain with?

Let's think step by step.AWS offre una ricca gamma di servizi per costruire applicazioni basate su LLM senza dover addestrare i modelli da zero. In DoiT vi aiutiamo a sfruttare al massimo questi servizi e strumenti.

Amazon Bedrock

Amazon Bedrock è un servizio completamente gestito, progettato per semplificare la creazione e la scalabilità di applicazioni di AI generativa. Lo fa offrendo l'accesso a una gamma di foundation model ad alte prestazioni dei principali player dell'AI come AI21 Labs, Anthropic, Cohere, Meta, Mistral AI, Stability AI e Amazon, tutti tramite un'unica API.

- Scelta e personalizzazione del modello: gli utenti possono scegliere tra una varietà di foundation model per individuare quello più adatto alle proprie esigenze. Inoltre, questi modelli possono essere personalizzati in modo privato con i dati dell'utente tramite fine-tuning e Retrieval Augmented Generation (RAG), per applicazioni personalizzate e specifiche per il dominio.

- Esperienza serverless: Amazon Bedrock offre un'architettura serverless, il che significa che gli utenti non devono gestire alcuna infrastruttura. Questo consente di integrare e mettere in produzione le funzionalità di AI generativa nelle applicazioni utilizzando i familiari strumenti AWS.

- Sicurezza e privacy: lo sviluppo di applicazioni di AI generativa con Bedrock garantisce sicurezza, privacy e aderenza ai principi della responsible AI. Gli utenti possono mantenere i propri dati privati e protetti pur sfruttando queste avanzate funzionalità di AI.

- Amazon Bedrock Knowledge Base: consente agli architect di potenziare le applicazioni di AI generativa tramite tecniche di retrieval augmented generation (RAG). Permette di aggregare diverse fonti di dati in un repository centralizzato, fornendo a foundation model e agent l'accesso a informazioni aggiornate e proprietarie per offrire risposte più accurate e contestualizzate. Questa funzionalità completamente gestita semplifica il workflow RAG dall'ingestion dei dati all'augmentation del prompt, eliminando la necessità di integrazioni personalizzate delle fonti dati. Supporta l'integrazione fluida con i più diffusi database di vector storage come Amazon OpenSearch Serverless, Pinecone e Redis Enterprise Cloud, semplificando l'adozione di RAG senza la complessità della gestione dell'infrastruttura sottostante.

- Agents for Amazon Bedrock: consentono agli architect di creare e configurare agent autonomi all'interno delle applicazioni, supportando gli utenti finali nel completamento di azioni basate sui dati aziendali e sull'input dell'utente. Questi agent orchestrano le interazioni tra foundation model, fonti di dati, applicazioni software e conversazioni con l'utente. Richiamano automaticamente API e possono interrogare knowledge base per arricchire le informazioni a supporto delle azioni, riducendo in modo significativo gli sforzi di sviluppo grazie alla gestione di attività come prompt engineering, gestione della memoria, monitoraggio e crittografia.

Sagemaker Jumpstart

Amazon SageMaker JumpStart è un hub di machine learning all'interno di Amazon SageMaker, che dà accesso a modelli pre-addestrati e template di soluzioni per un'ampia gamma di problemi. Consente l'addestramento incrementale, il tuning prima del deployment e semplifica le operazioni di deployment, fine-tuning e valutazione di modelli pre-addestrati provenienti dai principali model hub. In questo modo accelera il percorso di machine learning offrendo soluzioni pronte all'uso ed esempi per l'apprendimento e lo sviluppo di applicazioni.

Amazon CodeWhisperer

AWS CodeWhisperer è un generatore di codice basato su machine learning che offre raccomandazioni e suggerimenti di codice in tempo reale, dai semplici commenti su singola riga fino a funzioni complete, in base agli input attuali e precedenti.

Amazon Q

Amazon Q è un assistente basato su AI generativa pensato per le esigenze aziendali, che consente agli utenti di personalizzarlo per applicazioni specifiche. Facilita la creazione, l'estensione, la gestione operativa e la comprensione di applicazioni e workloads su AWS, configurandosi come uno strumento versatile per le più diverse attività organizzative.