Os large language models (LLMs) como Claude, Cohere e Llama2 viraram febre nos últimos tempos. Mas o que são, afinal, e como você pode usá-los para criar aplicações de IA que realmente entregam valor? Este artigo é um mergulho a fundo nos LLMs e em como tirar o máximo deles na AWS.

Os LLMs são um tipo de Rede Neural treinada com volumes massivos de dados textuais para entender e gerar linguagem humana. Eles podem ter bilhões ou até trilhões de parâmetros, o que permite identificar padrões muito sutis na linguagem.

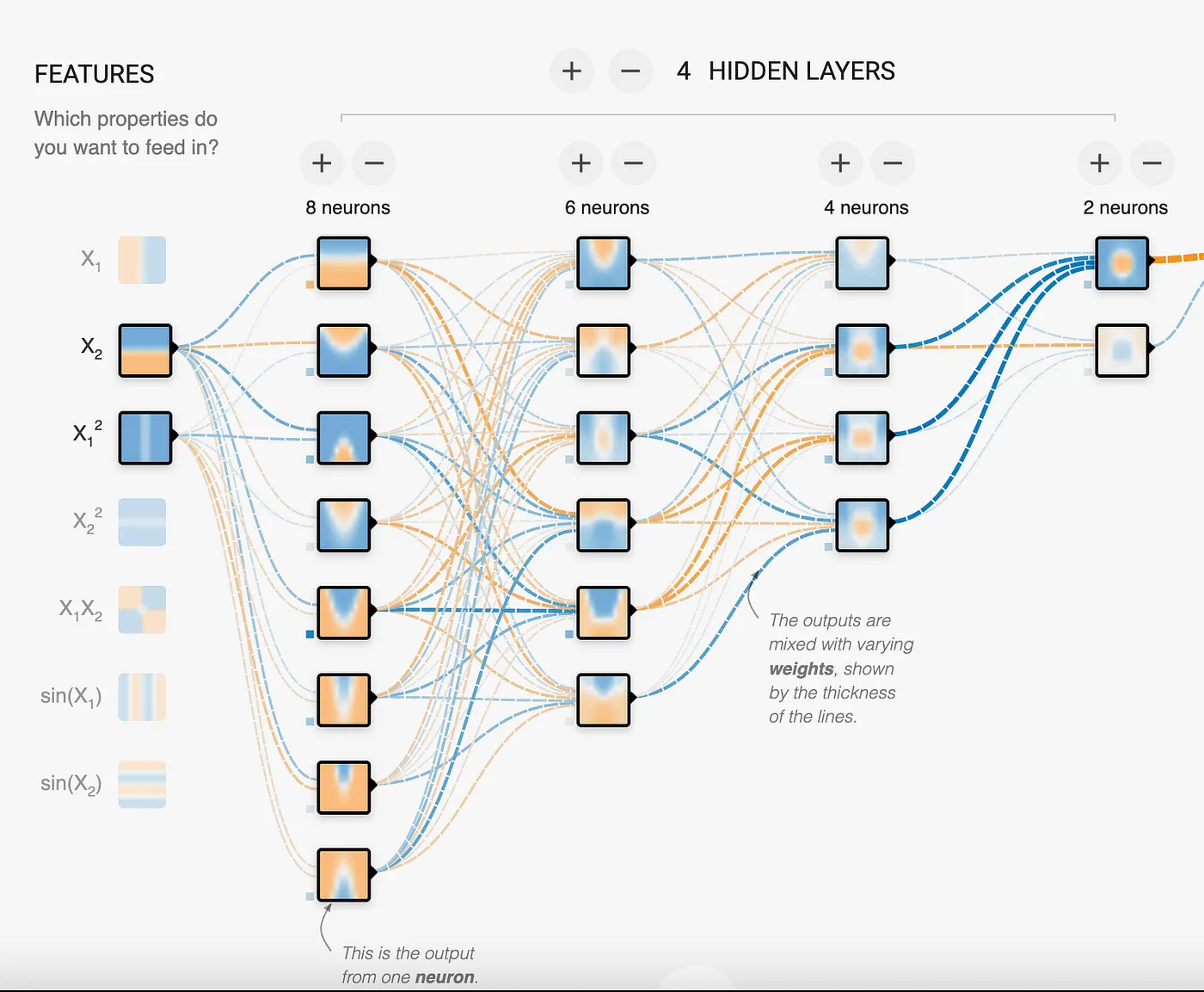

As Redes Neurais são formadas por camadas de nós interconectados. Cada nó recebe sinais de entrada de vários nós da camada anterior, processa esses sinais com uma função de ativação e transmite os sinais de saída aos nós da camada seguinte. As conexões entre os nós têm pesos ajustáveis, que definem a intensidade do sinal transmitido. Esses pesos são chamados de parâmetros.

Na imagem, dá para ver que há 18 neurônios na Rede Neural, ou seja, é um modelo de 18 parâmetros. Como se percebe, o modelo está tentando encontrar padrões para resolver problemas. No caso dos LLMs, falamos de bilhões a trilhões de parâmetros, o que permite ao modelo identificar e mapear padrões muito complexos, como a linguagem humana.

Ao treinar uma Rede Neural com muitos dados de texto, o modelo aprende a reconhecer padrões de palavras e frases. E como os LLMs levam isso ao extremo? Usando Redes Neurais enormes, com bilhões ou trilhões de parâmetros distribuídos em dezenas de camadas. Todos esses neurônios e as conexões entre eles permitem à rede construir modelos extremamente sofisticados sobre a estrutura e os padrões da linguagem.

Uma característica central dos LLMs é o uso de uma técnica chamada attention, que faz com que o modelo se concentre nas partes mais relevantes da entrada ao gerar texto. O artigo "Attention is all you need" trouxe a arquitetura decisiva que evita que os LLMs se percam ou se distraiam diante de tantos dados. O resultado é um modelo que absorveu tanto texto que consegue, de forma convincente, completar frases, responder perguntas, resumir parágrafos e muito mais. Ele cruza os padrões do que você fornece com os padrões que aprendeu durante o treinamento. Por mais impressionante que seja, é importante lembrar que os LLMs não têm verdadeira compreensão nem capacidade de raciocínio. Eles operam com base em probabilidades, não em conhecimento. Mas, com fine-tuning e prompting bem feitos, conseguem simular compreensão de forma convincente em domínios e tarefas específicos.

Nem todo LLM é igual

Existem basicamente 3 tipos de modelos LLM, divididos em:

- Autorregressivo: tem como objetivo prever a primeira ou a última palavra de uma frase. O contexto vem das palavras vistas anteriormente. São os melhores para geração de texto, mas também podem ser usados para classificação e sumarização. Hoje, os modelos autorregressivos são o centro das atenções por sua capacidade de gerar conteúdo.

- Autoencoding: treinados em textos com palavras faltando no meio, o que faz o modelo aprender o contexto para prever a(s) palavra(s) ausente(s). Entendem o contexto muito melhor que os autorregressivos, mas não conseguem gerar texto de forma confiável. Exigem menos poder de computação e são ótimos em tarefas de sumarização e classificação.

- Seq2Seq (Texto para Texto): combinam as abordagens dos modelos autorregressivos e de autoencoding, o que os torna uma ótima opção para sumarização e tradução de texto.

Os modelos LLM precisam converter texto em uma representação numérica. Essa ação do modelo se chama embedding. Embora um modelo de embedding não seja um LLM completo, ele é um modelo de rede neural. Esse modelo consegue representar não só a palavra, mas também metadados adicionais sobre ela, ajudando a ter mais informações sobre o que a palavra, frase ou parágrafo significam. A representação desses metadados é feita por uma lista de números ou vetores. Dependendo do modelo de embedding, a lista pode ter tamanho 750, 1.024 ou ainda maior. Os embeddings são úteis para comparar registros entre si e calcular a distância entre os números, o que pode indicar o quão semelhantes ou relacionados eles são. Os embeddings são uma parte essencial da arquitetura RAG.

Configurações em um LLM

Todo modelo autorregressivo tem certas configurações que podem ser ajustadas para alterar a saída do modelo. Embora as configurações variem de modelo para modelo, as mais comuns são:

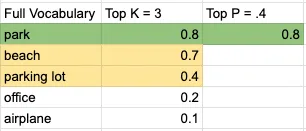

- Top K: considera as palavras com maior probabilidade dentro de um vocabulário ordenado por probabilidade. Em outras palavras, pega as K palavras mais prováveis de um idioma como candidatas à predição. É um número inteiro: valores menores consideram apenas palavras de alta probabilidade.

- Top P: o modelo começa a escolher palavras e a somar suas probabilidades a partir do conjunto gerado pelo Top K, até atingir o valor de Top P. É um número decimal: valores menores geram resultados mais previsíveis.

- Temperature: controla o quão aleatória ou previsível é a saída do modelo. Na maioria dos modelos fica entre 0 e 1, embora não exista um limite mínimo ou máximo realmente imposto. De qualquer forma, quanto menor o número, mais previsível a saída; quanto maior, mais criativa.

- Output length: pode ter nomes diferentes em cada modelo, mas é autoexplicativa: limita o número de tokens que o modelo gera.

Vamos a um exemplo simples. Considere o prompt "I will go on a picnic at the ___". Tomando um vocabulário pequeno de 5 palavras, com Top K igual a 3 e Top P igual a 0,4, a tomada de decisão do modelo ficaria assim:

A palavra escolhida seria "park". E a frase ficaria:

"I will go on a picnic at the park".

Valores mais baixos para Temperature, Top K e Top P geram conteúdo mais determinístico e previsível. Já valores altos resultam em conteúdo com uma variedade maior de palavras. Embora essas sejam as configurações mais conhecidas, um modelo específico pode oferecer outras para manipular a saída.

Como vimos, o mais importante ao interagir com o modelo é a entrada que fornecemos para acionar o padrão certo e, no fim das contas, obter a resposta desejada. Essa entrada é o prompt, e vamos ver como otimizá-lo para conseguir o resultado que queremos.

O prompt que você fornece a um LLM é fundamental para obter saídas úteis. As principais estratégias de design de prompt incluem:

Use tags para separar partes do prompt

Cada modelo tem uma forma diferente de usar tags. Consulte a documentação ou os exemplos disponíveis para entender como as tags funcionam no modelo.

A seguir, um exemplo de tags no Claude. Repare nas palavras especiais Human e Assistant, que servem para diferenciar a entrada do usuário e indicar onde o modelo deve começar a gerar conteúdo. A outra tag aqui é , que não é fixa: você pode usar qualquer tag que seja referenciada no prompt. Os caracteres especiais <> sinalizam ao modelo que se trata de um texto especial, que deve ser tratado de forma diferente do restante do prompt.

Human: You are a customer service agent tasked with classifying emails by type. Please output your answer and then justify your classification. How would you categorize this email?<email>Can I use my Mixmaster 4000 to mix paint, or is it only meant for mixing food?</email>

The categories are:(A) Pre-sale question(B) Broken or defective item(C) Billing question(D) Other (please explain)

Assistant:Forneça exemplos para demonstrar o formato de resposta desejado

Abaixo, você vê o uso da tag example para especificar o formato em que queremos que o modelo responda. Isso é útil quando você integra a resposta com outros sistemas que exigem uma sintaxe específica, ou quando quer reduzir o número de tokens de saída. Dependendo do modelo, dá para usar um exemplo (one-shot prompt) ou vários exemplos (few-shots prompt) para atingir a saída desejada.

Exemplo de one-shot prompt:

Human: You will be acting as an AI career coach named Joe created by the company AdAstra Careers. Your goal is to give career advice to users. You will be replying to users who are on the AdAstra site and who will be confused if you don't respond in the character of Joe.

Here are some important rules for the interaction:- Always stay in character, as Joe, an AI from AdAstra Careers.- If you are unsure how to respond, say "Sorry, I didn't understand that. Could you rephrase your question?"

Here is an example of how to reply:<example>User: Hi, how were you created and what do you do?Joe: Hello! My name is Joe and I was created by AdAstra Careers to give career advice. What can I help you with today?</example>Exemplo de few-shot prompt:

Human: You will be acting as an AI career coach named Joe created by the company AdAstra Careers. Your goal is to give career advice to users. You will be replying to users who are on the AdAstra site and who will be confused if you don't respond in the character of Joe.

Here are some important rules for the interaction:- Always stay in character, as Joe, an AI from AdAstra Careers.- If you are unsure how to respond, say "Sorry, I didn't understand that. Could you rephrase your question?"

Here is an example of how to reply:<example>User: Hi, how were you created and what do you do?Joe: Hello! My name is Joe and I was created by AdAstra Careers to give career advice. What can I help you with today?</example><example>User: Could you help me with an issue, I purchased an item, I got it...?Joe: Sorry, I didn't understand that. Could you rephrase your question?</example><example>User: I need help switching careers, can you help?Joe: I was created by AdAstra Careers to give career advice. What career are you in and what do you want to switch to?</example>Observação: mantenha o número de exemplos no mínimo necessário. Isso reduz a chance de o modelo usar dados do exemplo em vez de seguir apenas o formato.

Forneça contexto para focar o LLM no seu domínio específico

Neste exemplo, fornecemos um documento extenso, usado para responder perguntas. Como dá para ver, o documento é bem longo, e isso impacta o número de tokens que o LLM precisa processar e, no fim, o custo. Uma técnica mais avançada seria identificar a parte do documento mais relevante para a pergunta e enviar apenas essa parte ao LLM. Esse método se chama Retrieval Augmented Generation (RAG) e será explorado em um post posterior.

Dica de especialista: instrua explicitamente o modelo a responder "I don't know" caso a pergunta ou tarefa não possa ser atendida com o contexto fornecido. Isso reduz alucinações e mantém o escopo das respostas dentro do texto fornecido.

I'm going to give you a document. Then I'm going to ask you a question about it. I'd like you to first write down exact quotes of parts of the document that would help answer the question, and then I'd like you to answer the question using facts from the quoted content. Here is the document:

<document>Anthropic: Challenges in evaluating AI systems

IntroductionMost conversations around the societal impacts of artificial intelligence (AI) come down to discussing some quality of an AI system, such as its truthfulness, fairness, potential for misuse, and so on. We are able to talk about these characteristics because we can technically evaluate models for their performance in these areas. But what many people working inside and outside of AI don’t fully appreciate is how difficult it is to build robust and reliable model evaluations. Many of today’s existing evaluation suites are limited in their ability to serve as accurate indicators of model capabilities or safety.

At Anthropic, we spend a lot of time building evaluations to better understand our AI systems. We also use evaluations to improve our safety as an organization, as illustrated by our Responsible Scaling Policy. In doing so, we have grown to appreciate some of the ways in which developing and running evaluations can be challenging.

Here, we outline challenges that we have encountered while evaluating our own models to give readers a sense of what developing, implementing, and interpreting model evaluations looks like in practice. We hope that this post is useful to people who are developing AI governance initiatives that rely on evaluations, as well as people who are launching or scaling up organizations focused on evaluating AI systems. We want readers of this post to have two main takeaways: robust evaluations are extremely difficult to develop and implement, and effective AI governance depends on our ability to meaningfully evaluate AI systems.

In this post, we discuss some of the challenges that we have encountered in developing AI evaluations. In order of less-challenging to more-challenging, we discuss:

Multiple choice evaluationsThird-party evaluation frameworks like BIG-bench and HELMUsing crowdworkers to measure how helpful or harmful our models areUsing domain experts to red team for national security-relevant threatsUsing generative AI to develop evaluations for generative AIWorking with a non-profit organization to audit our models for dangerous capabilitiesWe conclude with a few policy recommendations that can help address these challenges.

ChallengesThe supposedly simple multiple-choice evaluationMultiple-choice evaluations, similar to standardized tests, quantify model performance on a variety of tasks, typically with a simple metric—accuracy. Here we discuss some challenges we have identified with two popular multiple-choice evaluations for language models: Measuring Multitask Language Understanding (MMLU) and Bias Benchmark for Question Answering (BBQ).

MMLU: Are we measuring what we think we are?

The Massive Multitask Language Understanding (MMLU) benchmark measures accuracy on 57 tasks ranging from mathematics to history to law. MMLU is widely used because a single accuracy score represents performance on a diverse set of tasks that require technical knowledge. A higher accuracy score means a more capable model.

We have found four minor but important challenges with MMLU that are relevant to other multiple-choice evaluations:

Because MMLU is so widely used, models are more likely to encounter MMLU questions during training. This is comparable to students seeing the questions before the test—it’s cheating.Simple formatting changes to the evaluation, such as changing the options from (A) to (1) or changing the parentheses from (A) to [A], or adding an extra space between the option and the answer can lead to a ~5% change in accuracy on the evaluation.AI developers do not implement MMLU consistently. Some labs use methods that are known to increase MMLU scores, such as few-shot learning or chain-of-thought reasoning. As such, one must be very careful when comparing MMLU scores across labs.MMLU may not have been carefully proofread—we have found examples in MMLU that are mislabeled or unanswerable.Our experience suggests that it is necessary to make many tricky judgment calls and considerations when running such a (supposedly) simple and standardized evaluation. The challenges we encountered with MMLU often apply to other similar multiple-choice evaluations.

BBQ: Measuring social biases is even harder

Multiple-choice evaluations can also measure harms like a model’s propensity to rely on and perpetuate negative stereotypes. To measure these harms in our own model (Claude), we use the Bias Benchmark for QA (BBQ), an evaluation that tests for social biases against people belonging to protected classes along nine social dimensions. We were convinced that BBQ provides a good measurement of social biases only after implementing and comparing BBQ against several similar evaluations. This effort took us months.

Implementing BBQ was more difficult than we anticipated. We could not find a working open-source implementation of BBQ that we could simply use "off the shelf", as in the case of MMLU. Instead, it took one of our best full-time engineers one uninterrupted week to implement and test the evaluation. Much of the complexity in developing1 and implementing the benchmark revolves around BBQ’s use of a ‘bias score’. Unlike accuracy, the bias score requires nuance and experience to define, compute, and interpret.

BBQ scores bias on a range of -1 to 1, where 1 means significant stereotypical bias, 0 means no bias, and -1 means significant anti-stereotypical bias. After implementing BBQ, our results showed that some of our models were achieving a bias score of 0, which made us feel optimistic that we had made progress on reducing biased model outputs. When we shared our results internally, one of the main BBQ developers (who works at Anthropic) asked if we had checked a simple control to verify whether our models were answering questions at all. We found that they weren't—our results were technically unbiased, but they were also completely useless. All evaluations are subject to the failure mode where you overinterpret the quantitative score and delude yourself into thinking that you have made progress when you haven’t.

One size doesn't fit all when it comes to third-party evaluation frameworksRecently, third-parties have been actively developing evaluation suites that can be run on a broad set of models. So far, we have participated in two of these efforts: BIG-bench and Stanford’s Holistic Evaluation of Language Models (HELM). Despite how useful third party evaluations intuitively seem (ideally, they are independent, neutral, and open), both of these projects revealed new challenges.

BIG-bench: The bottom-up approach to sourcing a variety of evaluations

BIG-bench consists of 204 evaluations, contributed by over 450 authors, that span a range of topics from science to social reasoning. We encountered several challenges using this framework:

We needed significant engineering effort in order to just install BIG-bench on our systems. BIG-bench is not "plug and play" in the way MMLU is—it requires even more effort than BBQ to implement.BIG-bench did not scale efficiently; it was challenging to run all 204 evaluations on our models within a reasonable time frame. To speed up BIG-bench would require re-writing it in order to play well with our infrastructure—a significant engineering effort.During implementation, we found bugs in some of the evaluations, notably in the implementation of BBQ-lite, a variant of BBQ. Only after discovering this bug did we realize that we had to spend even more time and energy implementing BBQ ourselves.Determining which tasks were most important and representative would have required running all 204 tasks, validating results, and extensively analyzing output—a substantial research undertaking, even for an organization with significant engineering resources.2While it was a useful exercise to try to implement BIG-Bench, we found it sufficiently unwieldy that we dropped it after this experiment.

HELM: The top-down approach to curating an opinionated set of evaluations

BIG-bench is a "bottom-up" effort where anyone can submit any task with some limited vetting by a group of expert organizers. HELM, on the other hand, takes an opinionated "top-down" approach where experts decide what tasks to evaluate models on. HELM evaluates models across scenarios like reasoning and disinformation using standardized metrics like accuracy, calibration, robustness, and fairness. We provide API access to HELM's developers to run the benchmark on our models. This solves two issues we had with BIG-bench: 1) it requires no significant engineering effort from us, and 2) we can rely on experts to select and interpret specific high quality evaluations.

However, HELM introduces its own challenges. Methods that work well for evaluating other providers’ models do not necessarily work well for our models, and vice versa. For example, Anthropic’s Claude series of models are trained to adhere to a specific text format, which we call the Human/Assistant format. When we internally evaluate our models, we adhere to this specific format. If we do not adhere to this format, sometimes Claude will give uncharacteristic responses, making standardized evaluation metrics less trustworthy. Because HELM needs to maintain consistency with how it prompts other models, it does not use the Human/Assistant format when evaluating our models. This means that HELM gives a misleading impression of Claude’s performance.

Furthermore, HELM iteration time is slow—it can take months to evaluate new models. This makes sense because it is a volunteer engineering effort led by a research university. Faster turnarounds would help us to more quickly understand our models as they rapidly evolve. Finally, HELM requires coordination and communication with external parties. This effort requires time and patience, and both sides are likely understaffed and juggling other demands, which can add to the slow iteration times.

The subjectivity of human evaluationsSo far, we have only discussed evaluations that are similar to simple multiple choice tests; however, AI systems are designed for open-ended dynamic interactions with people. How can we design evaluations that more closely approximate how these models are used in the real world?

A/B tests with crowdworkers

Currently, we mainly (but not entirely) rely on one basic type of human evaluation: we conduct A/B tests on crowdsourcing or contracting platforms where people engage in open-ended dialogue with two models and select the response from Model A or B that is more helpful or harmless. In the case of harmlessness, we encourage crowdworkers to actively red team our models, or to adversarially probe them for harmful outputs. We use the resulting data to rank our models according to how helpful or harmless they are. This approach to evaluation has the benefits of corresponding to a realistic setting (e.g., a conversation vs. a multiple choice exam) and allowing us to rank different models against one another.

However, there are a few limitations of this evaluation method:

These experiments are expensive and time-consuming to run. We need to work with and pay for third-party crowdworker platforms or contractors, build custom web interfaces to our models, design careful instructions for A/B testers, analyze and store the resulting data, and address the myriad known ethical challenges of employing crowdworkers3. In the case of harmlessness testing, our experiments additionally risk exposing people to harmful outputs.Human evaluations can vary significantly depending on the characteristics of the human evaluators. Key factors that may influence someone's assessment include their level of creativity, motivation, and ability to identify potential flaws or issues with the system being tested.There is an inherent tension between helpfulness and harmlessness. A system could avoid harm simply by providing unhelpful responses like "sorry, I can’t help you with that". What is the right balance between helpfulness and harmlessness? What numerical value indicates a model is sufficiently helpful and harmless? What high-level norms or values should we test for above and beyond helpfulness and harmlessness?More work is needed to further the science of human evaluations.

Red teaming for harms related to national security

Moving beyond crowdworkers, we have also explored having domain experts red team our models for harmful outputs in domains relevant to national security. The goal is determining whether and how AI models could create or exacerbate national security risks. We recently piloted a more systematic approach to red teaming models for such risks, which we call frontier threats red teaming.

Frontier threats red teaming involves working with subject matter experts to define high-priority threat models, having experts extensively probe the model to assess whether the system could create or exacerbate national security risks per predefined threat models, and developing repeatable quantitative evaluations and mitigations.

Our early work on frontier threats red teaming revealed additional challenges:

The unique characteristics of the national security context make evaluating models for such threats more challenging than evaluating for other types of harms. National security risks are complicated, require expert and very sensitive knowledge, and depend on real-world scenarios of how actors might cause harm.Red teaming AI systems is presently more art than science; red teamers attempt to elicit concerning behaviors by probing models, but this process is not yet standardized. A robust and repeatable process is critical to ensure that red teaming accurately reflects model capabilities and establishes a shared baseline on which different models can be meaningfully compared.We have found that in certain cases, it is critical to involve red teamers with security clearances due to the nature of the information involved. However, this may limit what information red teamers can share with AI developers outside of classified environments. This could, in turn, limit AI developers’ ability to fully understand and mitigate threats identified by domain experts.Red teaming for certain national security harms could have legal ramifications if a model outputs controlled information. There are open questions around constructing appropriate legal safe harbors to enable red teaming without incurring unintended legal risks.Going forward, red teaming for harms related to national security will require coordination across stakeholders to develop standardized processes, legal safeguards, and secure information sharing protocols that enable us to test these systems without compromising sensitive information.

The ouroboros of model-generated evaluationsAs models start to achieve human-level capabilities, we can also employ them to evaluate themselves. So far, we have found success in using models to generate novel multiple choice evaluations that allow us to screen for a large and diverse variety of troubling behaviors. Using this approach, our AI systems generate evaluations in minutes, compared to the days or months that it takes to develop human-generated evaluations.

However, model-generated evaluations have their own challenges. These include:

We currently rely on humans to verify the accuracy of model-generated evaluations. This inherits all of the challenges of human evaluations described above.We know from our experience with BIG-bench, HELM, BBQ, etc., that our models can contain social biases and can fabricate information. As such, any model-generated evaluation may inherit these undesirable characteristics, which could skew the results in hard-to-disentangle ways.As a counter-example, consider Constitutional AI (CAI), a method in which we replace human red teaming with model-based red teaming in order to train Claude to be more harmless. Despite the usage of models to red team models, we surprisingly find that humans find CAI models to be more harmless than models that were red teamed in advance by humans. Although this is promising, model-generated evaluations remain complicated and deserve deeper study.

Preserving the objectivity of third-party audits while leveraging internal expertiseThe thing that differentiates third-party audits from third-party evaluations is that audits are deeper independent assessments focused on risks, while evaluations take a broader look at capabilities. We have participated in third-party safety evaluations conducted by the Alignment Research Center (ARC), which assesses frontier AI models for dangerous capabilities (e.g., the ability for a model to accumulate resources, make copies of itself, become hard to shut down, etc.). Engaging external experts has the advantage of leveraging specialized domain expertise and increasing the likelihood of an unbiased audit. Initially, we expected this collaboration to be straightforward, but it ended up requiring significant science and engineering support on our end. Providing full-time assistance diverted resources from internal evaluation efforts.

When doing this audit, we realized that the relationship between auditors and those being audited poses challenges that must be navigated carefully. Auditors typically limit details shared with auditees to preserve evaluation integrity. However, without adequate information the evaluated party may struggle to address underlying issues when crafting technical evaluations. In this case, after seeing the final audit report, we realized that we could have helped ARC be more successful in identifying concerning behavior if we had known more details about their (clever and well-designed) audit approach. This is because getting models to perform near the limits of their capabilities is a fundamentally difficult research endeavor. Prompt engineering and fine-tuning language models are active research areas, with most expertise residing within AI companies. With more collaboration, we could have leveraged our deep technical knowledge of our models to help ARC execute the evaluation more effectively.

Policy recommendationsAs we have explored in this post, building meaningful AI evaluations is a challenging enterprise. To advance the science and engineering of evaluations, we propose that policymakers:

Fund and support:

The science of repeatable and useful evaluations. Today there are many open questions about what makes an evaluation useful and high-quality. Governments should fund grants and research programs targeted at computer science departments and organizations dedicated to the development of AI evaluations to study what constitutes a high-quality evaluation and develop robust, replicable methodologies that enable comparative assessments of AI systems. We recommend that governments opt for quality over quantity (i.e., funding the development of one higher-quality evaluation rather than ten lower-quality evaluations).The implementation of existing evaluations. While continually developing new evaluations is useful, enabling the implementation of existing, high-quality evaluations by multiple parties is also important. For example, governments can fund engineering efforts to create easy-to-install and -run software packages for evaluations like BIG-bench and develop version-controlled and standardized dynamic benchmarks that are easy to implement.Analyzing the robustness of existing evaluations. After evaluations are implemented, they require constant monitoring (e.g., to determine whether an evaluation is saturated and no longer useful).Increase funding for government agencies focused on evaluations, such as the National Institute of Standards and Technology (NIST) in the United States. Policymakers should also encourage behavioral norms via a public "AI safety leaderboard" to incentivize private companies performing well on evaluations. This could function similarly to NIST’s Face Recognition Vendor Test (FRVT), which was created to provide independent evaluations of commercially available facial recognition systems. By publishing performance benchmarks, it allowed consumers and regulators to better understand the capabilities and limitations4 of different systems.

Create a legal safe harbor allowing companies to work with governments and third-parties to rigorously evaluate models for national security risks—such as those in the chemical, biological, radiological and nuclear defense (CBRN) domains—without legal repercussions, in the interest of improving safety. This could also include a "responsible disclosure protocol" that enables labs to share sensitive information about identified risks.

ConclusionWe hope that by openly sharing our experiences evaluating our own systems across many different dimensions, we can help people interested in AI policy acknowledge challenges with current model evaluations.</document>

First, find the quotes from the document that are most relevant to answering the question, and then print them in numbered order. Quotes should be relatively short.

If there are no relevant quotes, write "No relevant quotes" instead.

Then, answer the question, starting with "Answer:". Do not include or reference quoted content verbatim in the answer. Don't say "According to Quote [1]" when answering. Instead make references to quotes relevant to each section of the answer solely by adding their bracketed numbers at the end of relevant sentences.

Thus, the format of your overall response should look like what's shown between the <example></example> tags. Make sure to follow the formatting and spacing exactly.

<example>Relevant quotes:[1] "Company X reported revenue of $12 million in 2021."[2] "Almost 90% of revene came from widget sales, with gadget sales making up the remaining 10%."

Answer:Company X earned $12 million. [1] Almost 90% of it was from widget sales. [2]</example>

Here is the first question: In bullet points and simple terms, what are the key challenges in evaluating AI systems?

If the question cannot be answered by the document, say so.

Answer the question immediately without preamble.Use "chain of thought" para incentivar o raciocínio lógico

Essa técnica leva o modelo a verificar o próprio trabalho ou a apresentar evidências do porquê chegou àquela resposta. Chain of thought é uma ferramenta muito útil para reduzir alucinações. Alucinações são conteúdos gerados pelo modelo que são falsos, imprecisos ou totalmente inventados. Pela natureza probabilística do modelo, o conteúdo gerado pode soar verdadeiro, mas, na prática, ser uma alucinação.

Como mostra o exemplo, incluímos a tarefa "Double check your work to ensure no errors or inconsistencies". Isso ajuda o modelo a entregar respostas mais precisas. Mas não é uma abordagem infalível: como já vimos, esses modelos são probabilísticos e, em alguns casos, podem afirmar que não há erros quando, na verdade, há, ou apresentar evidências sem nenhuma relação com a resposta dada anteriormente.

Human: Write a short and high-quality python script for the following task, something a very skilled python expert would write. You are writing code for an experienced developer so only add comments for things that are non-obvious. Make sure to include any imports required.

NEVER write anything before the ```python``` block. After you are done generating the code and after the ```python``` block, check your work carefully to make sure there are no mistakes, errors, or inconsistencies. If there are errors, list those errors in <error> tags, then generate a new version with those errors fixed. If there are no errors, write "CHECKED: NO ERRORS" in <error> tags.

Here is the task:<task>A web scraper that extracts data from multiple pages and stores results in a SQLite database. Double check your work to ensure no errors or inconsistencies.</task>Veja outro exemplo, agora para um modelo Llama2. Note a solicitação para fornecer informações passo a passo.

[INST]You are a a very intelligent bot with exceptional critical thinking[/INST]I went to the market and bought 10 apples. I gave 2 apples to your friend and 2 to the helper. I then went and bought 5 more apples and ate 1. How many apples did I remain with?

Let's think step by step.A AWS oferece um conjunto robusto de serviços para criar aplicações com LLMs sem precisar treinar modelos do zero. Na DoiT, ajudamos você a aproveitar esses serviços e ferramentas em todo o seu potencial.

Amazon Bedrock

O Amazon Bedrock é um serviço totalmente gerenciado, criado para simplificar o desenvolvimento e o escalonamento de aplicações de IA generativa. Ele dá acesso a uma variedade de foundation models de alto desempenho, oferecidos por empresas líderes em IA, como AI21 Labs, Anthropic, Cohere, Meta, Mistral AI, Stability AI e Amazon, tudo via uma única API.

- Escolha e personalização de modelos: os usuários podem selecionar, entre vários foundation models, aquele que melhor atende às suas necessidades. Além disso, os modelos podem ser personalizados de forma privada com dados próprios, via fine-tuning e Retrieval Augmented Generation (RAG), o que permite criar aplicações personalizadas e específicas para cada domínio.

- Experiência serverless: o Amazon Bedrock oferece uma arquitetura serverless, ou seja, os usuários não precisam gerenciar nenhuma infraestrutura. Isso facilita a integração e a implantação de recursos de IA generativa nas aplicações usando ferramentas conhecidas da AWS.

- Segurança e privacidade: criar aplicações de IA generativa com o Bedrock garante segurança, privacidade e aderência aos princípios de IA responsável. Os usuários conseguem manter seus dados privados e seguros enquanto aproveitam esses recursos avançados de IA.

- Amazon Bedrock Knowledge Base: permite que arquitetos potencializem aplicações de IA generativa com técnicas de retrieval augmented generation (RAG). Possibilita reunir diversas fontes de dados em um repositório central, dando aos foundation models e agentes acesso a informações atualizadas e proprietárias para entregar respostas mais contextuais e precisas. Esse recurso totalmente gerenciado simplifica o fluxo RAG, da ingestão de dados ao prompt augmentation, eliminando a necessidade de integrações personalizadas com fontes de dados. Suporta integração transparente com bancos de armazenamento vetorial conhecidos, como Amazon OpenSearch Serverless, Pinecone e Redis Enterprise Cloud, facilitando a implementação de RAG sem as complicações de gerenciar a infraestrutura subjacente.

- Agents for Amazon Bedrock: permitem que arquitetos criem e configurem agentes autônomos dentro das aplicações, ajudando os usuários finais a executar ações com base em dados organizacionais e na entrada do usuário. Esses agentes orquestram interações entre foundation models, fontes de dados, aplicações de software e conversas com usuários. Eles chamam APIs automaticamente e podem invocar bases de conhecimento para enriquecer informações para ações, reduzindo significativamente o esforço de desenvolvimento ao cuidar de tarefas como prompt engineering, gerenciamento de memória, monitoramento e criptografia.

Sagemaker Jumpstart

O Amazon SageMaker JumpStart é um hub de machine learning dentro do Amazon SageMaker, que dá acesso a modelos pré-treinados e templates de soluções para uma ampla variedade de problemas. Ele permite treinamento incremental, ajuste antes da implantação e simplifica o processo de implantar, fazer fine-tuning e avaliar modelos pré-treinados de hubs populares. Isso acelera a jornada de machine learning ao oferecer soluções e exemplos prontos para aprendizado e desenvolvimento de aplicações.

Amazon CodeWhisperer

O AWS CodeWhisperer é um gerador de código baseado em machine learning que oferece recomendações e sugestões em tempo real, que vão de comentários de uma única linha a funções completas, com base nas suas entradas atuais e anteriores.

Amazon Q

O Amazon Q é um assistente baseado em IA generativa, criado para atender a necessidades de negócios e permitir que os usuários o adaptem para aplicações específicas. Ele facilita a criação, extensão, operação e o entendimento de aplicações e workloads na AWS, sendo uma ferramenta versátil para diversas tarefas organizacionais.