Kubernetes has become the go-to platform for container orchestration, making it easier for organizations to deploy, scale, and manage containerized apps in the cloud. But with this power comes complexity, and often surprise costs from underutilized nodes, poorly tuned vertical pod autoscaling, inefficient requests/limits settings, or long-running idle workloads. Kubernetes does not natively report costs across clusters or namespaces, so cost observability stays fragmented without additional tooling.

Without a clear optimization strategy, Kubernetes expenses can spiral. High Kubernetes costs typically stem from specific architectural and operational issues: overprovisioned node pools that exceed actual workload demands, inefficient bin-packing of pods across nodes that leaves valuable compute capacity unused, and missing autoscaling (the Horizontal Pod Autoscaler, Vertical Pod Autoscaler, or Cluster Autoscaler) that would adjust resources dynamically based on usage.

Taking a proactive, close look at Kubernetes spending helps control costs and maximize ROI. This article shares practical tips and tools to help teams take charge of Kubernetes workloads and spending while keeping operations running smoothly.

Quick answers: Kubernetes cost optimization

- What is Kubernetes cost optimization?

- Kubernetes cost optimization is the practice of reducing cluster spend by improving resource efficiency, right-sizing requests and limits, scaling nodes and pods dynamically, and eliminating idle infrastructure.

- What causes high Kubernetes costs?

- The most common causes are overprovisioned node pools, inflated pod requests, poor bin-packing, unused storage, idle clusters, and unexpected network egress.

- How do you reduce Kubernetes costs quickly?

- Start by fixing requests/limits, cleaning up idle resources, shutting down non-production clusters outside working hours, enabling autoscaling, and using discounted compute (spot/preemptible) for interruption-tolerant workloads.

What factors make Kubernetes cost optimization necessary?

Kubernetes environments can become significant cost centers without proper management. Several factors contribute to these expenses:

Compute resource inefficiency often tops the list of cost concerns. Without rightsizing, many organizations overprovision CPU and memory to avoid performance issues, resulting in low utilization and wasted cloud resources. Research shows that over 65% of Kubernetes workloads use less than half of their requested CPU and memory. This happens mostly because teams set resource requests well above actual usage, often as a safety margin. Because the Kubernetes scheduler places pods based on resource requests, not actual usage, inflated requests lead to poor bin-packing and unnecessarily high node counts.
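To see why inflated requests inflate node counts, the sketch below packs the same ten pods twice, once by their requests and once by observed usage. It is a simplified first-fit-decreasing estimate, not the kube-scheduler's actual filtering and scoring logic, and the pod sizes and node shape (4 CPU / 8 GiB allocatable) are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pod:
    name: str
    cpu_request_m: int   # requested CPU, millicores
    mem_request_mi: int  # requested memory, MiB
    cpu_used_m: int      # observed CPU usage (e.g. p95), millicores
    mem_used_mi: int     # observed memory usage, MiB


def nodes_needed(demands, node_cpu_m, node_mem_mi):
    """Estimate how many identical nodes are needed for (cpu_m, mem_mi) demands.

    First-fit decreasing: place the largest demands first, each on the first
    node with enough free CPU and memory, opening a new node if none fits.
    """
    free = []  # per node: [free_cpu_m, free_mem_mi]
    for cpu, mem in sorted(demands, reverse=True):
        if cpu > node_cpu_m or mem > node_mem_mi:
            raise ValueError(f"demand ({cpu}m, {mem}Mi) exceeds an empty node")
        for node in free:
            if node[0] >= cpu and node[1] >= mem:
                node[0] -= cpu
                node[1] -= mem
                break
        else:  # no existing node had room
            free.append([node_cpu_m - cpu, node_mem_mi - mem])
    return len(free)


# Hypothetical workload: 10 replicas requesting 1 CPU / 2 GiB but using ~0.3 CPU / 600 MiB.
pods = [Pod(f"api-{i}", 1000, 2048, 300, 600) for i in range(10)]
by_request = nodes_needed([(p.cpu_request_m, p.mem_request_mi) for p in pods], 4000, 8192)
by_usage = nodes_needed([(p.cpu_used_m, p.mem_used_mi) for p in pods], 4000, 8192)
print(f"nodes by request: {by_request}, nodes by usage: {by_usage}")  # 3 vs 1
```

Packing by raw usage with zero headroom would be unsafe in practice; the point is the size of the gap, which is what rightsizing requests recovers.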

Storage costs can accumulate rapidly in Kubernetes cloud environments. Persistent volumes, especially on premium storage classes, become a significant expense as application data grows. Other contributors include PersistentVolumeClaims (PVCs) retained after their pods are deleted and excessive snapshot or backup retention. Optimizing storage often requires coordination between infrastructure and application teams, especially for stateful workloads where data decisions affect both performance and spending.
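A first pass at finding retained PVCs is to compare every PersistentVolumeClaim against the claims that pods actually mount. The sketch below assumes the JSON shape returned by `kubectl get pods -A -o json` and `kubectl get pvc -A -o json` (an `items` list whose entries carry `metadata` and, for pods, `spec.volumes[].persistentVolumeClaim.claimName`); the sample data is hypothetical.

```python
def unreferenced_pvcs(pods_json, pvcs_json):
    """Return sorted (namespace, name) pairs for PVCs that no listed pod mounts."""
    in_use = set()
    for pod in pods_json.get("items", []):
        namespace = pod["metadata"]["namespace"]
        for volume in pod.get("spec", {}).get("volumes") or []:
            claim = volume.get("persistentVolumeClaim")
            if claim:  # volume backed by a PVC in the pod's own namespace
                in_use.add((namespace, claim["claimName"]))
    every_pvc = {
        (pvc["metadata"]["namespace"], pvc["metadata"]["name"])
        for pvc in pvcs_json.get("items", [])
    }
    return sorted(every_pvc - in_use)


# Hypothetical snapshot: one pod mounts "data-0"; "old-export" is mounted by nothing.
pods = {"items": [{
    "metadata": {"namespace": "analytics"},
    "spec": {"volumes": [{"name": "data", "persistentVolumeClaim": {"claimName": "data-0"}}]},
}]}
pvcs = {"items": [
    {"metadata": {"namespace": "analytics", "name": "data-0"}},
    {"metadata": {"namespace": "analytics", "name": "old-export"}},
]}
print(unreferenced_pvcs(pods, pvcs))  # [('analytics', 'old-export')]
```

An unmounted claim is a review candidate, not proof of waste: a StatefulSet scaled to zero or a CronJob between runs also leaves claims unmounted.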

Network expenses are another cost factor, particularly in multi-region or hybrid deployments. Data transfer between zones, regions, and external services can lead to significant charges that teams often overlook during initial architecture planning.

Idle resources are also a major source of waste. Development and testing clusters running after hours, leftover persistent volumes, and unused load balancers can all contribute to unnecessary costs. Without governance, these resources add up and drive up total Kubernetes spend.

Key strategies for lowering your Kubernetes costs

Understanding how these factors interact is key to designing a cost-efficient Kubernetes architecture. Here are strategies you can implement to lower Kubernetes costs.

1. Rightsize node resources

Rightsizing is one of the most impactful strategies for reducing Kubernetes expenses. Kubernetes scheduling is driven by requested resources, so inflated requests often force higher node counts even when actual usage is low.

Start by analyzing historical utilization across clusters. Identify pods consistently using significantly less CPU or memory than requested. Many organizations can reduce requests without impacting performance, allowing more workloads to run on existing nodes.
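One common heuristic for spotting over-requested containers is to compare a high percentile of observed usage against the current request. The sketch below uses the nearest-rank 95th percentile plus 15% headroom; the percentile, headroom, 50% flag ratio, and sample values are illustrative assumptions rather than recommended defaults, and units are whatever the samples use (millicores here).

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty sequence of numbers."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def recommend_request(samples, pct=95, headroom=0.15, floor=10):
    """Suggested request: the pct-th percentile of usage plus a safety margin."""
    return max(floor, math.ceil(percentile(samples, pct) * (1 + headroom)))


def is_overprovisioned(request, samples, ratio=0.5, pct=95):
    """Flag a container whose high-percentile usage is under `ratio` of its request."""
    return percentile(samples, pct) < ratio * request


# Hypothetical CPU samples (millicores) for a container that requests 500m.
cpu_samples = list(range(1, 101))
print(is_overprovisioned(500, cpu_samples), recommend_request(cpu_samples))  # True 110
```

Memory usually deserves a larger margin than CPU, because a container that exceeds its memory limit is OOMKilled, while CPU overuse is only throttled.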

Set resource quotas at the namespace level to prevent runaway usage and create clear boundaries for teams. Quotas also help reduce unexpected cost spikes that force unnecessary scaling.
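A namespace quota is a standard `v1` `ResourceQuota` object. The sketch below builds one as a Python dict and prints it as JSON, which `kubectl apply -f` accepts just like YAML; the namespace, object name, and amounts are hypothetical.

```python
import json


def compute_quota(namespace, cpu_requests, mem_requests, cpu_limits, mem_limits):
    """Build a ResourceQuota capping the namespace's aggregate requests and limits."""
    return {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": "compute-quota", "namespace": namespace},
        "spec": {
            "hard": {
                "requests.cpu": cpu_requests,   # sum of CPU requests across pods
                "requests.memory": mem_requests,
                "limits.cpu": cpu_limits,       # sum of CPU limits across pods
                "limits.memory": mem_limits,
            }
        },
    }


quota = compute_quota("team-a", "20", "64Gi", "40", "128Gi")
print(json.dumps(quota, indent=2))
```

Once a namespace has a compute quota, the API server rejects pods that omit the corresponding requests or limits unless a `LimitRange` supplies defaults, which also nudges teams toward declaring them.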

Enforce requests/limits policies. Use a policy enforcement tool such as OPA Gatekeeper to ensure every workload specifies both. Requests determine scheduling and therefore compute costs, while limits keep noisy neighbors from destabilizing other workloads. Without proper requests, Kubernetes cannot bin-pack efficiently, leading to underutilized nodes and wasted spend.
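Gatekeeper policies are written in Rego, but the rule itself is easy to state. As a language-neutral sketch (plain Python over a pod manifest dict, not Gatekeeper's API), the check below lists every container or init container that omits a CPU or memory request or limit. Which fields to require is an assumption you can narrow, for example if your teams intentionally skip CPU limits to avoid throttling.

```python
REQUIRED = (("requests", "cpu"), ("requests", "memory"),
            ("limits", "cpu"), ("limits", "memory"))


def missing_resources(pod, required=REQUIRED):
    """Return 'container: kind.resource' strings for each missing setting."""
    spec = pod.get("spec", {})
    containers = spec.get("initContainers", []) + spec.get("containers", [])
    problems = []
    for container in containers:
        resources = container.get("resources") or {}
        for kind, resource in required:
            if resource not in (resources.get(kind) or {}):
                problems.append(f"{container['name']}: {kind}.{resource}")
    return problems


# Hypothetical pod that sets everything except a CPU limit.
pod = {"spec": {"containers": [{
    "name": "app",
    "resources": {"requests": {"cpu": "250m", "memory": "256Mi"},
                  "limits": {"memory": "512Mi"}},
}]}}
print(missing_resources(pod))  # ['app: limits.cpu']
```

An admission policy built on this rule would deny the pod whenever the returned list is non-empty.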

Consider running the Vertical Pod Autoscaler (VPA) in recommendation mode first. This provides safe guidance based on usage patterns before you move to automatic updates. VPA can be combined with the Horizontal Pod Autoscaler (HPA), but the two conflict when both act on the same CPU or memory signal, so a common pattern is to scale HPA on custom or external metrics (such as queue depth) while VPA manages requests.
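Recommendation mode corresponds to `updateMode: "Off"` in a VerticalPodAutoscaler object: the VPA recommender writes suggested requests to the object's status but does not evict or mutate pods. The sketch assumes the VPA components are installed in the cluster (they are not part of core Kubernetes) and uses a hypothetical `checkout` Deployment in a `shop` namespace.

```python
import json


def vpa_recommend_only(deployment, namespace):
    """VerticalPodAutoscaler that only publishes recommendations."""
    return {
        "apiVersion": "autoscaling.k8s.io/v1",
        "kind": "VerticalPodAutoscaler",
        "metadata": {"name": f"{deployment}-vpa", "namespace": namespace},
        "spec": {
            "targetRef": {"apiVersion": "apps/v1", "kind": "Deployment", "name": deployment},
            # "Off": compute recommendations only; never evict or resize pods.
            "updatePolicy": {"updateMode": "Off"},
        },
    }


print(json.dumps(vpa_recommend_only("checkout", "shop"), indent=2))
```

After applying it, `kubectl describe vpa checkout-vpa -n shop` shows the per-container target and bounds, which you can review before switching to an automatic mode.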

2. Use spot instances

Spot instances (or preemptible VMs in Google Cloud) can significantly reduce compute costs by using spare capacity. They can be reclaimed with minimal notice, so workloads must tolerate interruptions.

Use pod disruption budgets, taints/tolerations, and node affinity rules to safely run eligible workloads on spot nodes. Prioritize stateless and fault-tolerant workloads such as batch processing and horizontally scalable services.
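These controls are usually expressed as a toleration for a taint on spot nodes, a preferred (not required) node affinity so pods can still land on on-demand nodes, and a PodDisruptionBudget so drains never remove too many replicas at once. In this sketch the `example.com/capacity-type` key, the `worker` app, and its image are hypothetical, and spot nodes are assumed to carry that key both as a label and as a `NoSchedule` taint. Managed offerings apply their own node labels (for example `cloud.google.com/gke-spot` on GKE or `eks.amazonaws.com/capacityType` on EKS managed node groups), so check your provider's documentation.

```python
SPOT_KEY = "example.com/capacity-type"  # hypothetical; assumed as label and taint on spot nodes


def spot_scheduling(weight=80):
    """Pod-spec fields: tolerate the spot taint and prefer (not require) spot nodes."""
    return {
        "tolerations": [
            {"key": SPOT_KEY, "operator": "Equal", "value": "spot", "effect": "NoSchedule"}
        ],
        "affinity": {
            "nodeAffinity": {
                "preferredDuringSchedulingIgnoredDuringExecution": [{
                    "weight": weight,  # 1-100; higher means a stronger preference
                    "preference": {
                        "matchExpressions": [
                            {"key": SPOT_KEY, "operator": "In", "values": ["spot"]}
                        ]
                    },
                }]
            }
        },
    }


def disruption_budget(app, min_available):
    """PodDisruptionBudget limiting how many `app` pods voluntary evictions may remove."""
    return {
        "apiVersion": "policy/v1",
        "kind": "PodDisruptionBudget",
        "metadata": {"name": f"{app}-pdb"},
        "spec": {"minAvailable": min_available, "selector": {"matchLabels": {"app": app}}},
    }


pod_spec = {"containers": [{"name": "worker", "image": "registry.example.com/worker:1.0"}]}
pod_spec.update(spot_scheduling())
pdb = disruption_budget("worker", "50%")
```

A PDB governs voluntary evictions such as node drains; it cannot stop the provider from reclaiming a spot VM, which is why an on-demand baseline still matters.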

For production, a hybrid approach (spot plus on-demand baseline capacity) helps balance savings and reliability.
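The trade-off in a hybrid pool is simple arithmetic. The sketch compares an all-on-demand pool of ten nodes with a split of 3 on-demand plus 7 spot nodes; the $0.40/hour price and 70% spot discount are hypothetical placeholders, since real spot discounts vary by instance type, region, and time.

```python
def pool_hourly_cost(on_demand_nodes, spot_nodes, on_demand_price, spot_discount):
    """Hourly cost of a node pool with an on-demand baseline plus spot capacity."""
    spot_price = on_demand_price * (1 - spot_discount)
    return on_demand_nodes * on_demand_price + spot_nodes * spot_price


all_on_demand = pool_hourly_cost(10, 0, 0.40, 0.70)
hybrid = pool_hourly_cost(3, 7, 0.40, 0.70)
savings = 1 - hybrid / all_on_demand
print(f"all on-demand ${all_on_demand:.2f}/h, hybrid ${hybrid:.2f}/h, saves {savings:.0%}")
```

With these assumed numbers the hybrid pool costs about $2.04/hour versus $4.00/hour, while three nodes of capacity remain immune to reclamation.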

3. Deploy automated governance

Automated governance provides ongoing cost control through policy-as-code enforcement and runtime controls across the Kubernetes lifecycle.

- Require requests and limits for all workloads

- Enforce storage class usage based on workload requirements

- Mandate labels for cost allocation and ownership

- Automatically shut down or scale down development environments during off-hours
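Two of these rules are easy to express as code. The sketch below checks an object for a required set of cost-allocation labels and decides whether a non-production workload should be scaled to zero. The label keys, the 08:00-19:00 weekday window, and the assumption that `now` is already in the team's local time zone are all hypothetical choices.

```python
from datetime import datetime

REQUIRED_LABELS = ("team", "cost-center", "env")  # hypothetical labeling standard


def missing_labels(obj, required=REQUIRED_LABELS):
    """Required label keys that are absent or empty on a Kubernetes object."""
    labels = obj.get("metadata", {}).get("labels") or {}
    return [key for key in required if not labels.get(key)]


def off_hours_replicas(now, normal_replicas, start_hour=8, end_hour=19):
    """Replica count for a dev workload: normal during weekday working hours, else zero."""
    working = now.weekday() < 5 and start_hour <= now.hour < end_hour
    return normal_replicas if working else 0


deployment = {"metadata": {"labels": {"team": "payments", "env": ""}}}
print(missing_labels(deployment))                       # ['cost-center', 'env']
print(off_hours_replicas(datetime(2024, 1, 6, 10), 3))  # Saturday -> 0
```

A scheduled job (for example a Kubernetes CronJob) could apply the replica decision by scaling the Deployment, and the label check fits naturally alongside the requests/limits rule in an admission policy.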

Tools like DoiT’s CloudFlow can help enforce policies, prevent noncompliant resources from being created, and maintain cost discipline across teams.

4. Use granular monitoring

Cost optimization requires visibility into consumption patterns. Effective monitoring typically includes:

- Pod-level utilization metrics

- Node efficiency statistics

- Namespace- and label-based cost allocation

- Historical trending and anomaly detection

This granularity helps identify optimization opportunities and attribute costs to teams, projects, or applications. Many environments struggle with incomplete labeling, which reduces allocation accuracy. Native cloud billing tools often lack Kubernetes-specific detail, so Kubernetes-aware tooling is frequently required.
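Label-based allocation can be sketched as splitting a cluster's cost by each pod's share of allocatable CPU, with unlabeled pods and unrequested capacity kept visible as their own buckets rather than silently spread across teams. This is a deliberate simplification: real allocators also weigh memory, GPUs, and storage, and some charge the greater of request and usage. The pod record shape, the `team` label, and the figures are hypothetical.

```python
from collections import defaultdict


def allocate_cost(pods, cluster_cost, cluster_cpu_m, label="team"):
    """Split cluster_cost by CPU-request share; report unlabeled and idle separately."""
    shares = defaultdict(float)
    requested_m = 0
    for pod in pods:
        owner = pod["labels"].get(label) or "(unallocated)"
        shares[owner] += cluster_cost * pod["cpu_request_m"] / cluster_cpu_m
        requested_m += pod["cpu_request_m"]
    shares["(idle)"] += cluster_cost * (cluster_cpu_m - requested_m) / cluster_cpu_m
    return dict(shares)


pods = [
    {"labels": {"team": "search"}, "cpu_request_m": 4000},
    {"labels": {"team": "billing"}, "cpu_request_m": 2000},
    {"labels": {}, "cpu_request_m": 1000},  # missing label
]
print(allocate_cost(pods, cluster_cost=100.0, cluster_cpu_m=10000))
```

Reporting the "(unallocated)" bucket explicitly makes labeling gaps show up as a number someone owns, instead of quietly inflating every team's share.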

The most effective monitoring solutions integrate into existing observability stacks while providing Kubernetes cost insights (allocation, efficiency, and recommendations).

5. Apply pod-scaling techniques

Pod scaling complements rightsizing and improves utilization. Use HPA based on metrics that reflect real workload demand (often queue depth, latency, or throughput rather than CPU alone). Not all workloads benefit from HPA—stateful services and slow-start apps may need alternative approaches.
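The HPA's core rule, as described in the Kubernetes documentation, is `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, skipped when the ratio is within a tolerance (0.1 by default). The sketch applies that rule to a hypothetical per-pod queue-depth metric and clamps to min/max replicas; it omits the real controller's handling of unready pods, missing metrics, and scale-down stabilization windows.

```python
import math


def hpa_desired_replicas(current_replicas, current_value, target_value,
                         min_replicas=1, max_replicas=20, tolerance=0.1):
    """Replica count the HPA scaling rule would produce for one metric."""
    ratio = current_value / target_value
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: no change
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# Hypothetical: 4 workers, target of 100 queued messages per pod.
print(hpa_desired_replicas(4, current_value=150, target_value=100))  # 6
print(hpa_desired_replicas(4, current_value=105, target_value=100))  # 4 (within tolerance)
print(hpa_desired_replicas(4, current_value=40, target_value=100))   # 2
```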

Configure Cluster Autoscaler with care so node scale-up and scale-down behavior matches workload realities (warm-up time, scheduling constraints, and disruption requirements).
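One behavior worth understanding before tuning is when Cluster Autoscaler considers a node removable. Per its documentation, a node becomes a scale-down candidate roughly when the pods on it request less than a utilization threshold (`--scale-down-utilization-threshold`, default 0.5) of its allocatable CPU and memory, and it stays unneeded for `--scale-down-unneeded-time` (default 10 minutes). The sketch models only those two checks; the real autoscaler also verifies that the pods can be rescheduled elsewhere and respects PodDisruptionBudgets and other blockers.

```python
def scale_down_candidate(cpu_requested_m, cpu_allocatable_m,
                         mem_requested_mi, mem_allocatable_mi,
                         unneeded_minutes, threshold=0.5, unneeded_time_min=10):
    """Simplified model of Cluster Autoscaler's utilization-based scale-down check."""
    # Utilization is taken as the higher of the CPU and memory request ratios.
    utilization = max(cpu_requested_m / cpu_allocatable_m,
                      mem_requested_mi / mem_allocatable_mi)
    return utilization < threshold and unneeded_minutes >= unneeded_time_min


# Hypothetical 4 CPU / 8 GiB node with 1 CPU / 2 GiB requested, unneeded for 12 minutes.
print(scale_down_candidate(1000, 4000, 2048, 8192, unneeded_minutes=12))  # True
```

This is another reason inflated requests cost money: a node whose pods request 60% of it but use 10% never drops below the threshold, so the autoscaler keeps it.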

For predictable patterns, scheduled scaling can preemptively adjust capacity before demand changes, especially for business-hour or batch-processing workloads.
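A scheduled floor can be sketched as a function of the clock: raise the minimum replica count a little before the weekday peak so new pods and nodes are warm when traffic arrives, then drop it after hours. The 09:00-18:00 window, 30-minute lead time, and floor values are hypothetical, and applying the result (for example, a scheduled job that patches an HPA's `minReplicas`) is left out.

```python
from datetime import datetime, timedelta


def scheduled_min_replicas(now, peak_start_hour=9, peak_end_hour=18,
                           lead=timedelta(minutes=30), peak_min=10, offpeak_min=2):
    """Replica floor: peak_min from (peak start - lead) until peak end on weekdays."""
    start = now.replace(hour=peak_start_hour, minute=0, second=0, microsecond=0) - lead
    end = now.replace(hour=peak_end_hour, minute=0, second=0, microsecond=0)
    in_window = now.weekday() < 5 and start <= now < end
    return peak_min if in_window else offpeak_min


print(scheduled_min_replicas(datetime(2024, 1, 3, 8, 45)))  # Wednesday 08:45 -> 10
print(scheduled_min_replicas(datetime(2024, 1, 3, 8, 15)))  # before the lead window -> 2
```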

Why Kubernetes cost optimization matters

Beyond the obvious financial benefits, Kubernetes cost optimization delivers strategic advantages. Efficient utilization reduces contention and supports more predictable scaling. It can also improve sustainability through lower energy consumption.

Risk mitigation is another critical advantage. Rightsizing and proper allocation reduce the chance of quota overruns and resource starvation, especially in shared environments. This helps prevent CPU throttling, OOMKills, and unschedulable pods caused by exhausted cluster capacity.

Well-optimized Kubernetes environments can also support faster delivery. When teams understand resource needs precisely, they can design cleaner CI/CD pipelines, reduce uncertainty, and simplify approvals—all contributing to business agility.

Perhaps most importantly, keeping Kubernetes costs under control improves forecasting. Finance teams gain confidence through unit economics and predictable chargeback/showback models, while engineering teams gain freedom to innovate without triggering unexpected spend.

Potential obstacles to lowering your Kubernetes costs

Organizations often encounter challenges when optimizing Kubernetes costs. Technical complexity can be a barrier, since cost optimization requires expertise in scheduling behavior, autoscaling, and cost observability.

Cross-team coordination can also be difficult. Effective optimization requires collaboration between finance, engineering, and operations teams that may have different incentives.

Resistance to change may emerge when teams fear optimization will reduce reliability. Education is essential: good cost optimization often improves reliability by preventing noisy-neighbor issues and resource contention.

Cost visibility is a persistent challenge because Kubernetes has no built-in cost tracking. Many organizations deploy clusters without planning for cost attribution, then struggle to stitch together metrics, labels, and billing data after the fact. Integrating cost signals into daily workflows helps teams make cost-conscious choices from day one.

Finally, many organizations struggle to sustain their savings. One-time efforts can cut costs, but without ongoing governance and accountability, spending often creeps back up.

Tools for Kubernetes cost optimization and monitoring

Effective Kubernetes cost optimization requires tools that monitor, analyze, and help manage resource usage.

1. DoiT

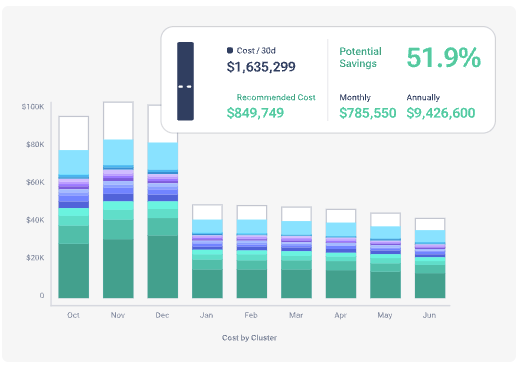

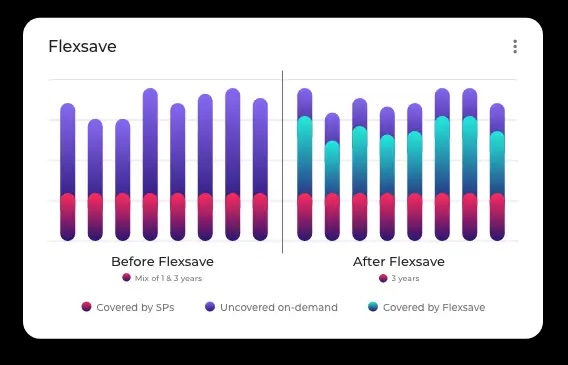

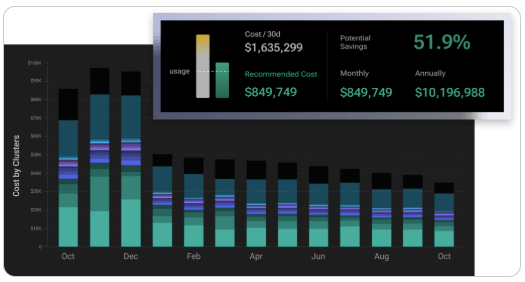

DoiT provides Kubernetes cost optimization capabilities through its platform. Flexsave for Kubernetes supports intelligent optimization that reduces costs while maintaining performance and availability.

The platform analyzes consumption patterns and delivers Kubernetes-specific recommendations and automation, supporting better alignment between finance and engineering teams.

Trax, for instance, saved 75% on Kubernetes spend with PerfectScale by DoiT. DoiT also helps correlate Kubernetes spending with business outcomes and unit economics.

2. AWS Cost Explorer

For Kubernetes on AWS (including EKS), AWS Cost Explorer provides visibility into container-related spending when tagging and allocation are configured correctly.

While not Kubernetes-native, Cost Explorer can identify trends and anomalies in EKS spend. Combined with AWS Compute Optimizer, it can support instance rightsizing for node groups.

3. Azure Cost Management + Billing

Azure Cost Management supports AKS cost tracking via tagging, grouping, and budgeting. Container insights continue to improve, but recommendations and allocation often require extra configuration and disciplined labeling.

4. GKE Usage Metering

Google Cloud supports namespace-level attribution via GKE usage metering, which provides more granular visibility than standard billing reports.

When paired with Google Cloud recommendations, it can help identify optimization opportunities based on usage patterns.

FinOps-style KPIs for Kubernetes cost control

To sustain cost optimization, track KPIs that connect technical decisions to spend:

- Cluster efficiency: requested vs. used CPU/memory across namespaces

- Workload efficiency: request/limit alignment and throttling/OOM events

- Idle waste: unused nodes, empty namespaces, orphaned PVCs and load balancers

- Autoscaling effectiveness: scale events vs. SLO impact

- Unit cost: cost per request, job, customer, or workload output
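Several of these KPIs reduce to simple ratios once the inputs are collected. The sketch computes request efficiency (used vs. requested CPU), the share of allocatable CPU nobody requested, and cost per 1,000 requests from one hypothetical monthly snapshot; how you gather the inputs (metrics pipeline, billing export) is outside its scope.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    allocatable_cpu_m: int   # total allocatable CPU across nodes, millicores
    requested_cpu_m: int     # sum of pod CPU requests
    used_cpu_m: int          # average CPU actually used
    monthly_cost: float      # cluster spend for the period
    requests_served: int     # business output for the same period


def kpis(s):
    """Efficiency, unrequested share, and unit cost for one snapshot."""
    return {
        "request_efficiency": s.used_cpu_m / s.requested_cpu_m,
        "unrequested_share": (s.allocatable_cpu_m - s.requested_cpu_m) / s.allocatable_cpu_m,
        "cost_per_1k_requests": s.monthly_cost / (s.requests_served / 1000),
    }


print(kpis(Snapshot(64000, 48000, 18000, 12000.0, 30_000_000)))
```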

Kubernetes cost optimization FAQ

How do I reduce Kubernetes costs without hurting performance?

Start with measurement, then rightsize requests based on real usage, enable autoscaling, and remove idle resources. Make changes gradually (VPA recommendation mode, staged rollouts) and track SLOs to confirm performance stays stable.

What is the fastest way to lower Kubernetes costs?

The fastest wins usually come from fixing inflated requests, shutting down idle non-production clusters, removing orphaned storage, and implementing cluster autoscaling with sensible constraints.

What tools help with Kubernetes cost allocation?

Kubernetes-aware tools that use namespace and label allocation help most. Cloud billing tools can help only when your tagging/labeling strategy is consistent and integrated with cluster metadata.

Are spot instances safe for Kubernetes?

Yes, for interruption-tolerant workloads. Use pod disruption budgets, node taints/tolerations, graceful termination handling, and a baseline of on-demand nodes for critical services.
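Graceful termination handling mostly means reacting to SIGTERM: when a pod is evicted (including when a spot node is drained), its containers receive SIGTERM, followed by SIGKILL once `terminationGracePeriodSeconds` (30 seconds by default) expires. The minimal Python worker sketch below stops taking new jobs after SIGTERM; the job list and `process` callback are hypothetical stand-ins for a real queue consumer.

```python
import signal
import threading

stop_requested = threading.Event()


def handle_sigterm(signum, frame):
    """Record the shutdown request; the work loop checks the flag between jobs."""
    stop_requested.set()


signal.signal(signal.SIGTERM, handle_sigterm)


def run_worker(jobs, process):
    """Process jobs in order until SIGTERM arrives; return the finished results."""
    finished = []
    for job in jobs:
        if stop_requested.is_set():
            break  # leave remaining jobs for another replica (e.g. requeue them)
        finished.append(process(job))
    return finished
```

Keep the shutdown path shorter than the grace period, and remember that a spot reclamation notice can be shorter still, so long-running jobs should checkpoint their progress.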

Next steps for sustainable Kubernetes spending

Implementing sustainable optimization requires a structured approach. Start by baselining current Kubernetes spend by cluster, namespace, and application. This baseline makes progress measurable.

Prioritize quick wins such as cleaning idle resources and enforcing basic governance policies. Early momentum helps teams adopt more advanced optimizations like autoscaling tuning and workload-specific efficiency improvements.

Form a cross-functional cost optimization group with finance, engineering, and operations stakeholders. Collaboration ensures technical decisions align with financial goals without sacrificing reliability.

Finally, establish recurring review cycles. Kubernetes environments evolve continuously, so cost optimization must be ongoing rather than a one-time project.

Want to go deeper? Download our FinOps Guide to Kubernetes Costs and Complexity to learn how to budget strategically for scalable operations in Kubernetes.