Generative AI is rapidly moving from experimentation to execution. But while building a GenAI prototype has become easier than ever, scaling GenAI projects sustainably remains a major challenge. The teams that succeed aren’t just focused on model performance — they’re focused on business impact, cost control, and repeatable ROI.

Without the right foundation, GenAI initiatives can quickly stall in pilot mode or drive unpredictable cloud spend without clear outcomes. This guide outlines a proven framework for scaling GenAI projects without blowing the budget, grounded in real-world lessons from enterprise deployments across industries.

Why Generative AI ROI Breaks Down at Scale

Many GenAI projects don’t fail because the technology doesn’t work.

They fail because technical success is not the same as business success.

A solution can generate impressive outputs and still deliver zero ROI if:

- the problem isn’t tied to measurable outcomes

- the scope is too broad

- costs aren’t tracked early

- adoption is low

- scaling introduces unpredictable spend

To maximize generative AI ROI, organizations need to treat ROI as a design constraint from the start — not something measured after deployment.

Step 1: Choose the Right GenAI Use Case for ROI

The highest-return GenAI projects are rarely the flashiest. They tend to solve problems that are:

- repeatable

- measurable

- operationally important

- low-risk to pilot

- easy to evaluate

A useful filter is the SMART framework:

- Specific: What task is changing?

- Measurable: What improves?

- Achievable: Can GenAI reliably support it?

- Relevant: Does it map to real business impact?

- Time-bound: When will success be evaluated?

Avoid Starting Too Broad

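A common mistake is beginning with vague goals like: “Build an AI assistant to improve productivity across the organization.” This sounds compelling, but it’s difficult to measure, constrain, or scale. A narrower goal, such as drafting first replies for a single support queue and tracking handling time, gives the team something concrete to test.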

Why Internal-First GenAI Projects Often Deliver Faster ROI

Many organizations see early success by starting internally, where:

- error risk is lower

- feedback loops are faster

- workflows are well-defined

- cost savings are easier to quantify

Internal GenAI workloads are often the most reliable foundation to build on before expanding to customer-facing use cases.

Step 2: Quantify ROI Before You Build

Scaling GenAI projects requires more than excitement — it requires metrics. Before writing code, teams should establish baseline data:

- How often does this workflow occur?

- How long does it take today?

- What does it cost in time and effort?

- What is the current error rate?

- What happens if the AI is wrong?

A Simple Starting ROI Model

Monthly Opportunity = (Volume × Cost per Task) − AI Operating Cost

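Even directional estimates help teams justify investment and prioritize high-return projects. Keep every term on the same monthly basis, and count only the per-task cost the AI actually removes, not the full cost of the task, when a person still reviews the output.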
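As a minimal sketch, the model above translates into a few lines of Python. The function name, parameter names, and sample figures are illustrative assumptions, not data from a real deployment.

```python
def monthly_opportunity(volume: int, cost_per_task: float, ai_operating_cost: float) -> float:
    """Monthly Opportunity = (Volume x Cost per Task) - AI Operating Cost.

    All three inputs are assumed to cover the same month.
    """
    if volume < 0 or cost_per_task < 0 or ai_operating_cost < 0:
        raise ValueError("inputs must be non-negative")
    return volume * cost_per_task - ai_operating_cost


# Hypothetical figures: 2,000 tasks a month at $5.00 each, $1,500 a month to run the AI.
estimate = monthly_opportunity(volume=2000, cost_per_task=5.0, ai_operating_cost=1500.0)
print(f"Estimated monthly opportunity: ${estimate:,.2f}")
```

A negative result is a useful signal too: at the current volume, the workflow would cost more to automate than it saves.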

No Baseline Yet? Start Small

If there’s no historical measurement, begin with a narrow pilot designed to collect:

- time savings

- adoption rate

- error rates and the thresholds users will tolerate

- cost-per-outcome signals

Measurement is what turns a GenAI experiment into a scalable business initiative.
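One way to collect those pilot signals is to log each task and summarize the log at the end of the pilot. The sketch below assumes a hypothetical `PilotTask` record and a manually measured baseline; the field names and sample values are placeholders, not a prescribed schema.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class PilotTask:
    user: str
    used_ai: bool
    minutes_taken: float
    ai_cost: float   # dollars spent on model calls for this task
    accepted: bool   # True if the output was usable without rework


def summarize_pilot(tasks, baseline_minutes, eligible_users):
    """Summarize pilot records into adoption, time saved, error rate, and cost per outcome."""
    ai_tasks = [t for t in tasks if t.used_ai]
    accepted = [t for t in ai_tasks if t.accepted]
    if not accepted or eligible_users <= 0:
        raise ValueError("need at least one accepted AI-assisted task and one eligible user")
    return {
        "adoption_rate": len({t.user for t in ai_tasks}) / eligible_users,
        "avg_minutes_saved": baseline_minutes - mean(t.minutes_taken for t in ai_tasks),
        "error_rate": 1 - len(accepted) / len(ai_tasks),
        # Rejected attempts still cost money, so all AI spend is divided by accepted outputs.
        "cost_per_outcome": sum(t.ai_cost for t in ai_tasks) / len(accepted),
    }


# Hypothetical pilot log: four eligible users, 30-minute manual baseline.
pilot_log = [
    PilotTask("ana", True, 10.0, 0.25, True),
    PilotTask("ana", True, 14.0, 0.25, True),
    PilotTask("ben", True, 12.0, 0.50, False),
    PilotTask("cy", False, 30.0, 0.0, True),
]
summary = summarize_pilot(pilot_log, baseline_minutes=30.0, eligible_users=4)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```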

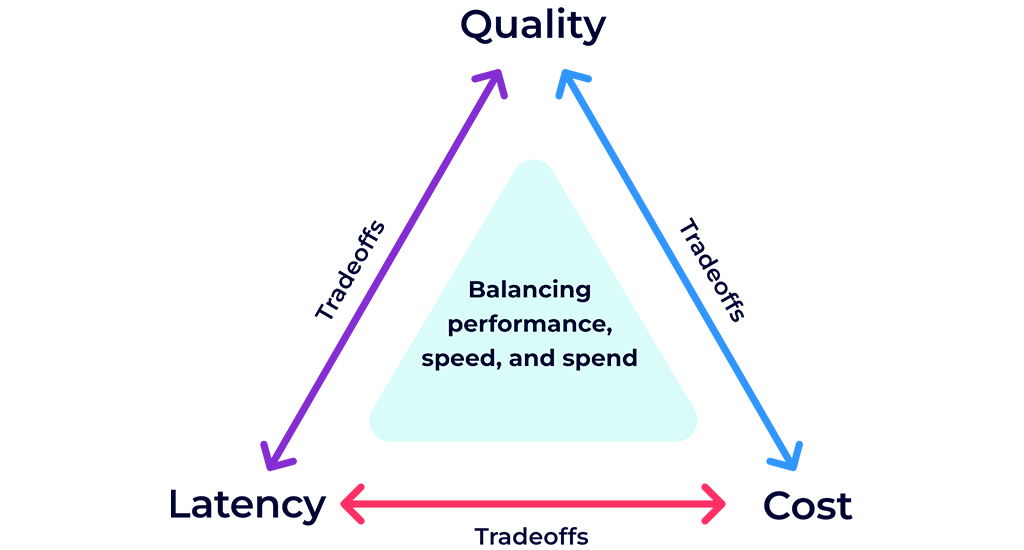

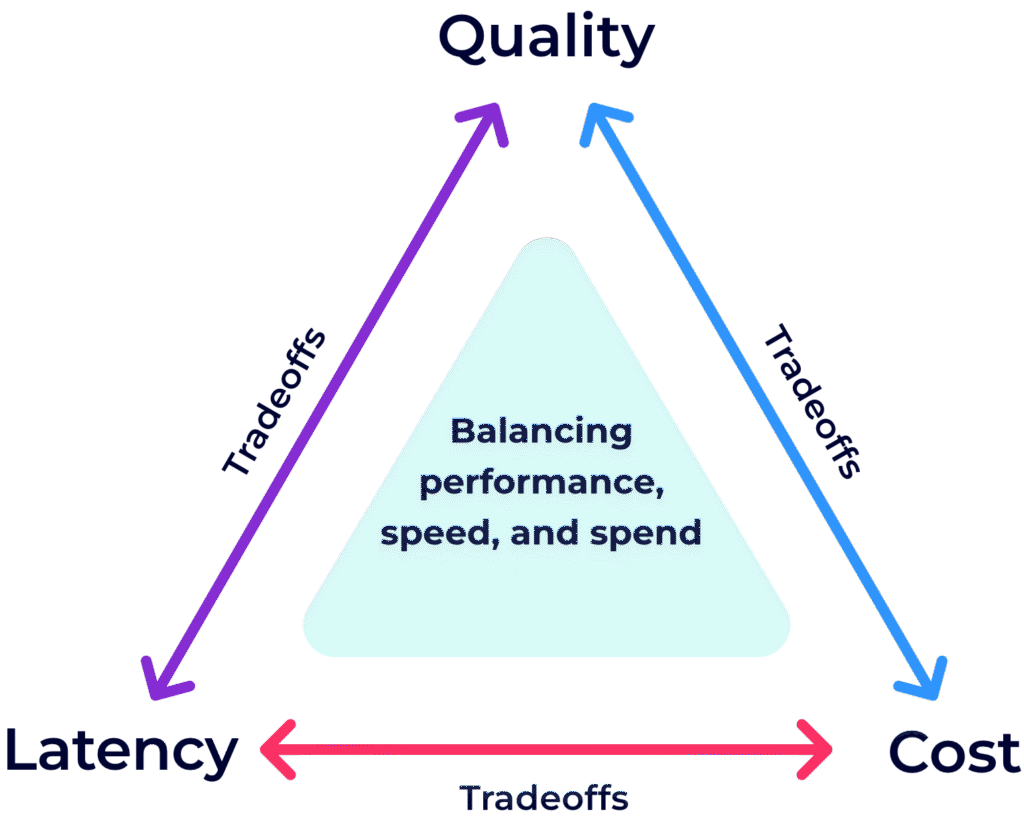

Step 3: Balance Cost, Latency, and Quality

Every scalable GenAI system faces an unavoidable trade-off triangle:

- Cost (token usage, model choice, infrastructure)

- Latency (speed and user experience)

- Quality (accuracy, safety, reliability)

Optimizing one often increases pressure on the others.

Practical Implications for GenAI Cost Optimization

- More context increases cost and response time

- More safeguards often require additional model calls

- Faster responses may reduce depth or completeness

- Perfection is rarely cost-effective at scale

The key is asking: Which factor matters most for this workload — and what trade-offs are acceptable?
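To make the trade-off concrete, the sketch below estimates cost and latency for one request with and without an extra safeguard call. The token counts, per-million-token prices, and latencies are made-up placeholders; real values depend on the provider and model.

```python
from dataclasses import dataclass


@dataclass
class ModelCall:
    name: str
    input_tokens: int
    output_tokens: int
    input_price_per_million: float    # hypothetical dollars per 1M input tokens
    output_price_per_million: float   # hypothetical dollars per 1M output tokens
    latency_seconds: float            # assumed to be measured, not predicted


def call_cost(call: ModelCall) -> float:
    """Dollar cost of a single model call from its token counts and prices."""
    return (
        call.input_tokens * call.input_price_per_million
        + call.output_tokens * call.output_price_per_million
    ) / 1_000_000


def request_totals(calls: list[ModelCall]) -> tuple[float, float]:
    """Total cost and latency for calls that run one after another."""
    return sum(call_cost(c) for c in calls), sum(c.latency_seconds for c in calls)


answer = ModelCall("answer", 4000, 500, 1.0, 4.0, 2.0)
safety_check = ModelCall("safety_check", 600, 50, 1.0, 4.0, 0.5)

base_cost, base_latency = request_totals([answer])
guarded_cost, guarded_latency = request_totals([answer, safety_check])
print(f"answer only:       ${base_cost:.4f}, {base_latency:.1f}s")
print(f"answer + safeguard: ${guarded_cost:.4f}, {guarded_latency:.1f}s")
```

Running numbers like these per workload shows what a quality safeguard costs in money and response time, which makes the acceptable trade-off easier to agree on.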

Step 4: Treat FinOps as a Requirement for AI Workloads

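Generative AI costs are usage-driven and hard to predict: spend scales with the tokens each request consumes and produces, and output length varies from call to call. Small changes in prompts, retrieval, or workflow design can dramatically affect spend.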

That’s why FinOps for AI workloads must be built in early — not added later.

Organizations should track cost drivers by:

- project

- team

- user

- model

- token volume

- provider

Tagging and allocation are foundational. Without attribution, optimization is impossible.
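A minimal sketch of attribution, assuming each model call is logged as a record carrying tags and a cost. The record layout, tag names, and figures here are hypothetical.

```python
from collections import defaultdict


def cost_by(records: list[dict], dimension: str) -> dict[str, float]:
    """Sum logged cost per value of one tag, such as project, team, or model."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        # Calls without the tag are grouped together so the gap stays visible.
        key = record.get(dimension) or "untagged"
        totals[key] += record["cost"]
    return dict(totals)


# Hypothetical usage log; the last record is missing its project tag.
usage = [
    {"project": "support-bot", "team": "cx", "model": "small", "cost": 0.25},
    {"project": "support-bot", "team": "cx", "model": "large", "cost": 1.5},
    {"project": "contract-review", "team": "legal", "model": "large", "cost": 2.0},
    {"team": "legal", "model": "small", "cost": 0.5},
]

print(cost_by(usage, "project"))
print(cost_by(usage, "model"))
```

Reporting an explicit "untagged" bucket is a deliberate choice in this sketch: spend that cannot be attributed is the first thing to fix.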

The Hidden Cost Lever: Context Discipline

The fastest path to GenAI cost optimization is often reducing unnecessary context:

- retrieve only what’s needed

- summarize upstream

- avoid dumping full documents into prompts

- minimize redundant multi-call chains

Cost control comes from precision, not volume.
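One way to enforce context discipline is a hard token budget per prompt. The sketch below keeps the most relevant chunks that fit the budget. The relevance scores are assumed to come from an upstream retrieval step, and the four-characters-per-token estimate is a rough heuristic, not a real tokenizer.

```python
def estimate_tokens(text: str) -> int:
    # Rough heuristic (assumption): about 4 characters per token for English text.
    return max(1, len(text) // 4)


def fit_to_budget(chunks: list[tuple[float, str]], max_tokens: int) -> tuple[list[str], int]:
    """Keep the highest-scoring chunks whose combined estimated size fits the budget.

    chunks: (relevance_score, text) pairs. Returns the kept texts and tokens used.
    """
    selected: list[str] = []
    used = 0
    for _score, text in sorted(chunks, key=lambda c: c[0], reverse=True):
        size = estimate_tokens(text)
        if used + size <= max_tokens:
            selected.append(text)
            used += size
    return selected, used


# Hypothetical chunks: the low-relevance one is also the largest.
candidates = [(0.9, "a" * 40), (0.2, "b" * 400), (0.7, "c" * 80)]
kept, tokens_used = fit_to_budget(candidates, max_tokens=40)
print(f"kept {len(kept)} of {len(candidates)} chunks, about {tokens_used} tokens")
```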

Step 5: Scale GenAI Projects Gradually (POC → Beta → Production)

Scaling is not a switch. It’s a rollout discipline.

Proof of Concept (POC)

- validate feasibility

- define success criteria

- measure cost per outcome

Beta Deployment

- start with trusted internal teams

- encourage feedback and edge-case testing

- refine guardrails

Production (Soft Launch and Scaling)

- monitor spend against projections

- validate adoption and performance

- ensure production observability

- expand only when unit economics are proven

A key discipline: stop iterating once success criteria are met. Scaling requires momentum, not perfection.
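The staged rollout can be backed by an explicit promotion gate, so the decision to expand rests on agreed numbers. In this sketch the criteria, thresholds, and metric names are hypothetical; each team would define its own.

```python
from dataclasses import dataclass


@dataclass
class SuccessCriteria:
    max_cost_per_outcome: float
    min_adoption_rate: float
    max_spend_vs_projection: float   # e.g. 1.1 allows spend up to 10% over projection


@dataclass
class StageMetrics:
    cost_per_outcome: float
    adoption_rate: float
    actual_spend: float
    projected_spend: float


def unmet_criteria(metrics: StageMetrics, criteria: SuccessCriteria) -> list[str]:
    """Return the names of criteria the stage has not met; empty means ready to promote."""
    unmet = []
    if metrics.cost_per_outcome > criteria.max_cost_per_outcome:
        unmet.append("cost per outcome")
    if metrics.adoption_rate < criteria.min_adoption_rate:
        unmet.append("adoption rate")
    if metrics.actual_spend > criteria.max_spend_vs_projection * metrics.projected_spend:
        unmet.append("spend vs projection")
    return unmet


criteria = SuccessCriteria(max_cost_per_outcome=0.5, min_adoption_rate=0.6, max_spend_vs_projection=1.1)
beta_ok = StageMetrics(cost_per_outcome=0.4, adoption_rate=0.7, actual_spend=900.0, projected_spend=1000.0)
beta_over = StageMetrics(cost_per_outcome=0.8, adoption_rate=0.7, actual_spend=1200.0, projected_spend=1000.0)

print(unmet_criteria(beta_ok, criteria))
print(unmet_criteria(beta_over, criteria))
```

An empty list is the signal to stop iterating on the current stage and move to the next one.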

A Technical Principle: Retrieval Beats Massive Context

When GenAI systems need access to large internal datasets, the scalable pattern is:

- retrieval-augmented generation (RAG)

- structured queries

- scoped access

- least-privilege permissions

Putting entire databases or documents into the context window increases:

- token costs

- latency

- risk of exposing data the user should not see

- unpredictability in both outputs and spend

Efficient retrieval is essential for long-term ROI.
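The pattern above can be illustrated with a toy retriever that filters by permission first and then returns only the top few matches. Keyword overlap stands in for a real search or embedding index here, and the `Doc` type, group names, and documents are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    allowed_groups: frozenset   # groups permitted to read this document


def retrieve(query: str, docs: list[Doc], user_groups: set[str], top_k: int = 2) -> list[Doc]:
    """Return up to top_k documents the user may read, ranked by shared query words."""
    terms = set(query.lower().split())
    scored = []
    for doc in docs:
        if doc.allowed_groups.isdisjoint(user_groups):
            continue  # least privilege: unreadable documents never reach the prompt
        overlap = len(terms & set(doc.text.lower().split()))
        if overlap:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


corpus = [
    Doc("hr-1", "vacation policy and leave days", frozenset({"hr", "all"})),
    Doc("fin-1", "quarterly revenue forecast policy", frozenset({"finance"})),
    Doc("it-1", "laptop replacement policy", frozenset({"all"})),
]

hits = retrieve("vacation policy", corpus, user_groups={"all"}, top_k=1)
print([doc.doc_id for doc in hits])
```

Only the selected documents are placed in the prompt, so token cost tracks the size of the answer's evidence, not the size of the corpus.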

Frequently Asked Questions About Scaling GenAI ROI

How do you measure ROI for generative AI projects?

Start with baseline workflow cost and time, then measure improvements in speed, volume handled, and cost-per-outcome after deployment.

What is FinOps for AI workloads?

FinOps for AI applies cost attribution, tagging, and spend governance to token-based GenAI systems so organizations can scale predictably.

How can organizations reduce GenAI operating costs?

The highest-impact levers are monitoring token usage, reducing unnecessary context, selecting appropriate models, and optimizing retrieval workflows.

Sustainable GenAI ROI Requires Discipline

Scaling GenAI projects without overspending comes down to:

- choosing measurable, high-impact problems

- quantifying ROI early

- balancing cost, latency, and quality

- building FinOps governance from day one

- iterating in controlled stages before scaling

When done correctly, GenAI becomes a durable business lever — not an expensive experiment.